
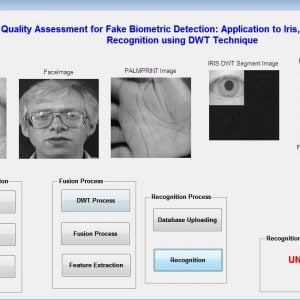

Fake Biometric Detection using DWT Technique with Secret Key Analysis

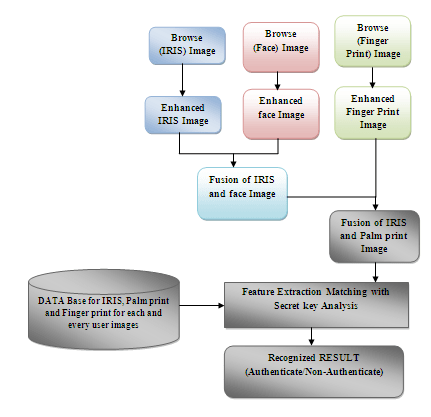

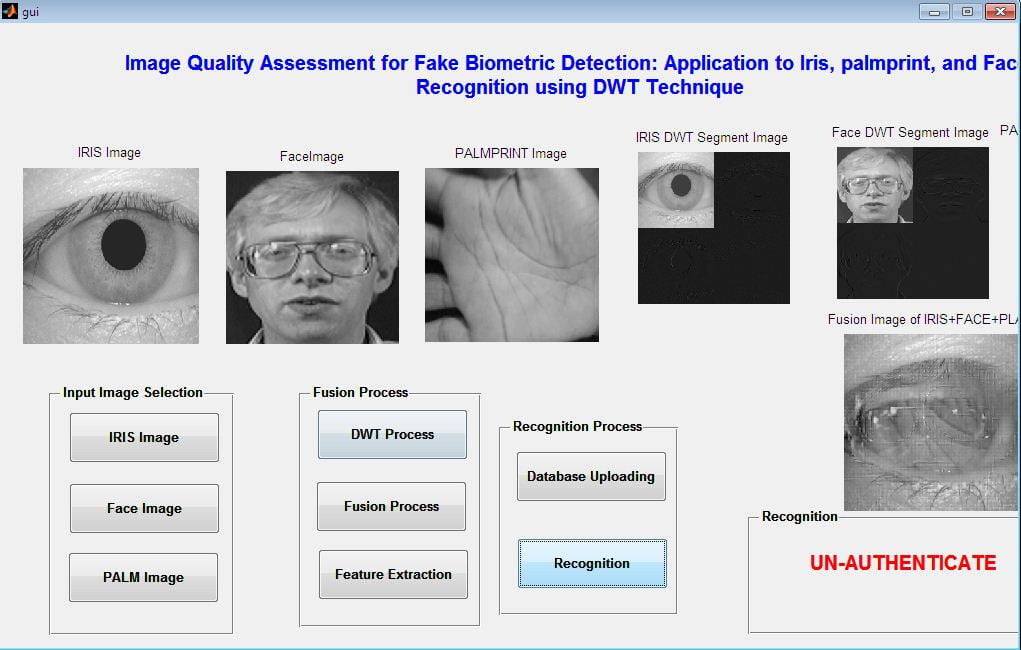

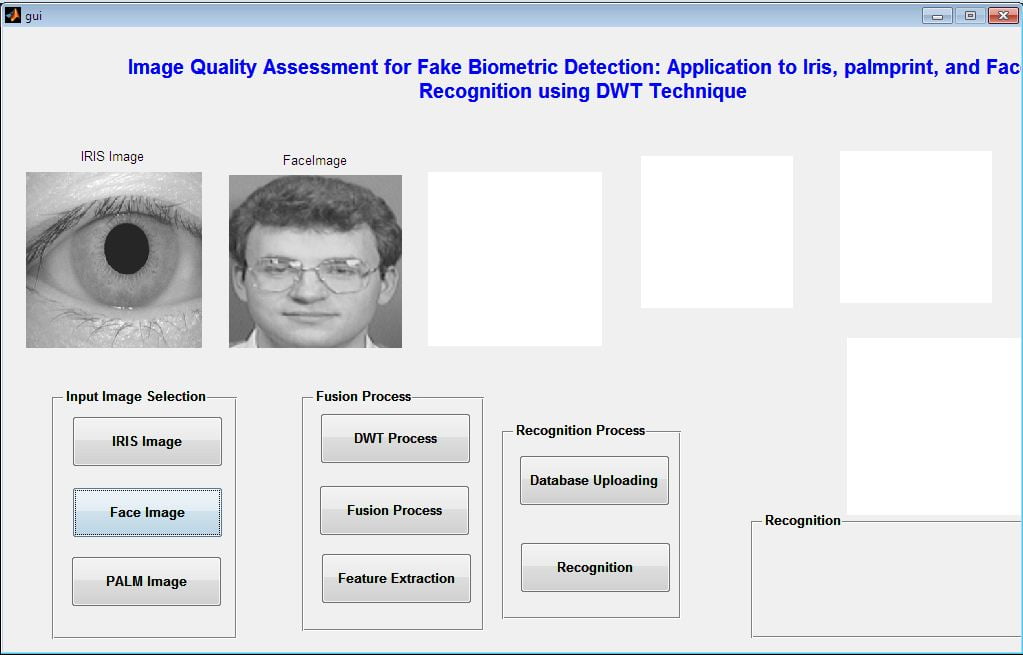

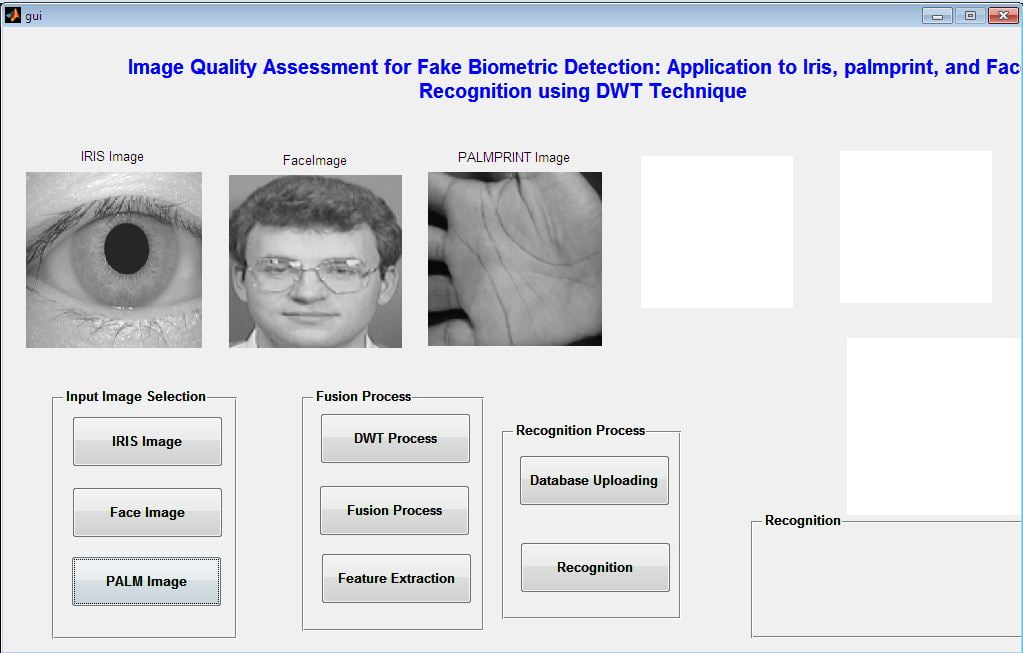

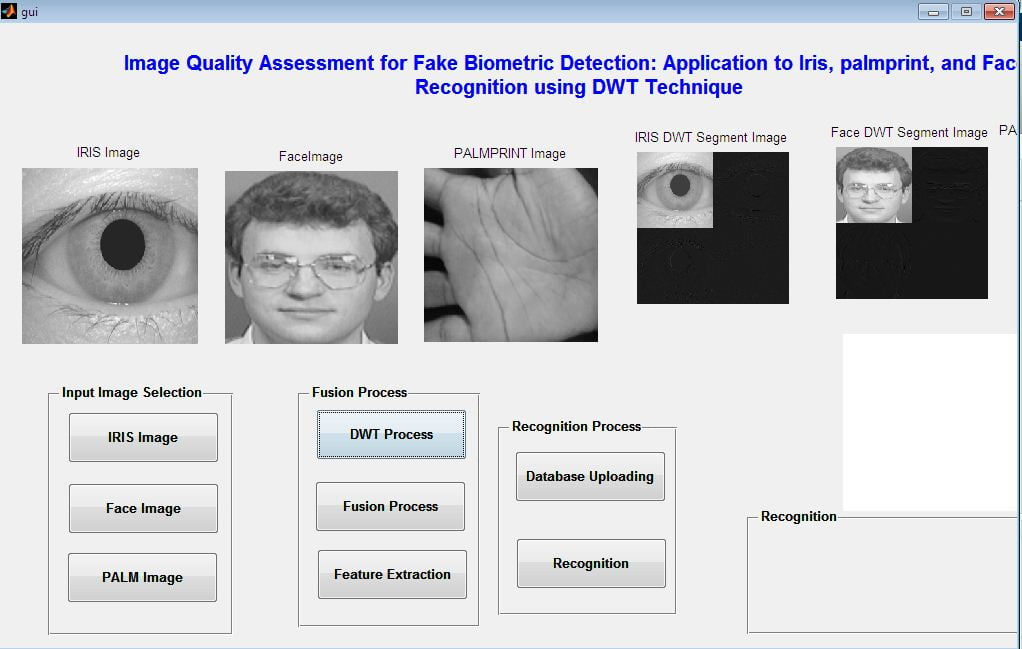

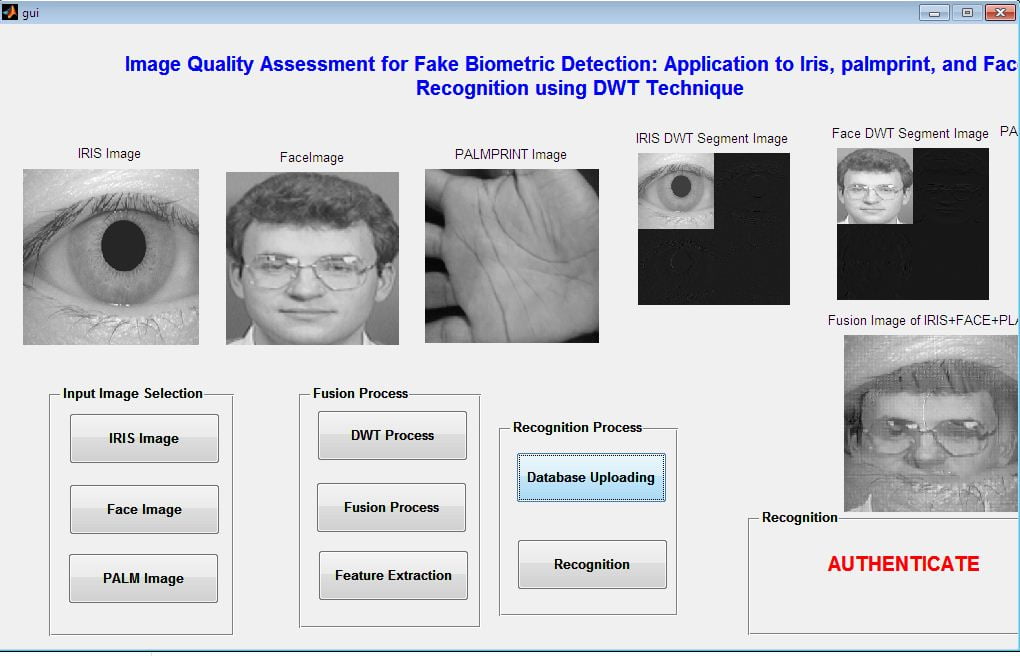

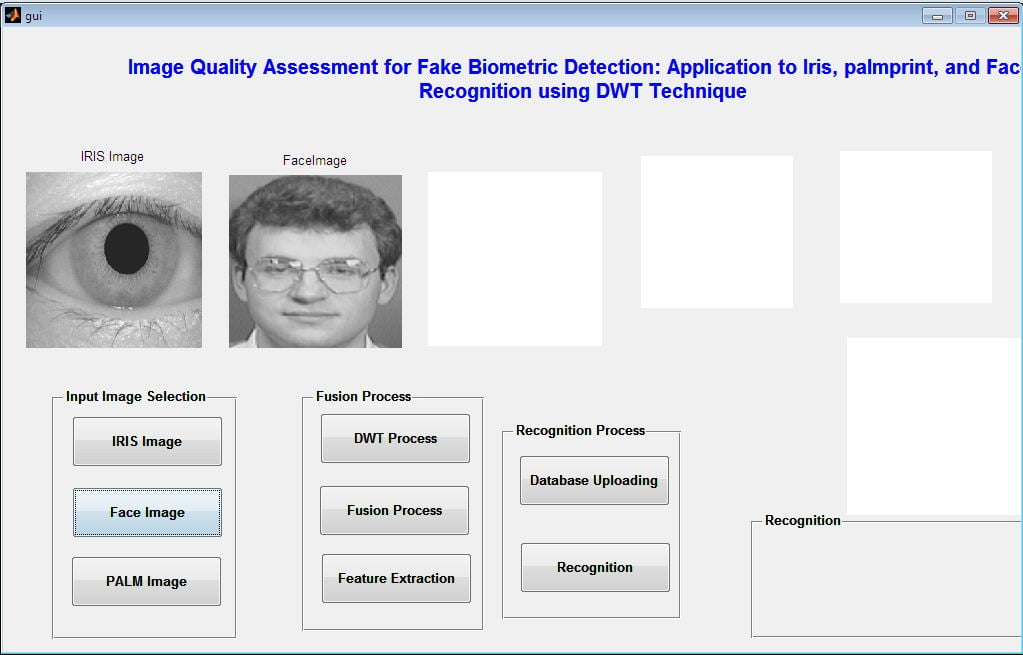

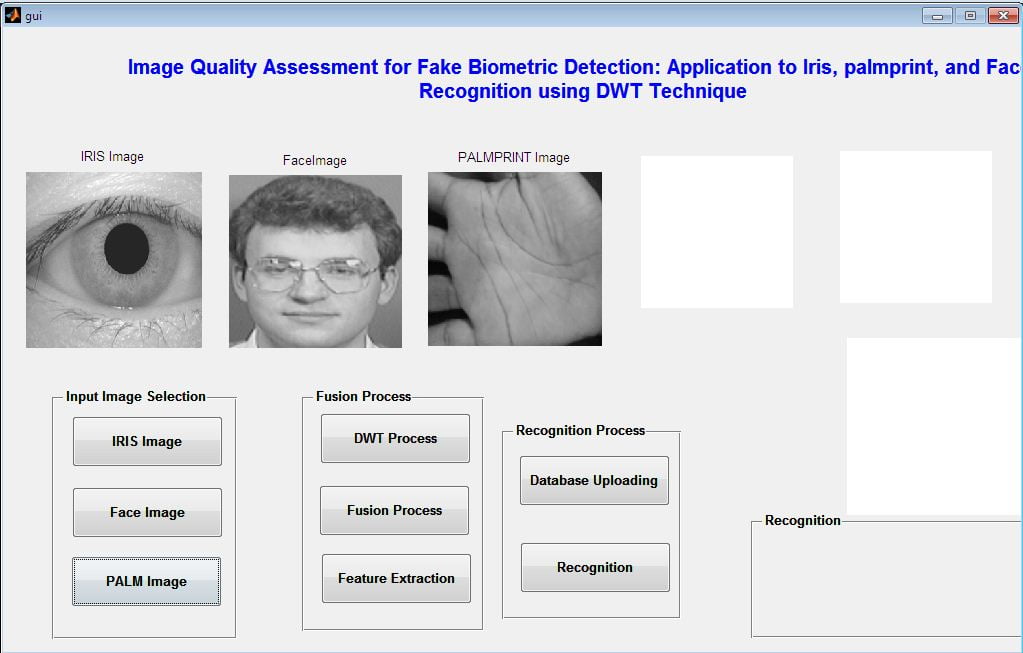

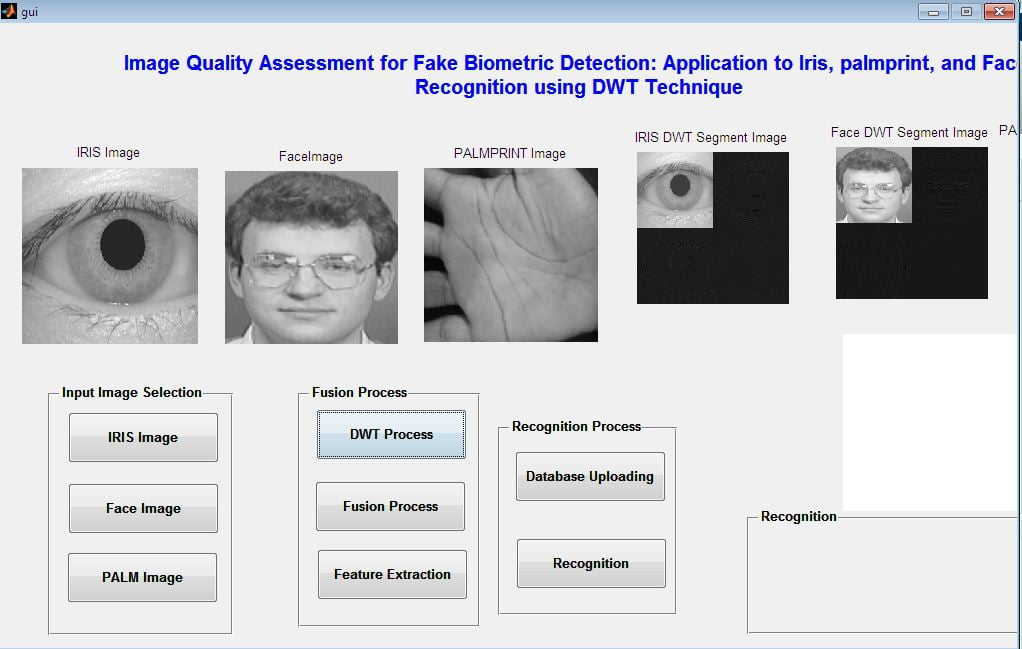

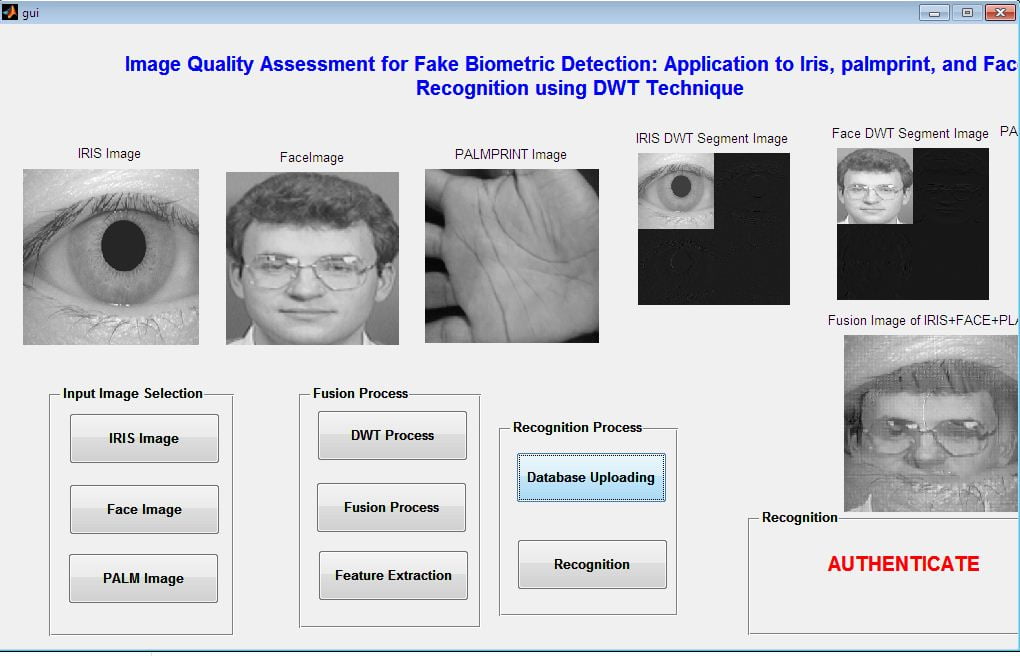

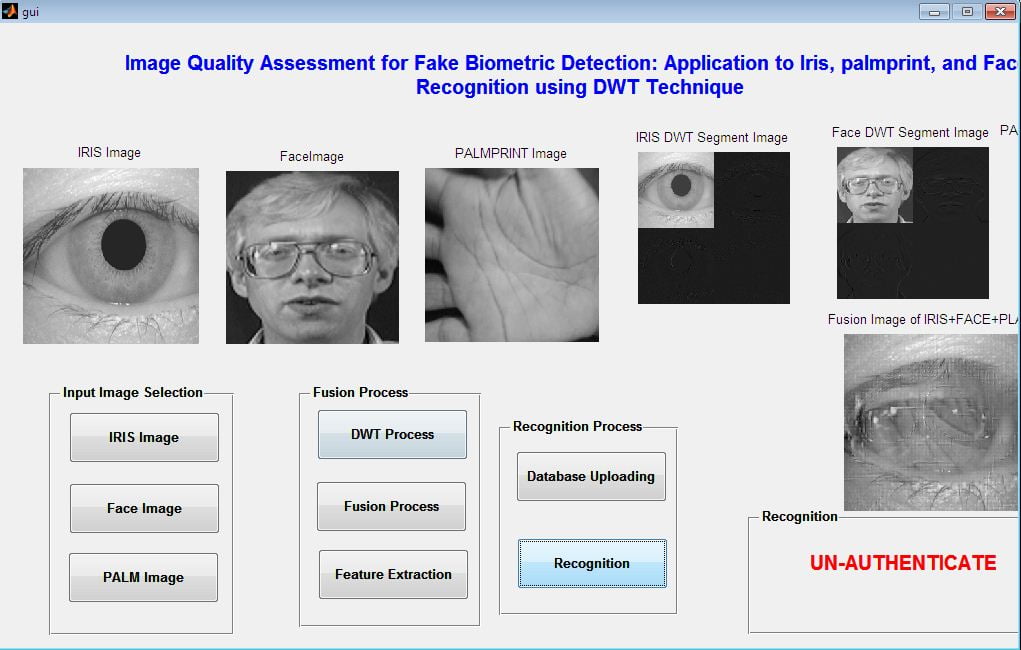

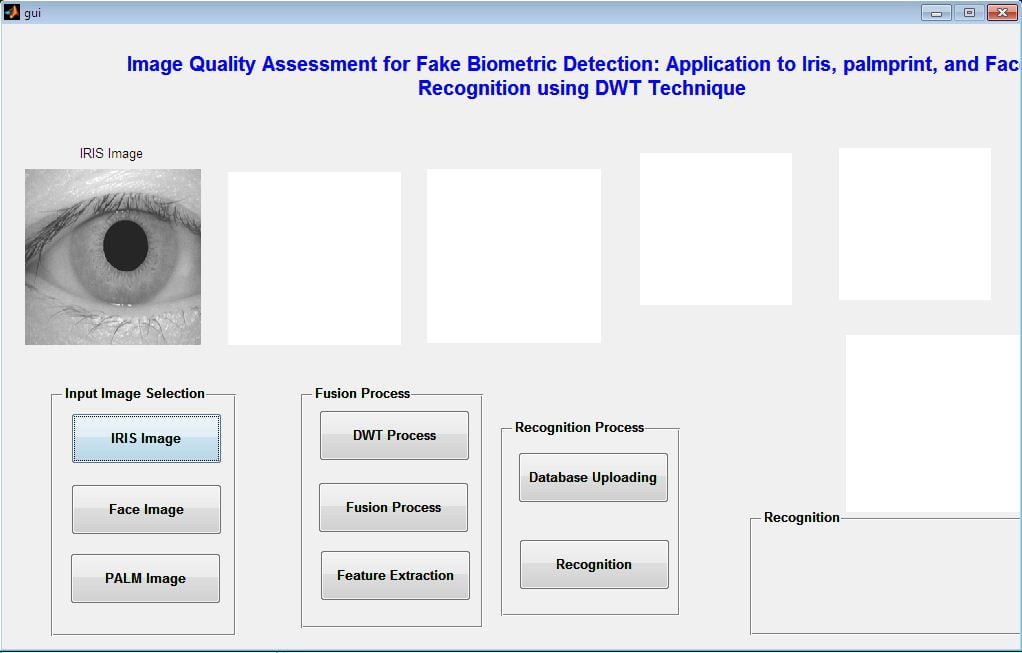

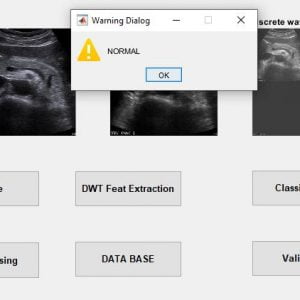

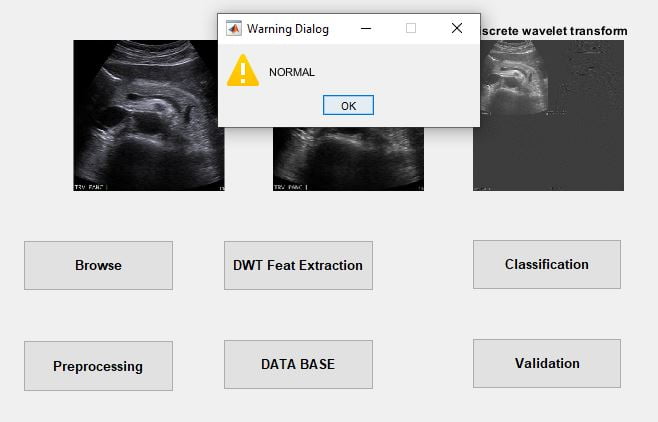

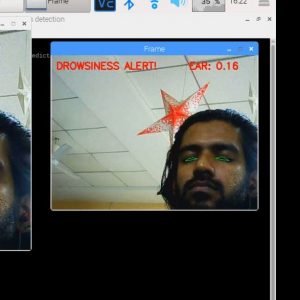

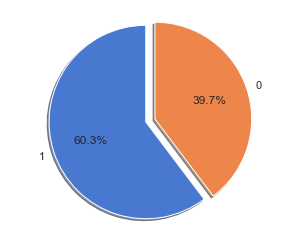

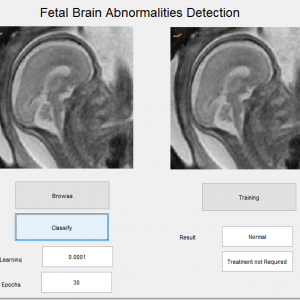

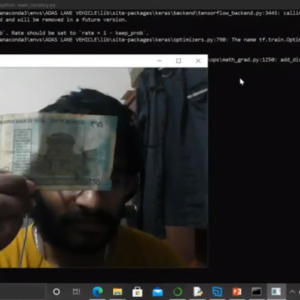

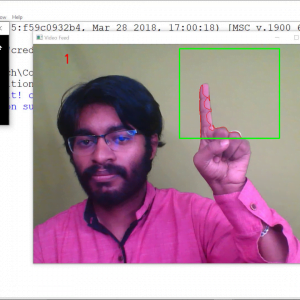

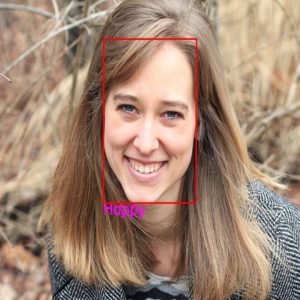

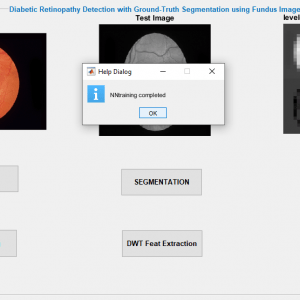

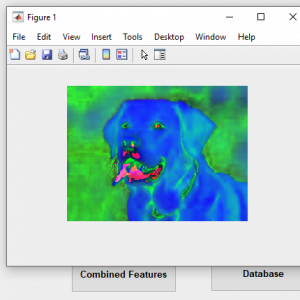

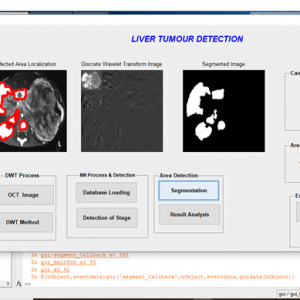

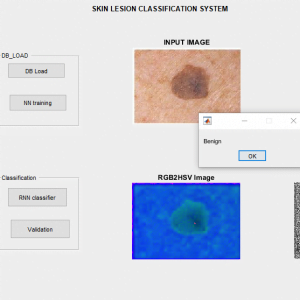

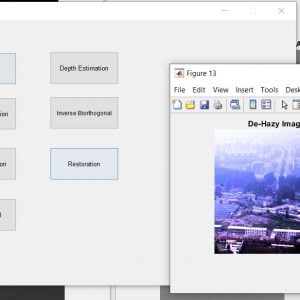

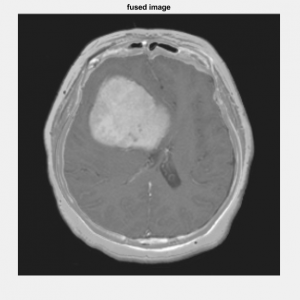

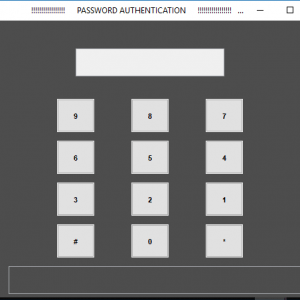

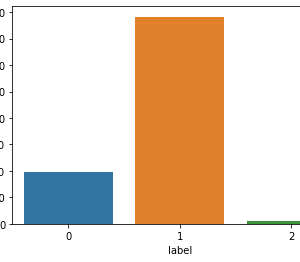

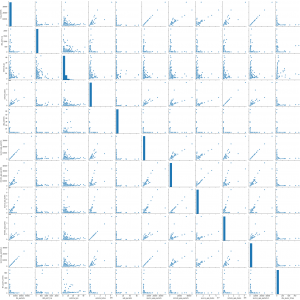

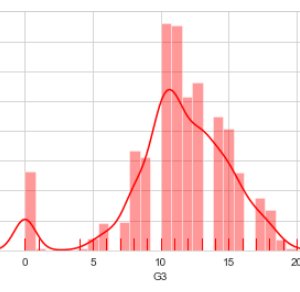

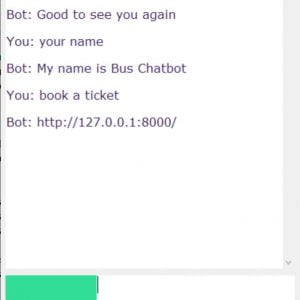

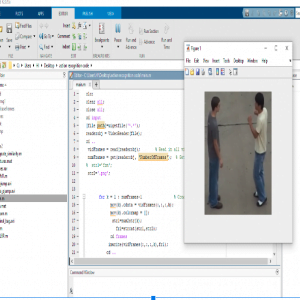

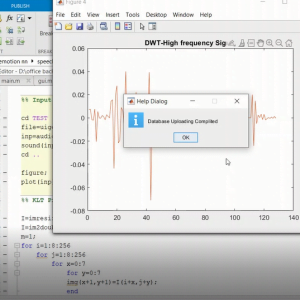

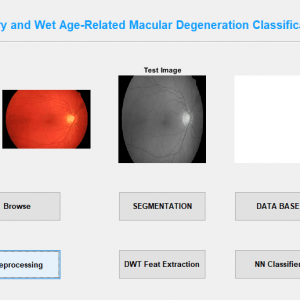

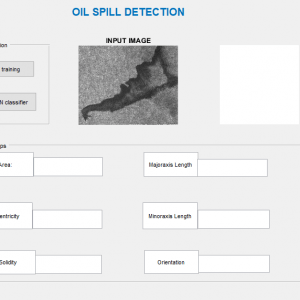

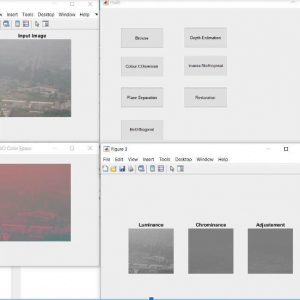

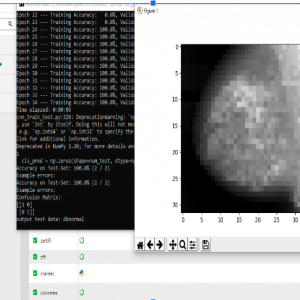

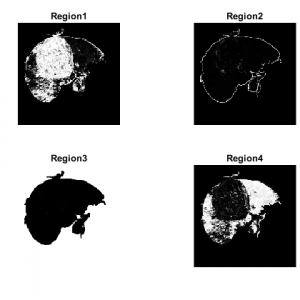

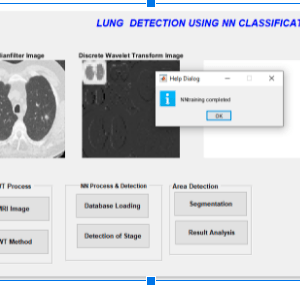

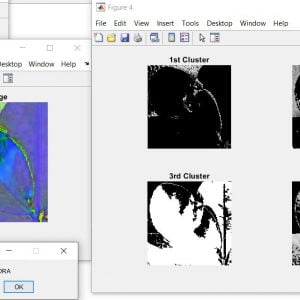

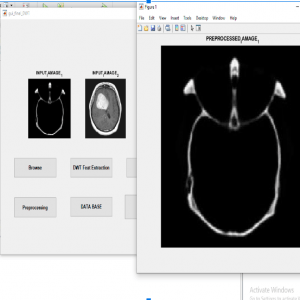

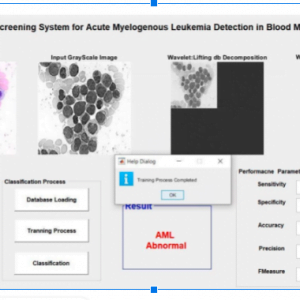

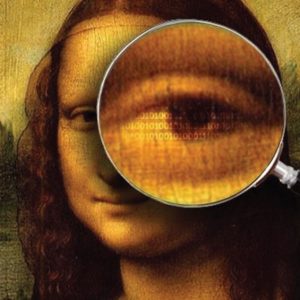

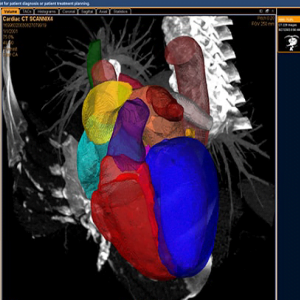

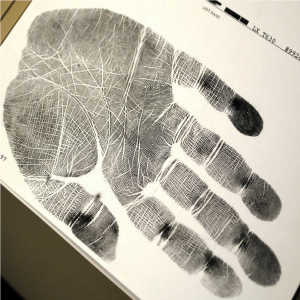

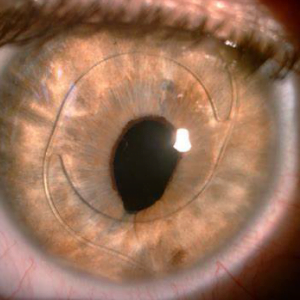

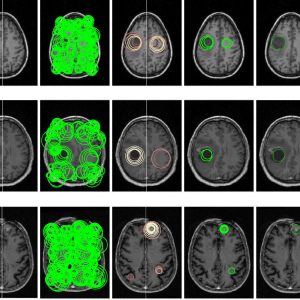

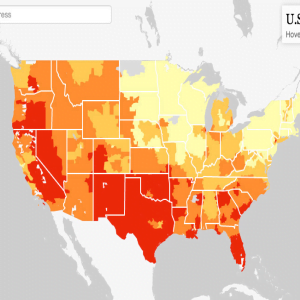

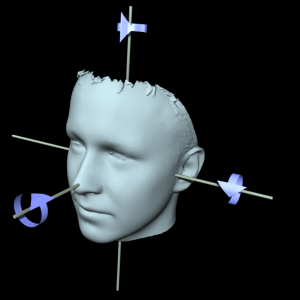

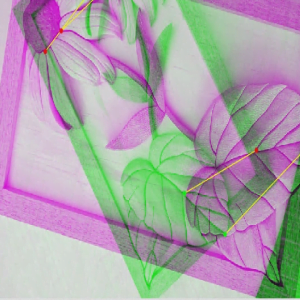

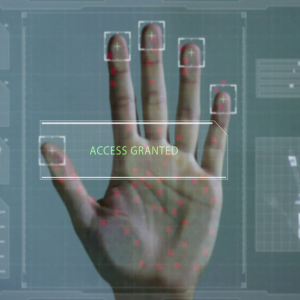

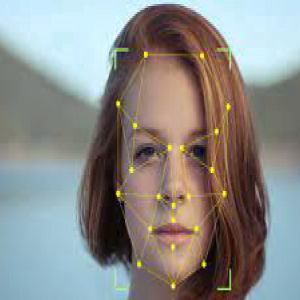

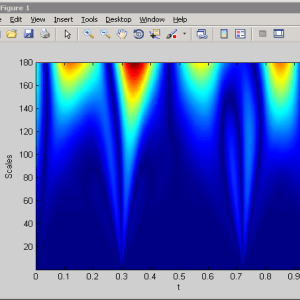

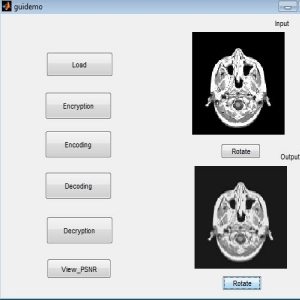

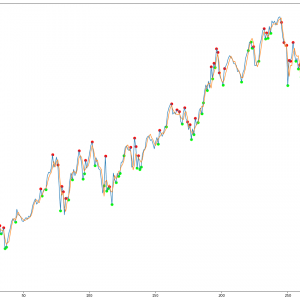

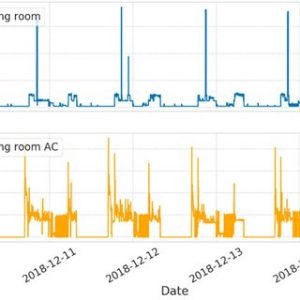

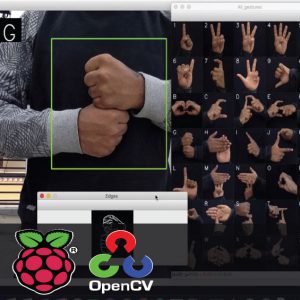

This paper presents the fusion of three biometric traits, namely iris, face, and fingerprint, at the matching-score level using a weighted-sum-of-scores technique. Features are extracted from the pre-processed iris, face, and fingerprint images, and the features of a query image are compared with those of a database image to obtain matching scores. The individual scores generated by matching are passed to the fusion module, which consists of three major steps: pre-processing, DWT segmentation, and image fusion. The fused result is then used, together with secret key analysis, to declare the person authenticated or not authenticated.
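The weighted-sum fusion step can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes each matcher emits a similarity score with a known range, uses min-max normalization (an assumed choice, since the text does not name a normalization method), and uses hypothetical weights and a hypothetical decision threshold of 0.5.

```python
# Hypothetical score-level fusion sketch: min-max normalization followed by a weighted sum.
def min_max_normalize(score, lo, hi):
    """Map a raw matcher score into [0, 1] using that matcher's known score range."""
    if hi == lo:
        raise ValueError("score range must be non-empty")
    return (score - lo) / (hi - lo)


def fuse_scores(scores, ranges, weights):
    """Weighted sum of normalized scores; the weights are assumed to sum to 1."""
    if not (len(scores) == len(ranges) == len(weights)):
        raise ValueError("scores, ranges and weights must have equal length")
    if abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(w * min_max_normalize(s, lo, hi)
               for s, (lo, hi), w in zip(scores, ranges, weights))


def decide(fused_score, threshold=0.5):
    """Declare the claimant authenticated if the fused similarity reaches the (assumed) threshold."""
    return "Authenticated" if fused_score >= threshold else "Not authenticated"


# Example: iris, face and fingerprint similarity scores with assumed ranges and weights.
scores = [0.82, 64.0, 35.0]
ranges = [(0.0, 1.0), (0.0, 100.0), (0.0, 50.0)]
weights = [0.4, 0.3, 0.3]
fused = fuse_scores(scores, ranges, weights)
print(round(fused, 3), decide(fused))
```

Normalizing first matters because the three matchers report scores on different scales; without it, the matcher with the largest numeric range would dominate the sum regardless of its weight.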


INTRODUCTION:

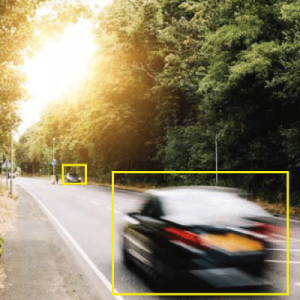

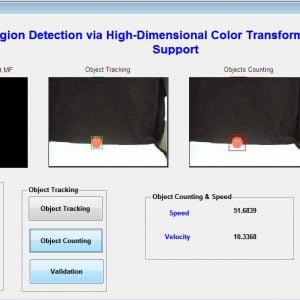

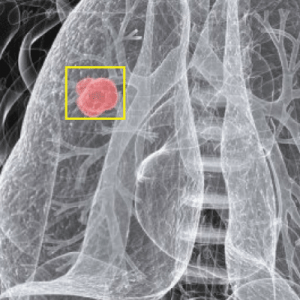

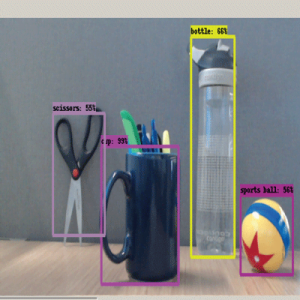

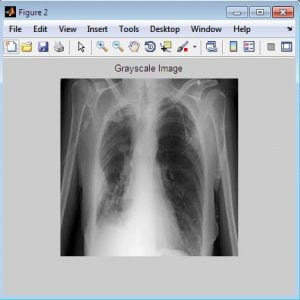

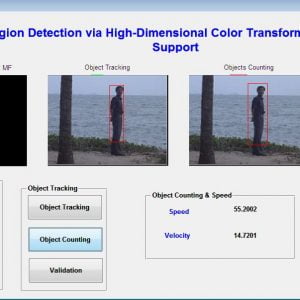

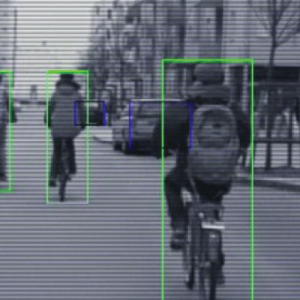

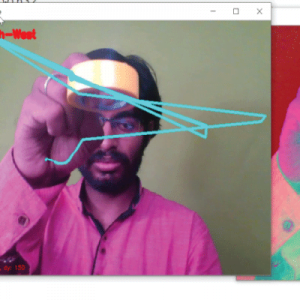

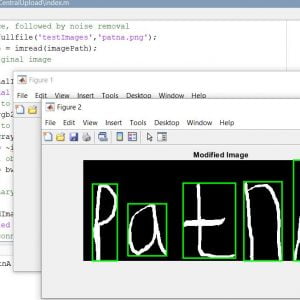

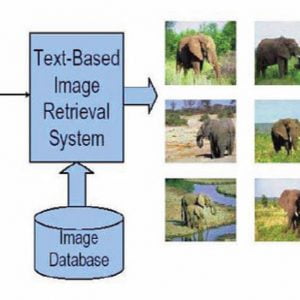

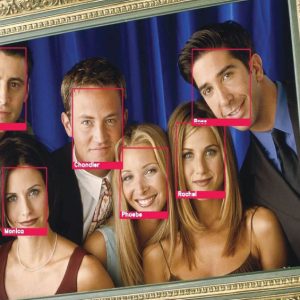

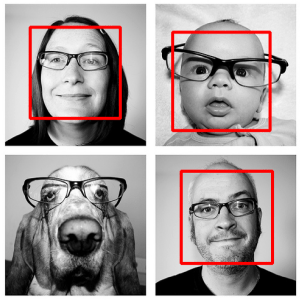

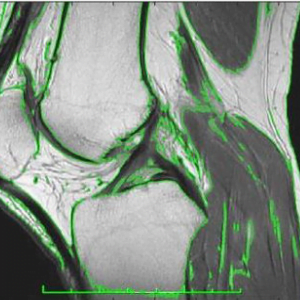

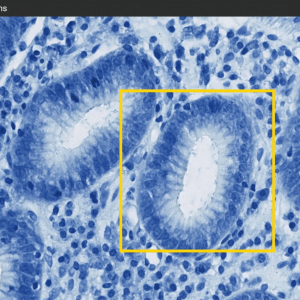

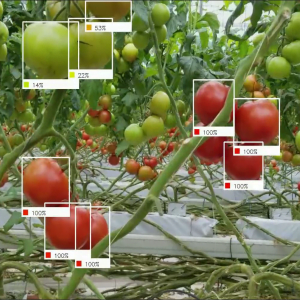

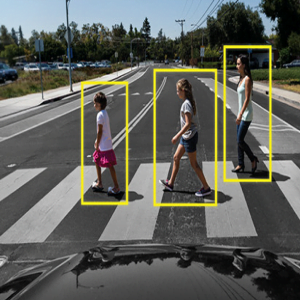

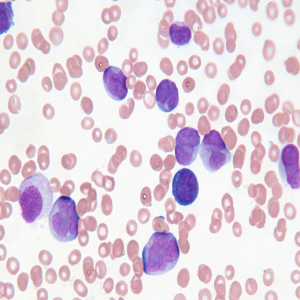

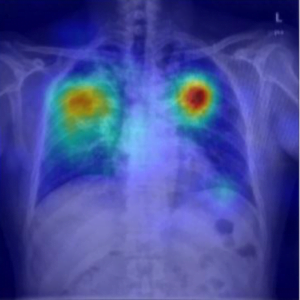

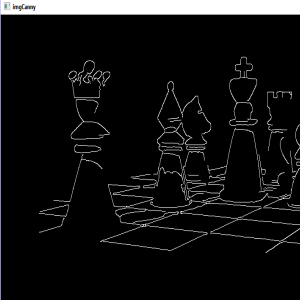

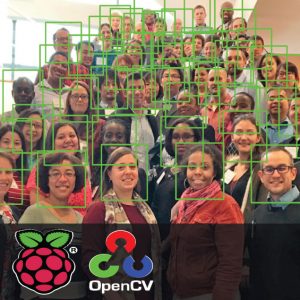

Object recognition is the identification of objects in an image. This process would typically start with image processing techniques such as noise removal, followed by (low-level) feature extraction to locate lines, regions, and possibly areas with certain textures. The clever part is interpreting collections of these shapes as single objects, e.g., cars on a road, boxes on a conveyor belt, or cancerous cells on a microscope slide. One reason this is an AI problem is that an object can look very different when viewed from different angles or under different lighting. Another problem is deciding which features belong to which object and which are background, shadows, and so on. The human visual system performs these tasks mostly unconsciously, but a computer requires skillful programming and a lot of processing power to approach human performance.
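Noise removal, the first step mentioned above, can be illustrated with a 3x3 median filter. The filter choice, the clamped border handling, and the function name `median_filter_3x3` are assumptions for illustration; the text does not say which denoising method the project uses.

```python
def median_filter_3x3(image):
    """Return a copy of a 2-D grayscale image (list of rows) after a 3x3 median filter.

    Border pixels are handled by clamping neighbor coordinates to the image edge.
    """
    rows, cols = len(image), len(image[0])
    out = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            window = []
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    rr = min(max(r + dr, 0), rows - 1)
                    cc = min(max(c + dc, 0), cols - 1)
                    window.append(image[rr][cc])
            window.sort()
            out[r][c] = window[4]  # middle value of the 9 sorted samples
    return out


# A flat patch corrupted by a single "salt" pixel.
noisy = [
    [10, 10, 10, 10],
    [10, 255, 10, 10],
    [10, 10, 10, 10],
]
clean = median_filter_3x3(noisy)
print(clean[1][1])
```

A median filter is a common choice for impulse ("salt-and-pepper") noise because an isolated outlier never becomes the middle value of its window, so it is removed without blurring edges as much as a mean filter would.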

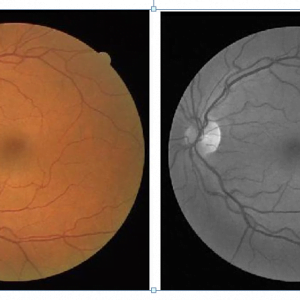

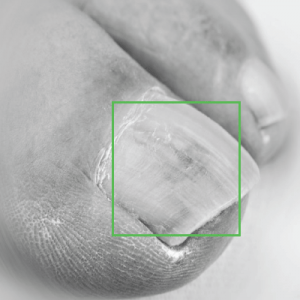

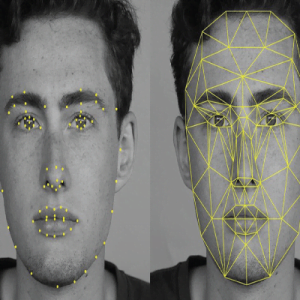

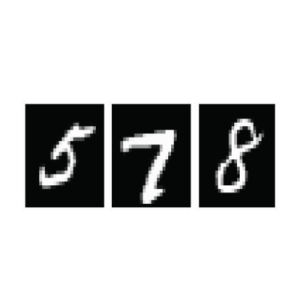

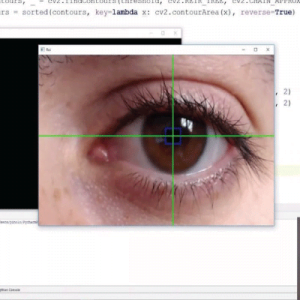

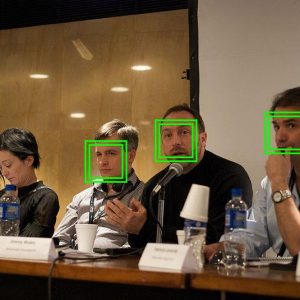

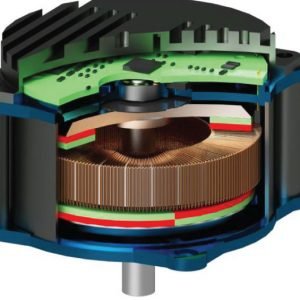

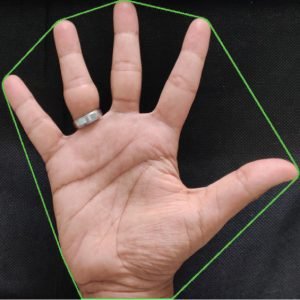

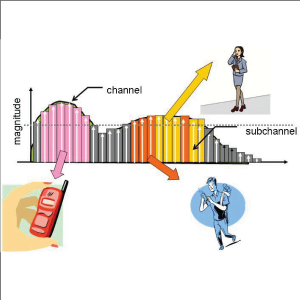

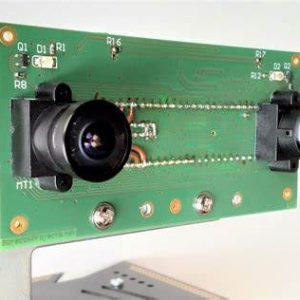

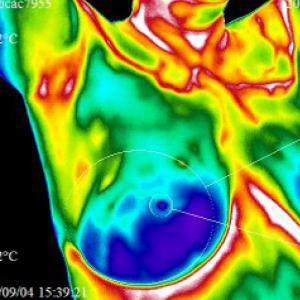

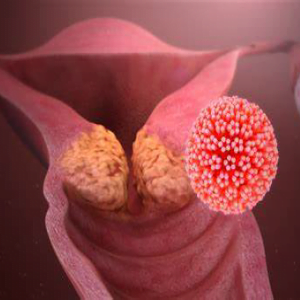

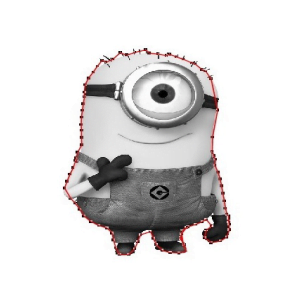

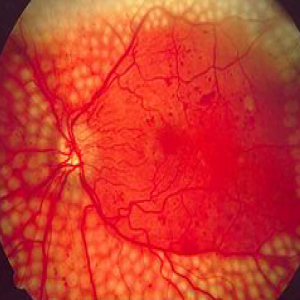

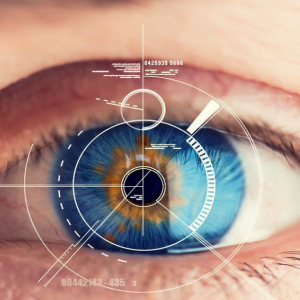

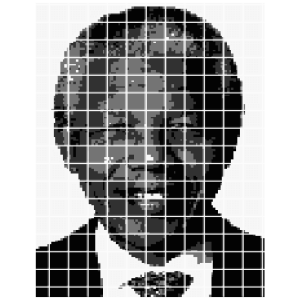

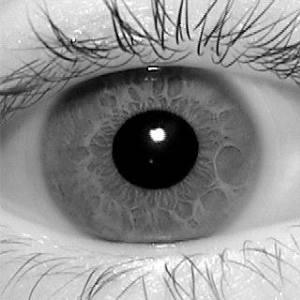

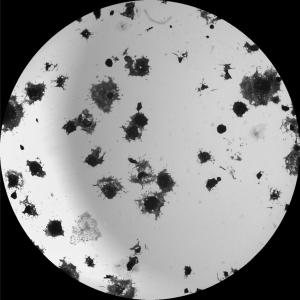

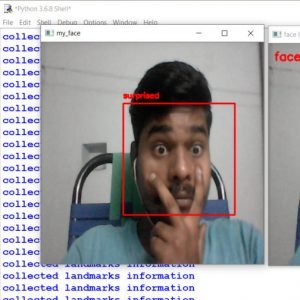

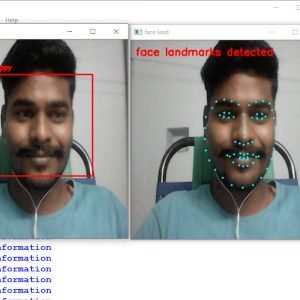

Image processing here means manipulating data in the form of an image, in this case for iris, fingerprint, and face recognition. Automated iris recognition is relatively young, having existed in patent form only since 1994. The human iris, an annular region located around the pupil and covered by the cornea, can provide independent and unique information about a person. A facial recognition system is a computer application capable of identifying or verifying a person from a digital image or a video frame. One way to do this is to compare selected facial features from the image with a facial database.

It is typically used in security systems and can be compared to other biometrics such as fingerprint or iris recognition systems. Recently, it has also become popular as a commercial identification and marketing tool.
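Comparing facial features from a query image with a database can be sketched as nearest-neighbor matching. This sketch assumes features have already been extracted into fixed-length numeric vectors; the Euclidean distance, the `max_distance` rejection threshold, and the dictionary layout of the database are hypothetical choices, not the project's documented method.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    if len(a) != len(b):
        raise ValueError("feature vectors must have the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def identify(query, database, max_distance):
    """Return the enrolled identity closest to `query`, or None if none is close enough.

    `database` maps identity labels to enrolled feature vectors (hypothetical format).
    """
    best_id, best_dist = None, float("inf")
    for identity, template in database.items():
        d = euclidean(query, template)
        if d < best_dist:
            best_id, best_dist = identity, d
    return best_id if best_dist <= max_distance else None


database = {
    "alice": [0.10, 0.80, 0.30],
    "bob": [0.90, 0.20, 0.50],
}
print(identify([0.12, 0.78, 0.33], database, max_distance=0.2))  # nearest template is alice
print(identify([0.50, 0.50, 0.90], database, max_distance=0.2))  # nothing within the threshold
```

The rejection threshold is what turns identification into verification-style behavior: without it, every query would be matched to someone, including impostors who are not enrolled.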

System Analysis

Existing Systems

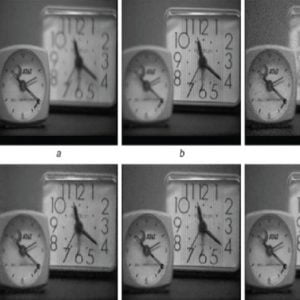

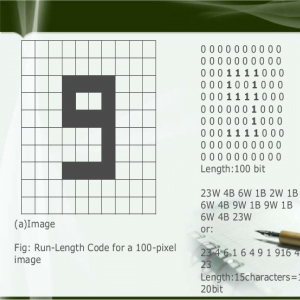

- Edge detection

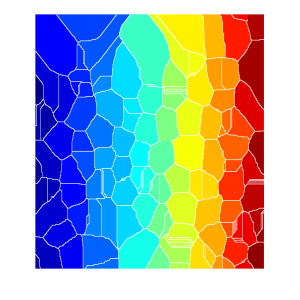

- Segmentation

- Feature vector
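The three stages listed for the existing system can be sketched in a few functions. Sobel edge detection, global-threshold segmentation, and the two-element feature vector (edge-pixel density and mean edge strength) are assumed, illustrative choices; the text does not specify which operators or features the existing system uses.

```python
import math

SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def sobel_magnitude(image):
    """Gradient magnitude of a 2-D grayscale image (list of rows); border pixels stay 0."""
    rows, cols = len(image), len(image[0])
    mag = [[0.0] * cols for _ in range(rows)]
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            gx = gy = 0
            for i in range(3):
                for j in range(3):
                    p = image[r + i - 1][c + j - 1]
                    gx += SOBEL_X[i][j] * p
                    gy += SOBEL_Y[i][j] * p
            mag[r][c] = math.hypot(gx, gy)
    return mag


def segment(mag, threshold):
    """Binary edge map: 1 where the gradient magnitude exceeds the threshold."""
    return [[1 if v > threshold else 0 for v in row] for row in mag]


def feature_vector(mask, mag):
    """Hypothetical 2-element feature vector: edge density and mean gradient on edge pixels."""
    edge_values = [v for mrow, grow in zip(mask, mag) for m, v in zip(mrow, grow) if m]
    total = len(mask) * len(mask[0])
    density = len(edge_values) / total
    mean_grad = sum(edge_values) / len(edge_values) if edge_values else 0.0
    return [density, mean_grad]


# A 5x5 image with a vertical step edge between columns 1 and 2.
img = [[0, 0, 100, 100, 100] for _ in range(5)]
mag = sobel_magnitude(img)
mask = segment(mag, threshold=50)
print(mask[2])
print(feature_vector(mask, mag))
```

All three stages here operate directly on pixel values, which is the spatial-domain limitation the disadvantages list points out.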

Disadvantages

- The existing system relies on fingerprinting alone. Fingerprinting is not very robust, because duplicate (fake) fingers can be made to deceive the system, and it is not very efficient.

- Only spatial-domain features are computed.


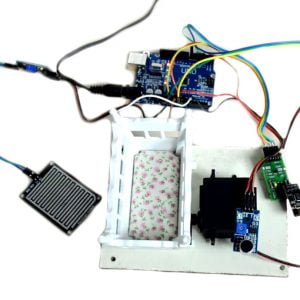

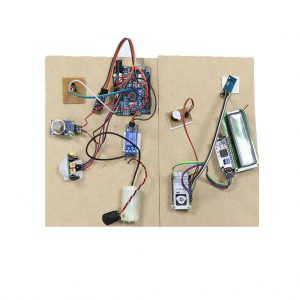

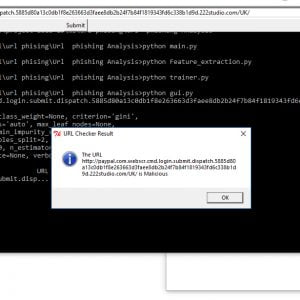

Proposed Systems

- A biometric system based on the combination of iris, palm print, and fingerprint features for person authentication.

- We use the Principal Component Analysis (PCA) algorithm to compute the covariance and variance of the extracted features.
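A minimal sketch of the PCA step, assuming each row of `data` is one feature vector: it computes the sample covariance matrix (whose diagonal holds the per-feature variances) and the first principal component by power iteration. Power iteration is used only to keep the example free of third-party dependencies; a library eigen-solver would normally be used instead. The function names are hypothetical.

```python
def mean_vector(data):
    """Per-feature mean of row-wise observations."""
    n = len(data)
    return [sum(col) / n for col in zip(*data)]


def covariance_matrix(data):
    """Sample covariance matrix (divides by n - 1) of row-wise observations."""
    n = len(data)
    if n < 2:
        raise ValueError("need at least two observations")
    mu = mean_vector(data)
    centred = [[x - m for x, m in zip(row, mu)] for row in data]
    d = len(mu)
    return [[sum(r[i] * r[j] for r in centred) / (n - 1) for j in range(d)]
            for i in range(d)]


def first_principal_component(cov, iterations=200):
    """Dominant eigenvector and eigenvalue of a covariance matrix via power iteration."""
    d = len(cov)
    v = [1.0 / (i + 1) for i in range(d)]  # arbitrary non-zero starting vector
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = sum(x * x for x in w) ** 0.5
        if norm == 0.0:
            break
        v = [x / norm for x in w]
    cv = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
    eigenvalue = sum(v[i] * cv[i] for i in range(d))  # Rayleigh quotient of unit-length v
    return v, eigenvalue


data = [[1, 2], [2, 3], [3, 5], [4, 4]]
cov = covariance_matrix(data)
variances = [cov[i][i] for i in range(len(cov))]
pc, eigval = first_principal_component(cov)
print(variances, pc, eigval)
```

For this data the covariance matrix is [[5/3, 4/3], [4/3, 5/3]], so both features have variance 5/3 and the dominant direction is the diagonal (1, 1)/sqrt(2) with eigenvalue 3.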

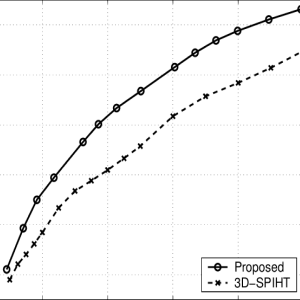

Advantages

- Each sequential Haar coefficient requires only two bytes of storage.

- Omitting the division in the difference (subtraction) step avoids decimal numbers while preserving the exact difference between two adjacent pixels.

- This system is more secure than a unimodal system because it combines more than one biometric trait.
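The integer sequential Haar step behind the first two advantages can be sketched as below, assuming it is the standard S-transform: the low band is the floored average of two adjacent pixels and the high band is their plain difference, with no division. For 8-bit pixels the difference lies in [-255, 255], so it fits in a signed 16-bit (two-byte) integer, and each pair is exactly recoverable. The 2-D helper applies one DWT decomposition level along rows and then columns; all function names are hypothetical.

```python
def s_transform_pair(a, b):
    """Integer Haar (S-transform) of two adjacent pixels: (floored average, plain difference)."""
    return (a + b) // 2, a - b


def inverse_s_transform_pair(low, high):
    """Exactly recover the original pixel pair from its S-transform coefficients."""
    a = low + (high + 1) // 2
    return a, a - high


def haar_rows(image):
    """Transform each row into [low coefficients..., high coefficients...]."""
    out = []
    for row in image:
        if len(row) % 2:
            raise ValueError("image dimensions must be even")
        pairs = [s_transform_pair(row[i], row[i + 1]) for i in range(0, len(row), 2)]
        out.append([p[0] for p in pairs] + [p[1] for p in pairs])
    return out


def transpose(m):
    return [list(col) for col in zip(*m)]


def haar_2d(image):
    """One decomposition level: rows, then columns. The top-left quadrant is the LL band."""
    return transpose(haar_rows(transpose(haar_rows(image))))


print(haar_2d([[10, 12], [14, 16]]))
```

For example, `haar_2d([[10, 12], [14, 16]])` returns `[[13, -2], [-4, 0]]`: 13 is the approximation (average) coefficient and the other three values are integer detail coefficients, so no floating-point storage is needed.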


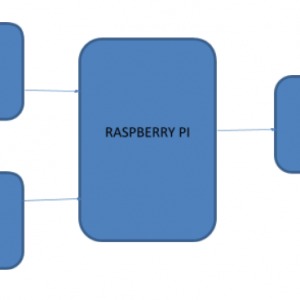

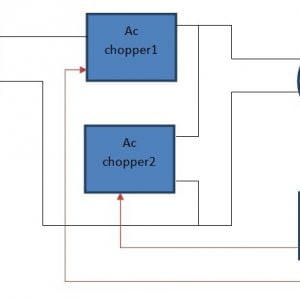

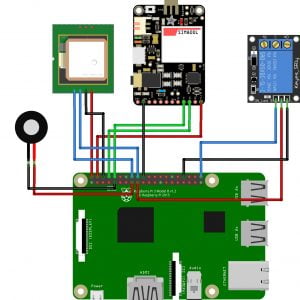

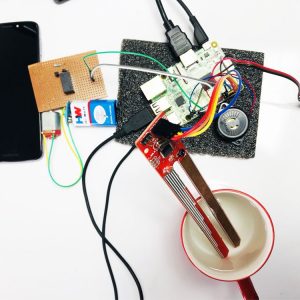

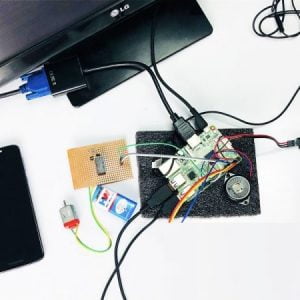

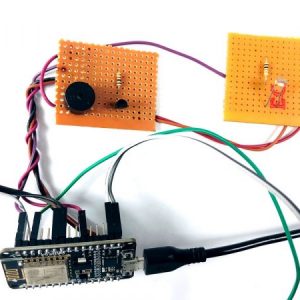

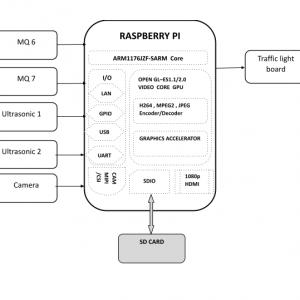

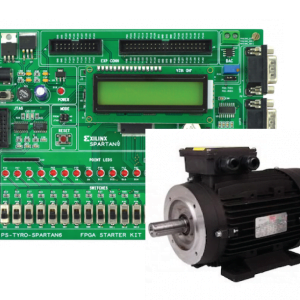

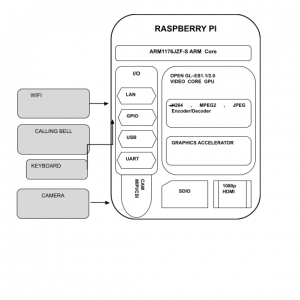

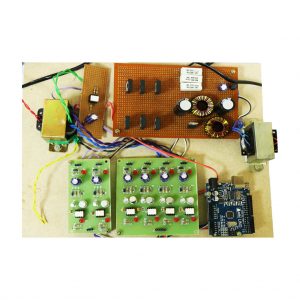

Block Diagram

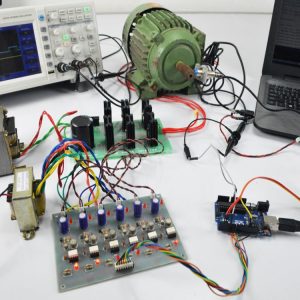

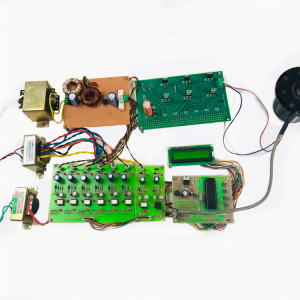

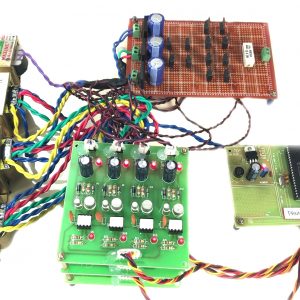

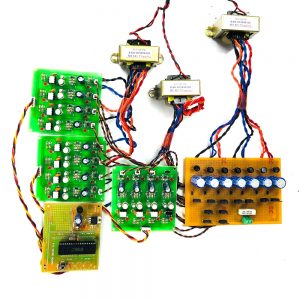

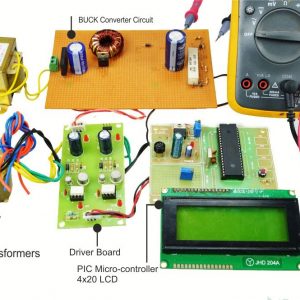

Requirement Specifications

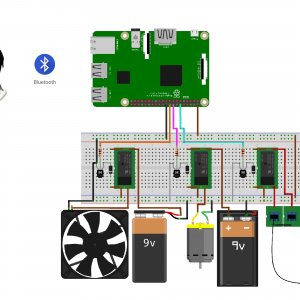

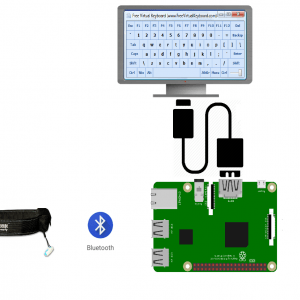

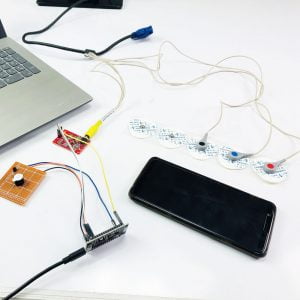

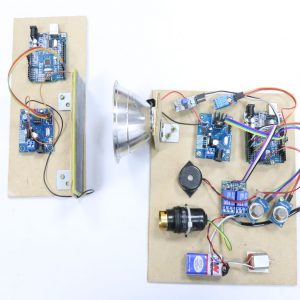

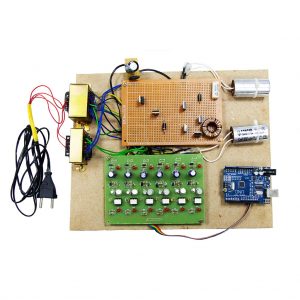

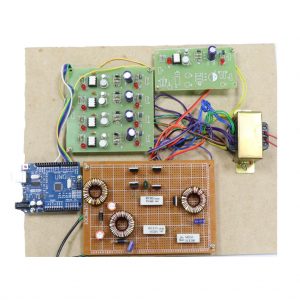

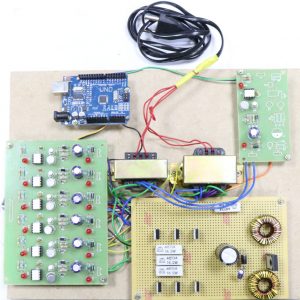

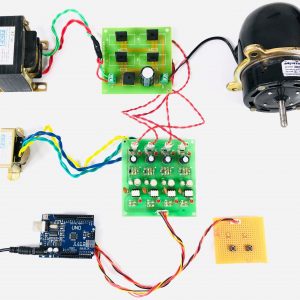

Hardware Requirements

- system

- 4 GB of RAM

- 500 GB of Hard disk

SOFTWARE REQUIREMENTS:

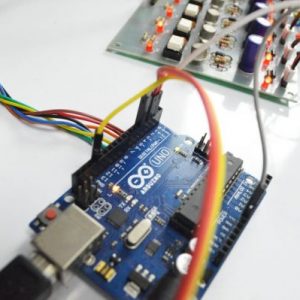

- MATLAB 2018b


