Advance Learning Keeps you in the Lead

Become a Master in your Dream Domain!

INR 15,000

@ INR 2999/-

Academic Collaboration with India's leading Universities

Awards & Achievements

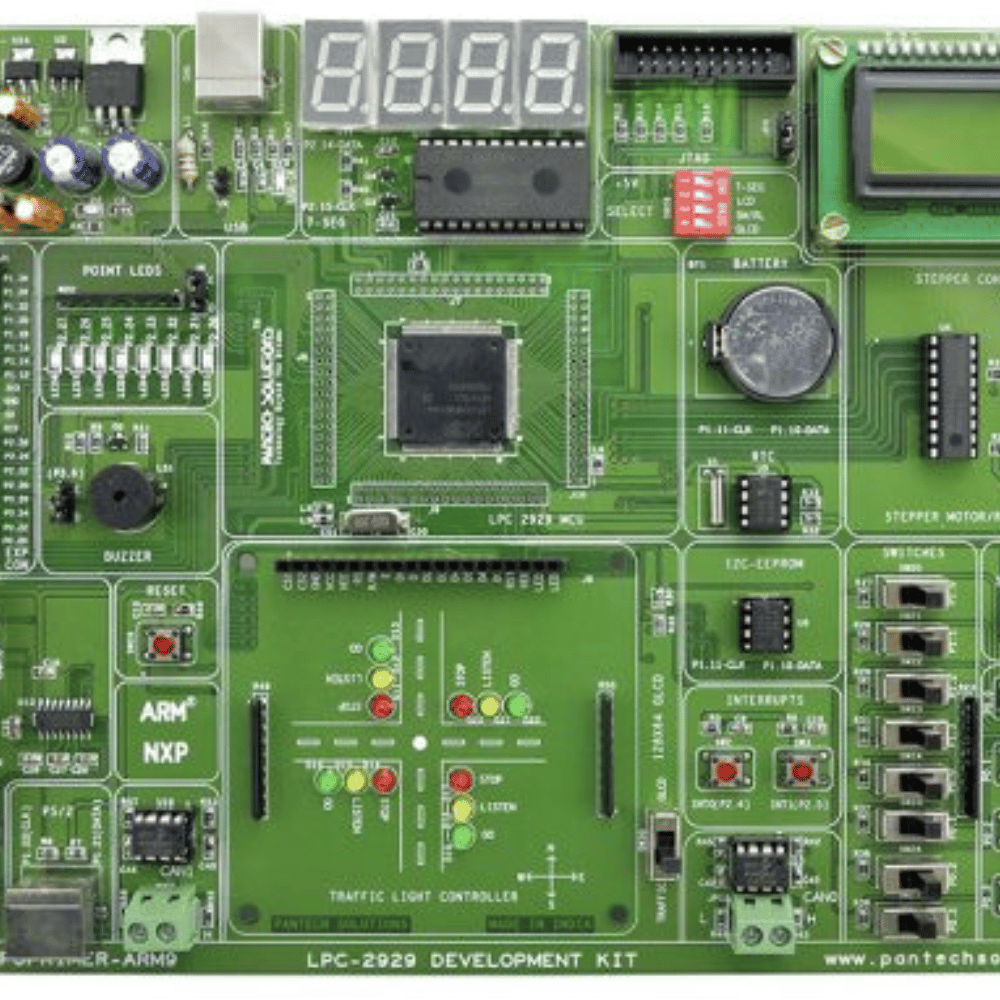

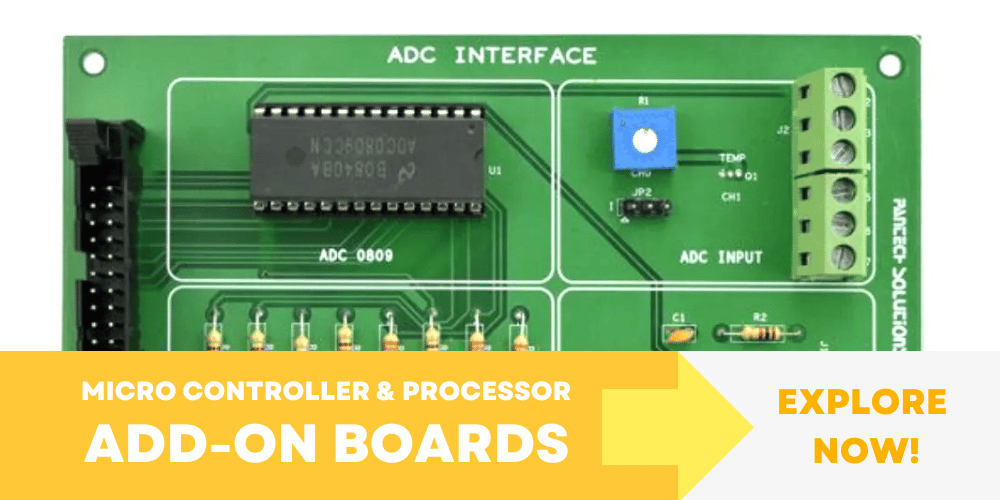

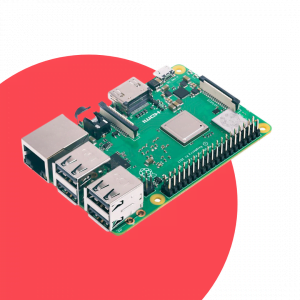

What Pantech offers?

Live Class

Live classes by industry experts in various aspects of technology & certification

Internship

Get internships and core-preparation tech training certified by standard organizations

Excellent

Based on 5881 reviews

Parashuram N Bannibagi

2023-11-20

Pantech eLearning conducts free certified masterclasses and webinars on YouTube, which give you ideas and help you learn more, and offers paid self-paced learning with an internship certificate.

Ravi Teja

2023-10-20

Amazing Python Internship Program offered by PantechELearning, with great teaching by Mr. Philip Bedit & Shankar Sir. Special thanks to Mr. Kumara Swamy. Thank you so much for this amazing experience; I thoroughly enjoyed the explanation. #PhillipBedit #PantechELearning #PythonHandsOnInternship

electrical eee

2023-09-30

The session was good and informative. It could have also covered present and future research areas.

Shambhu Nath Chaturvedi

2023-09-30

Nice

Shobana Velu

2023-09-23

I am Shobhan Palanivelu. I studied Computer Science Engineering.

This is a good place, and it improved my knowledge.

Reshma Syed

2023-09-09

Very nice

Mohan Babu

2023-08-18

This is a good place to gain knowledge, and the teaching mentors are friendly and genuine in their teaching.