Description

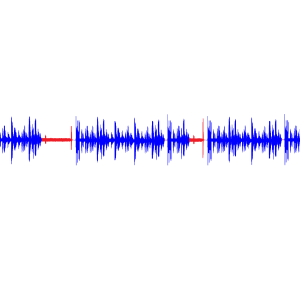

Speech Emotion Recognition Using Matlab

Objective

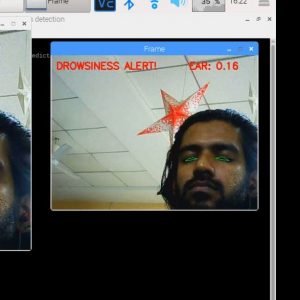

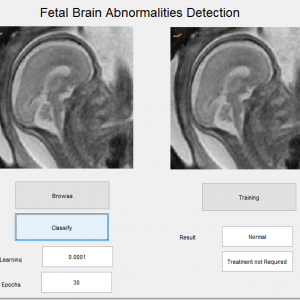

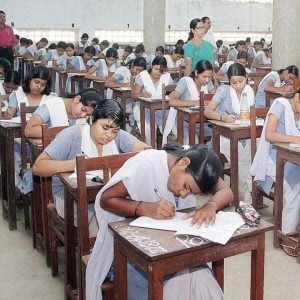

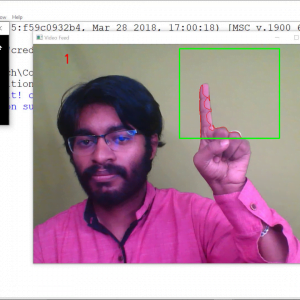

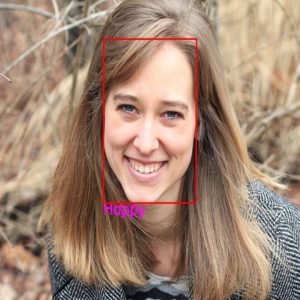

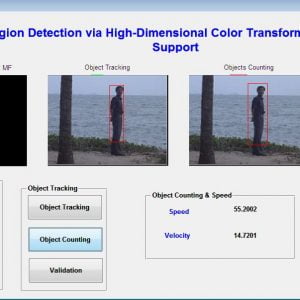

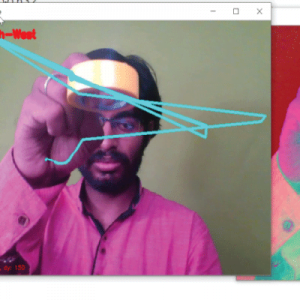

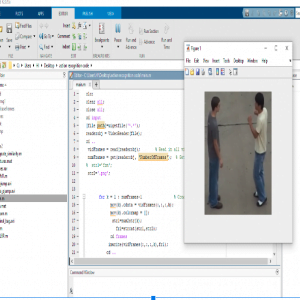

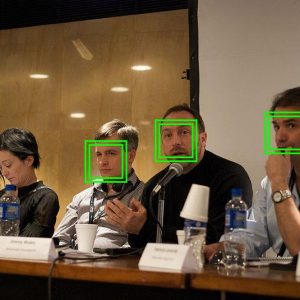

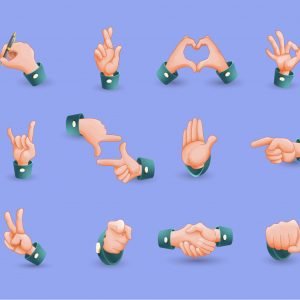

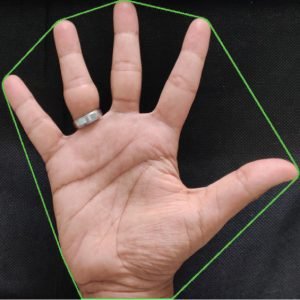

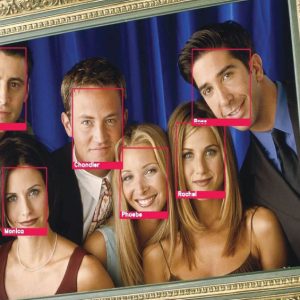

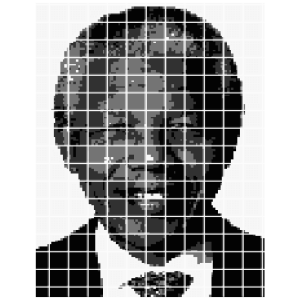

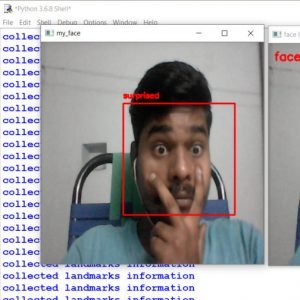

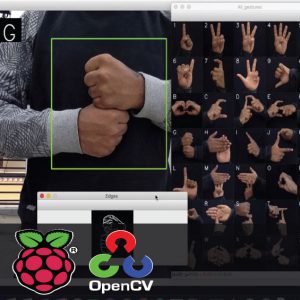

- The aim of the project is to detect faces in a video stream and recognize the emotions they express. Emotions can also be identified from stored files.

ABSTRACT

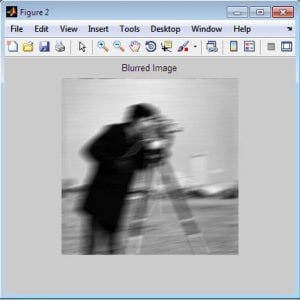

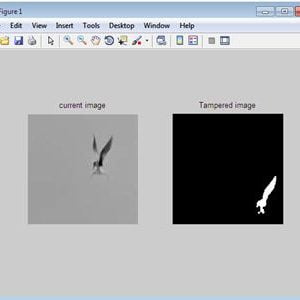

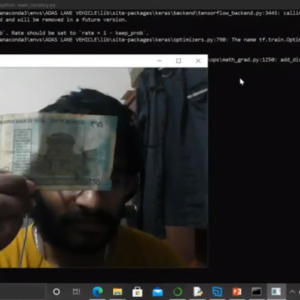

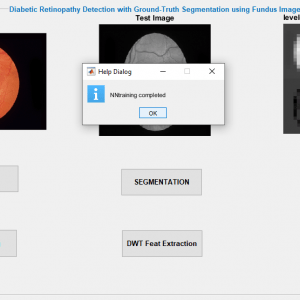

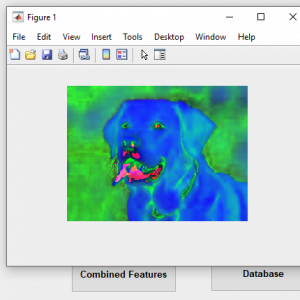

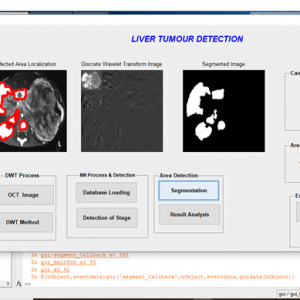

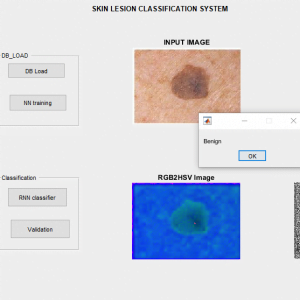

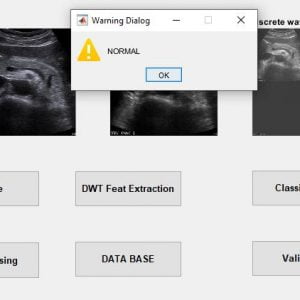

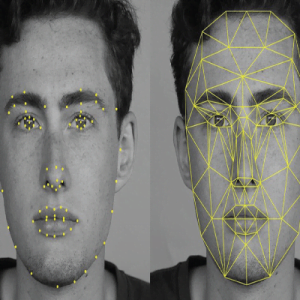

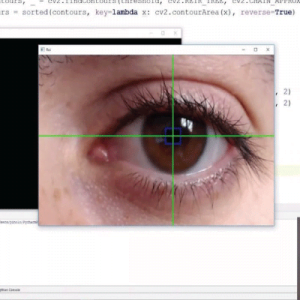

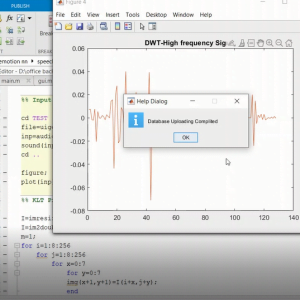

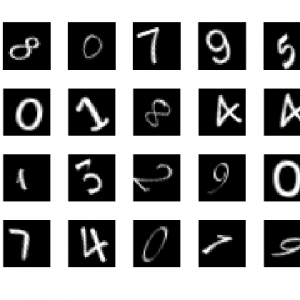

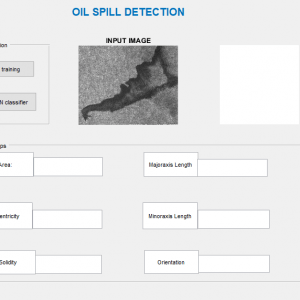

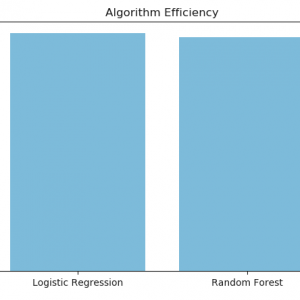

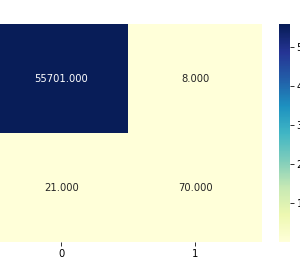

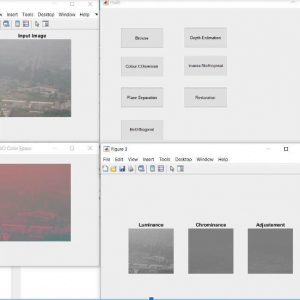

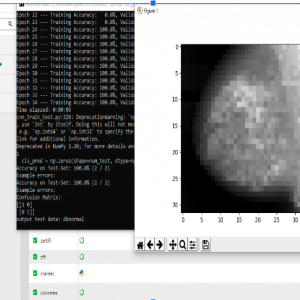

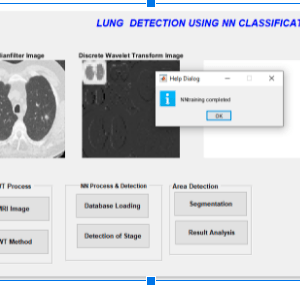

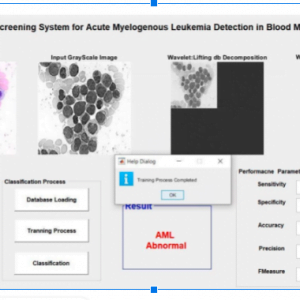

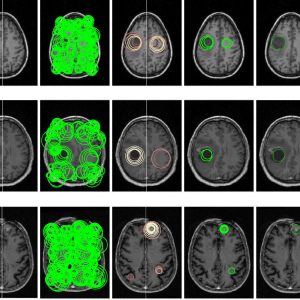

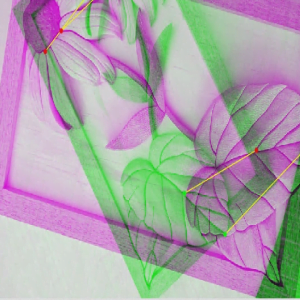

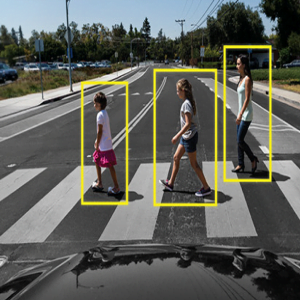

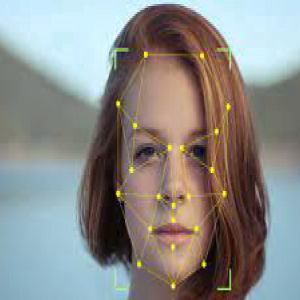

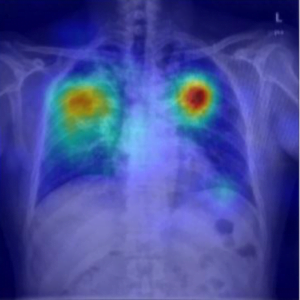

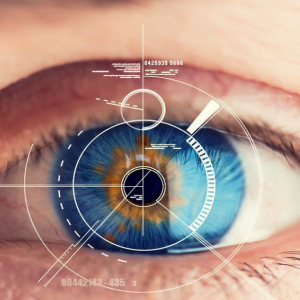

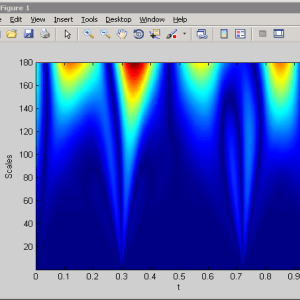

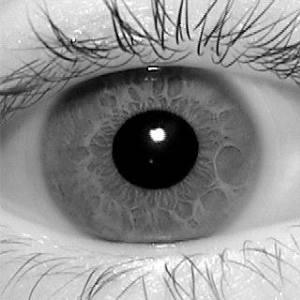

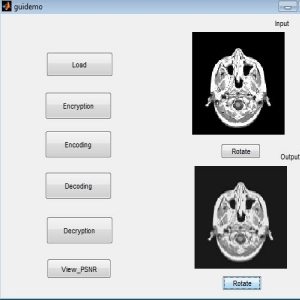

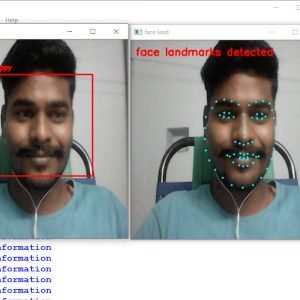

The project presents emotion recognition from speech signals based on feature analysis, trained by an SVM and classified by an NN classifier. Automatic facial emotion recognition plays an important role in human-computer interaction (HCI) systems for measuring people's emotions. In psychology, the study of emotion has been dominated by linking expressions to groups of basic emotions (i.e., anger, disgust, fear, happiness, sadness, and surprise). The recognition system involves face detection, feature extraction and selection, SVM training, and finally classification. The selected features help distinguish as many samples as possible accurately, and an NN classifier based on discriminant analysis is used to classify the six expressions. The simulated results are expected to show that filter-based feature extraction with this classifier gives better accuracy at lower algorithmic complexity than other facial expression recognition approaches.
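The final classification step can be illustrated with a small sketch. The abstract does not say whether "NN" means nearest-neighbor or neural network; the code below assumes nearest-neighbor. It is written in Python for brevity rather than in the project's MATLAB, and the two-dimensional feature vectors and the function names `euclidean` and `nearest_neighbor` are made up for illustration.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def nearest_neighbor(train, query):
    """Return the label of the training vector closest to `query`.

    `train` is a list of (feature_vector, label) pairs.
    """
    _, best_label = min(train, key=lambda pair: euclidean(pair[0], query))
    return best_label


# Hypothetical 2-D feature vectors, one per basic emotion (illustration only;
# real features would come from the feature-extraction stage).
TRAIN = [
    ([2.0, 0.0], "anger"),
    ([1.0, 2.0], "disgust"),
    ([-1.0, 2.0], "fear"),
    ([-2.0, 0.0], "happiness"),
    ([-1.0, -2.0], "sadness"),
    ([1.0, -2.0], "surprise"),
]

if __name__ == "__main__":
    print(nearest_neighbor(TRAIN, [-1.8, 0.3]))
```

A nearest-neighbor rule needs no training beyond storing labeled examples, which is one reason it is often paired with a separate feature-selection stage.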

INTRODUCTION

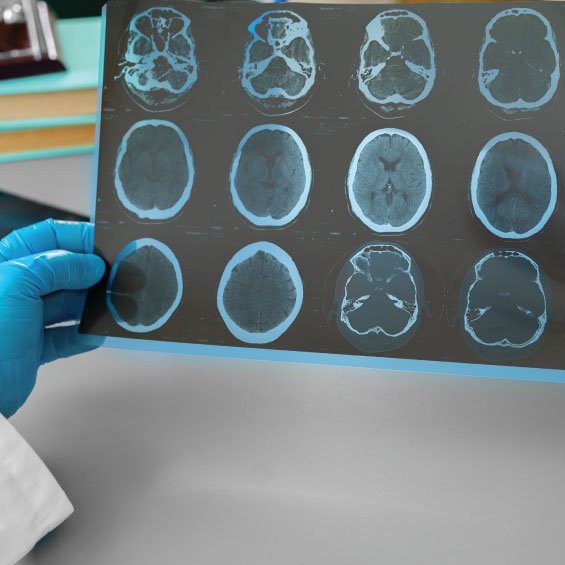

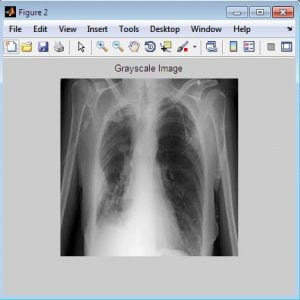

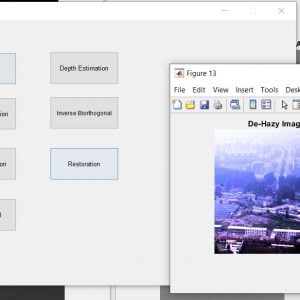

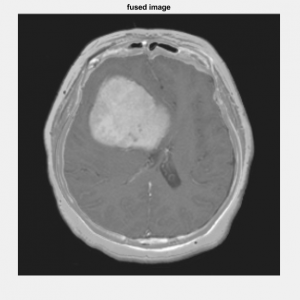

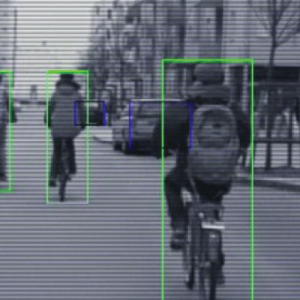

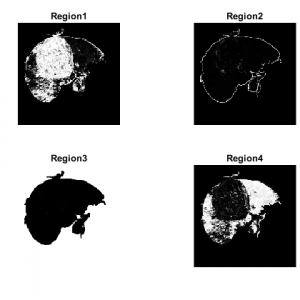

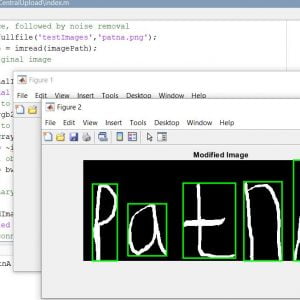

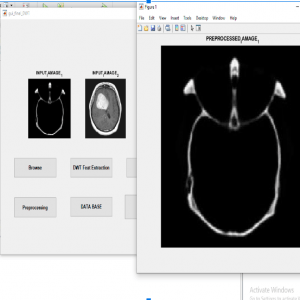

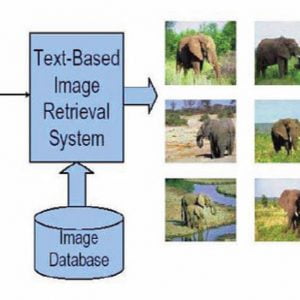

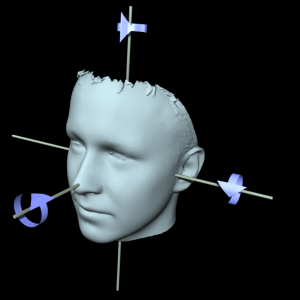

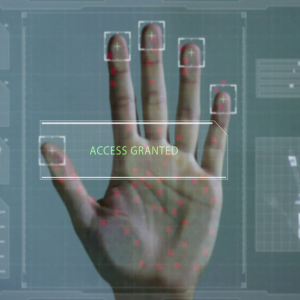

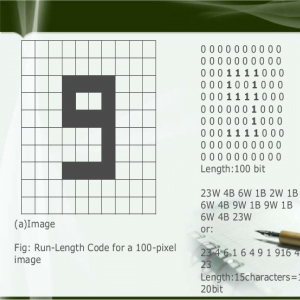

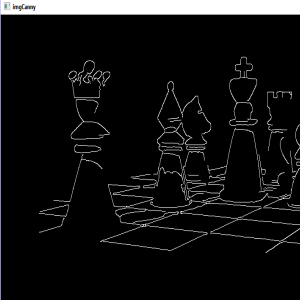

With the advent of modern technology, our ambitions have grown without bound. A great deal of research is currently under way in digital imaging and image processing, and progress in the field has been rapid and continues to accelerate. Image processing is the branch of signal processing in which both the input and the output signals are images, and its applications are very widespread.

One of the most important applications of image processing is facial expression recognition. Our emotions are revealed by the expressions on our faces, and facial expressions play an important role in interpersonal communication. A facial expression is a nonverbal gesture that appears on the face according to our emotions. Automatic recognition of facial expressions plays an important role in artificial intelligence and robotics, so demand for it is growing. Related applications include personal identification and access control, videophone and teleconferencing, forensics, human-computer interaction, automated surveillance, cosmetology, and so on. The objective of this project is to develop an automatic facial expression recognition system that takes human facial images containing some expression as input and recognizes and classifies that expression as output.

Existing Systems

- LBP

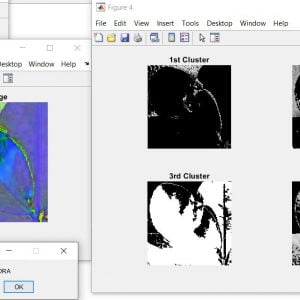

- Clustering

- CNN

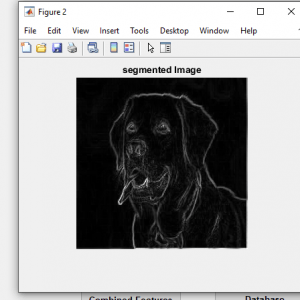

- Segmentation
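As an illustration of the first technique in the list, here is a minimal sketch of the basic 3x3 local binary pattern (LBP) operator: each pixel is compared with its eight neighbors, the comparison results are packed into an 8-bit code, and a histogram of the codes serves as a texture descriptor. The code is in Python for brevity rather than MATLAB. The function names and the clockwise bit ordering are choices made for this sketch and are not taken from the project.

```python
def lbp_code(patch):
    """8-bit LBP code for the centre pixel of a 3x3 patch (list of 3 rows)."""
    center = patch[1][1]
    # Neighbours visited clockwise, starting at the top-left pixel.
    offsets = [(0, 0), (0, 1), (0, 2), (1, 2), (2, 2), (2, 1), (2, 0), (1, 0)]
    code = 0
    for bit, (r, c) in enumerate(offsets):
        # A neighbour at least as bright as the centre sets its bit.
        if patch[r][c] >= center:
            code |= 1 << bit
    return code


def lbp_histogram(image):
    """256-bin histogram of LBP codes over the interior pixels of a
    grayscale image given as a list of equal-length rows."""
    hist = [0] * 256
    for r in range(1, len(image) - 1):
        for c in range(1, len(image[0]) - 1):
            patch = [row[c - 1:c + 2] for row in image[r - 1:r + 2]]
            hist[lbp_code(patch)] += 1
    return hist
```

The histogram, rather than the raw codes, is what would typically be passed to a classifier, since it is insensitive to small shifts of the face within the window.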

DRAWBACKS

- High process load

- Does not give the best results at every stage

- Poor discrimination and low distinction data

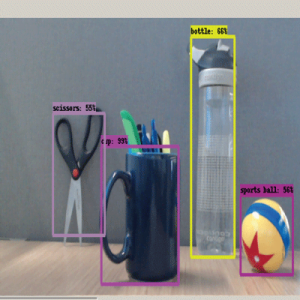

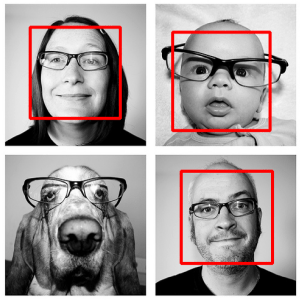

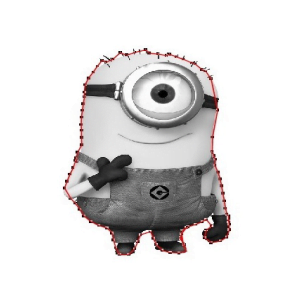

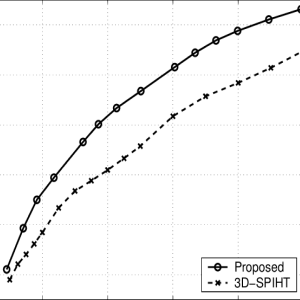

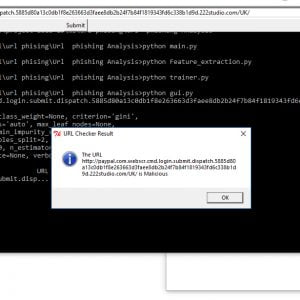

PROPOSED SYSTEM:

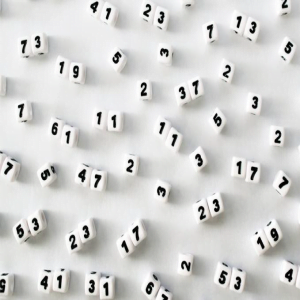

- SVM

- Haar cascade

- IN
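Haar cascade detectors evaluate simple rectangle features very quickly by using an integral image (summed-area table). The sketch below shows that idea only, not a full cascade: it builds the integral image and computes one horizontal two-rectangle feature. It is written in Python for brevity rather than MATLAB, and the function names are hypothetical.

```python
def integral_image(image):
    """Summed-area table with an extra zero row and column.

    ii[r][c] holds the sum of all pixels in image[0:r] x [0:c].
    """
    rows, cols = len(image), len(image[0])
    ii = [[0] * (cols + 1) for _ in range(rows + 1)]
    for r in range(rows):
        row_sum = 0
        for c in range(cols):
            row_sum += image[r][c]
            ii[r + 1][c + 1] = ii[r][c + 1] + row_sum
    return ii


def rect_sum(ii, top, left, height, width):
    """Sum of the pixels in a rectangle, using four table lookups."""
    bottom, right = top + height, left + width
    return ii[bottom][right] - ii[top][right] - ii[bottom][left] + ii[top][left]


def haar_two_rect_horizontal(ii, top, left, height, width):
    """Left half minus right half of a window; `width` must be even."""
    half = width // 2
    left_sum = rect_sum(ii, top, left, height, half)
    right_sum = rect_sum(ii, top, left + half, height, half)
    return left_sum - right_sum
```

Because every rectangle sum costs four lookups regardless of its size, a detector can evaluate many such features per window at low cost.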

Advantages

- Counting is attained accurately

- Less time consumption

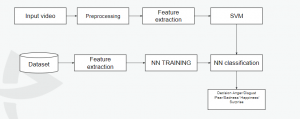

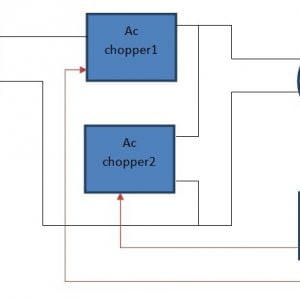

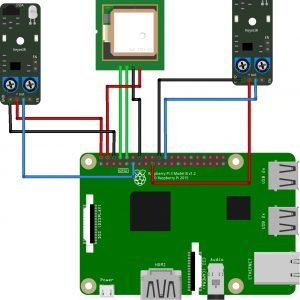

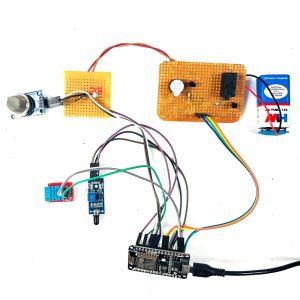

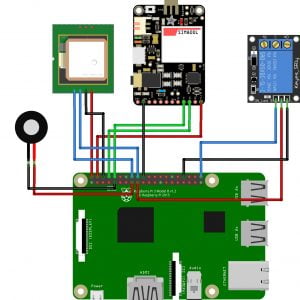

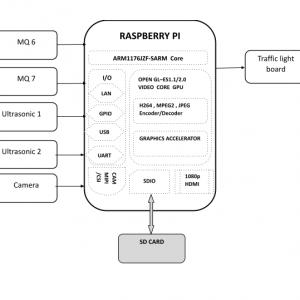

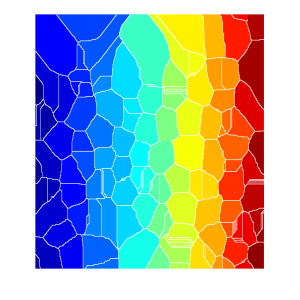

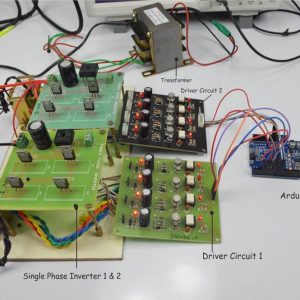

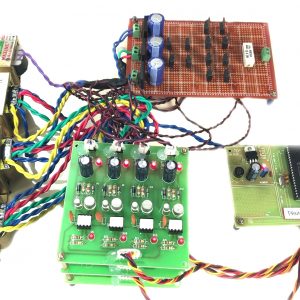

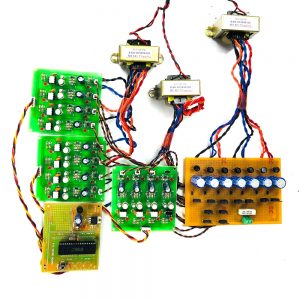

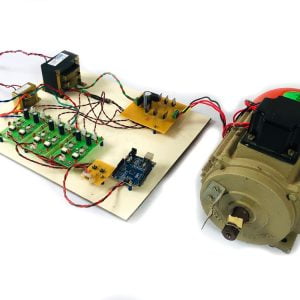

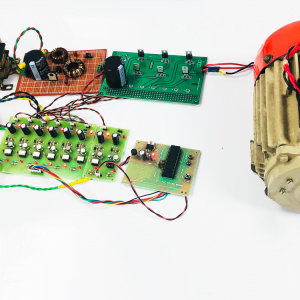

BLOCK DIAGRAM

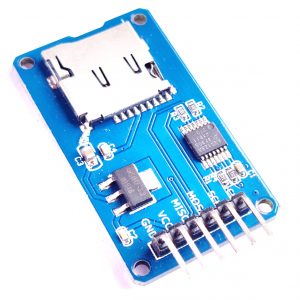

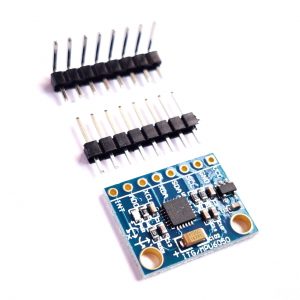

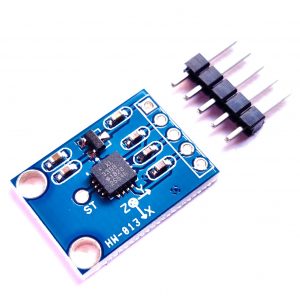

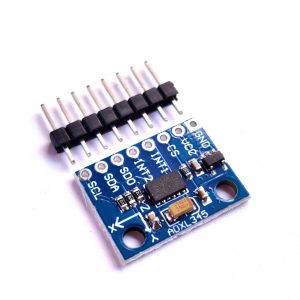

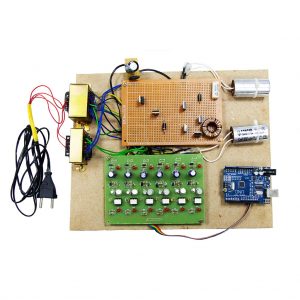

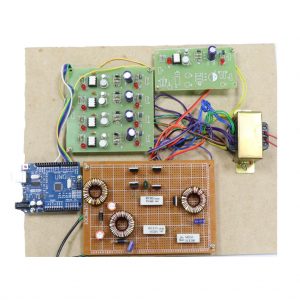

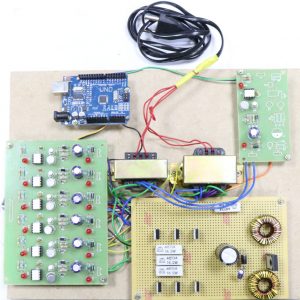

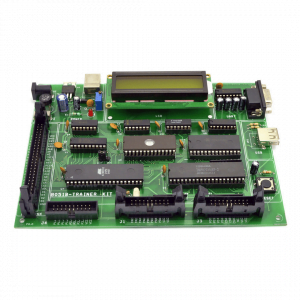

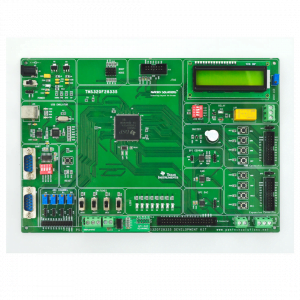

Hardware Requirements

- system

- 4 GB of RAM

- 500 GB of Hard disk

SOFTWARE REQUIREMENTS:

- MATLAB 2014 or later
