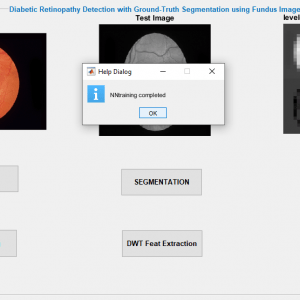

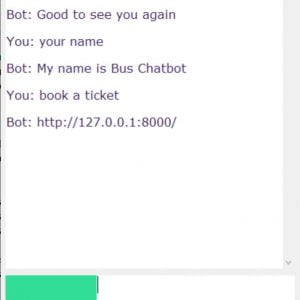

Description

Sign Language Recognition

OBJECTIVE

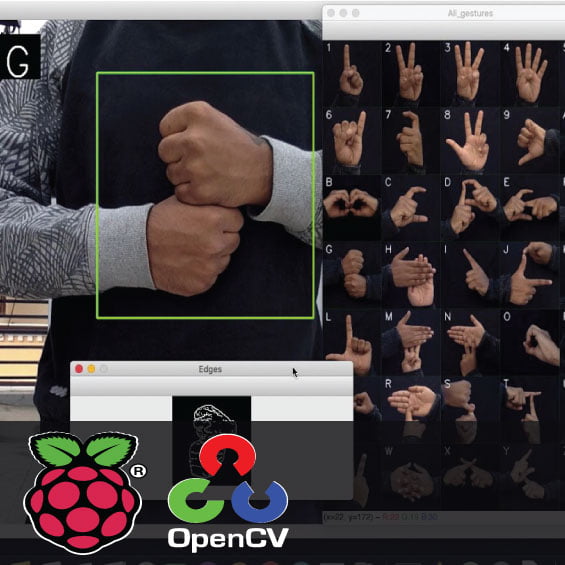

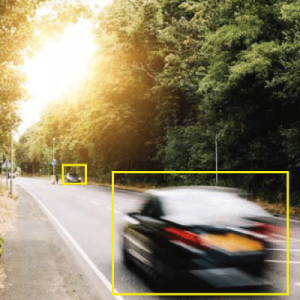

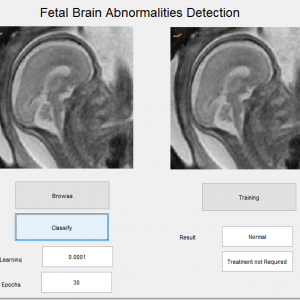

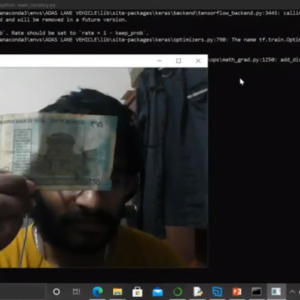

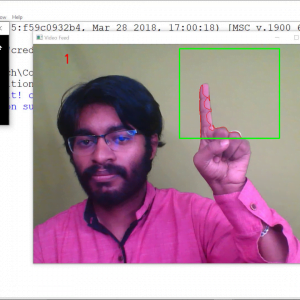

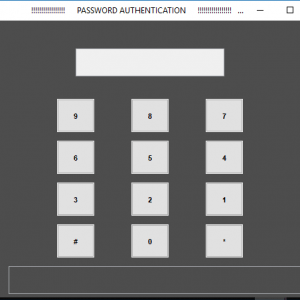

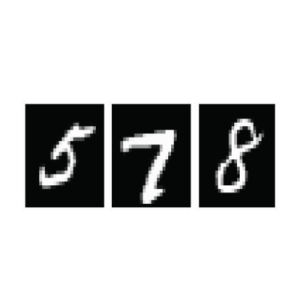

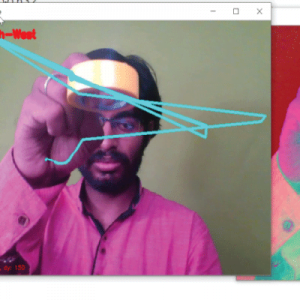

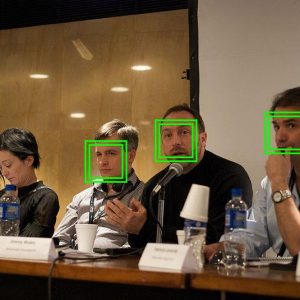

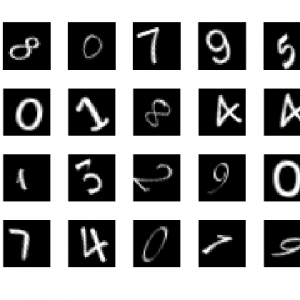

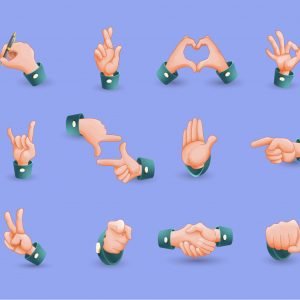

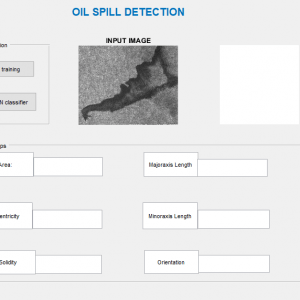

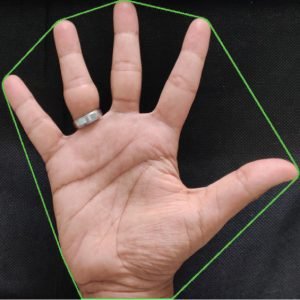

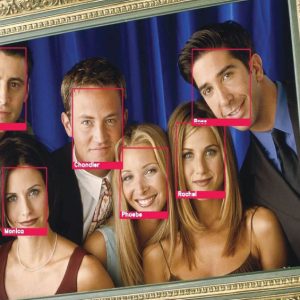

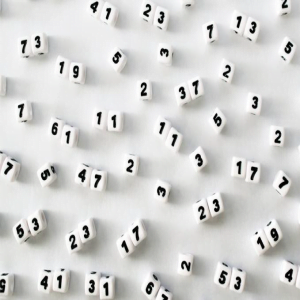

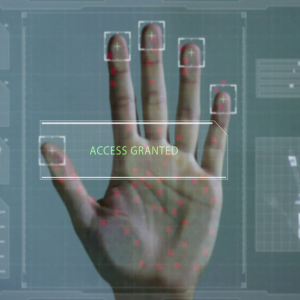

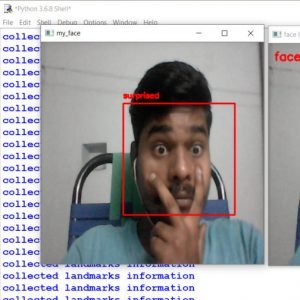

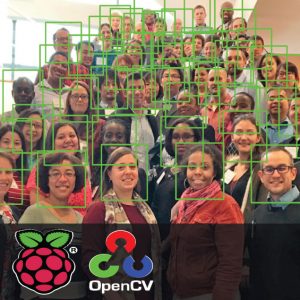

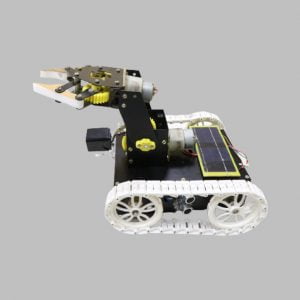

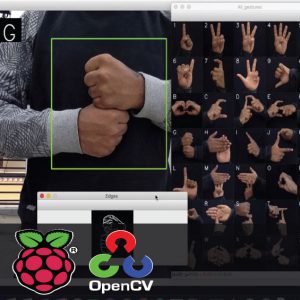

Sign Language Recognition using Raspberry Pi and OpenCV is a gesture-based speaking system intended for people who are deaf or speech-impaired. It uses a Raspberry Pi as its core to recognize signs and deliver voice output. The user signs the numbers 1 to 10, and the voice output lets people who do not know sign language identify each sign easily.

ABSTRACT

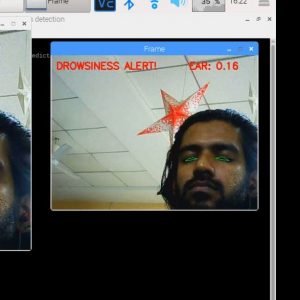

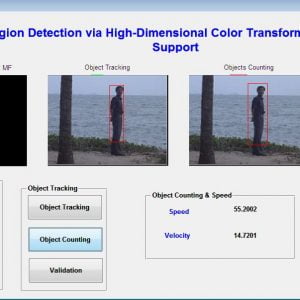

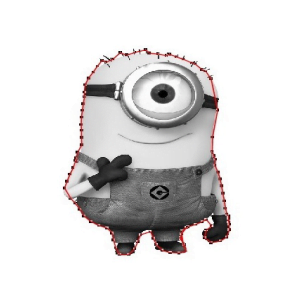

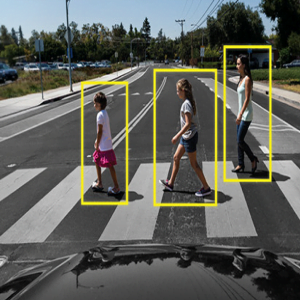

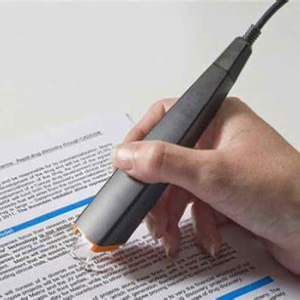

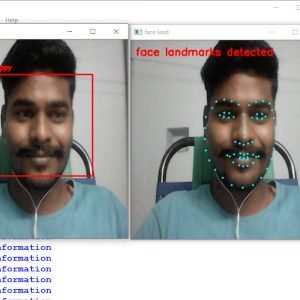

A gesture recognition system is developed for deaf and speech-impaired people, who cannot hear or speak as others do. The idea behind this system is to understand sign language so that they can communicate with the outside world without an interpreter. This project proposes a camera-based assistive gesture-reading framework for that purpose. The system detects 10 signs, and the function of each sign can be easily configured to trigger a particular process (for example, issuing a voice command, asking for something the user needs, or typing a letter). The hand sign is captured by a camera and detected using OpenCV, and the recognized gesture is then converted to text and audio.

INTRODUCTION

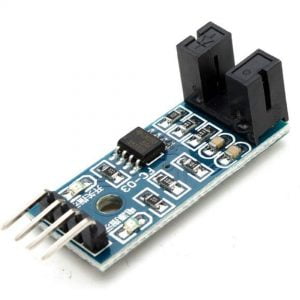

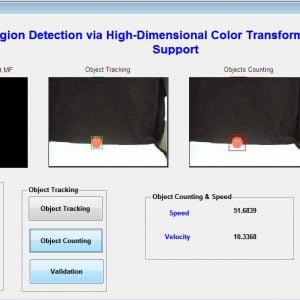

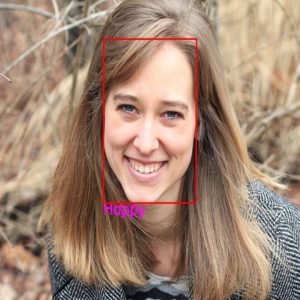

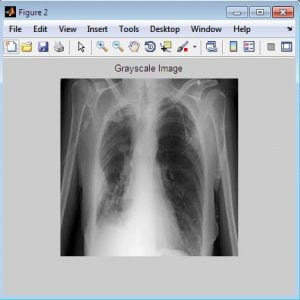

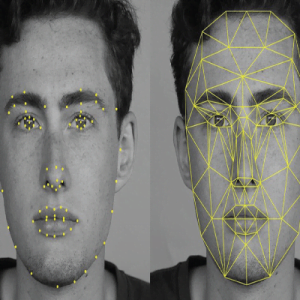

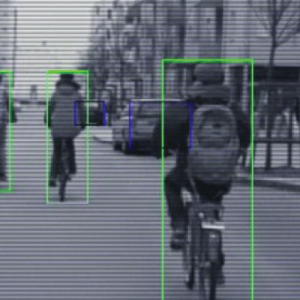

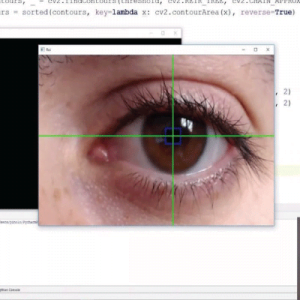

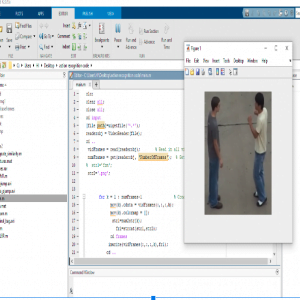

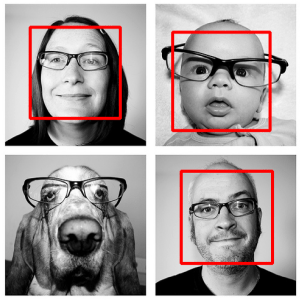

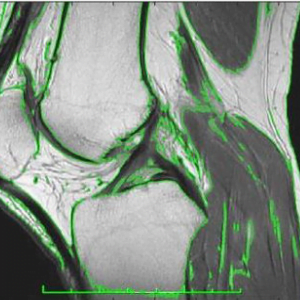

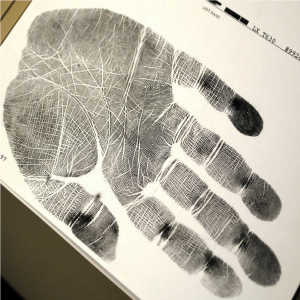

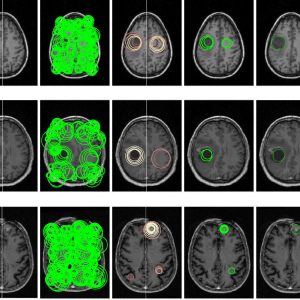

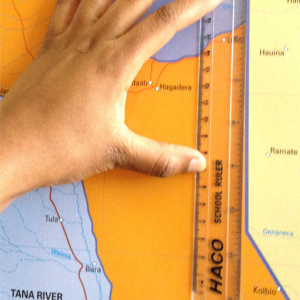

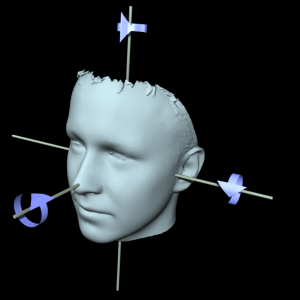

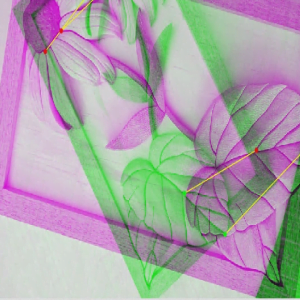

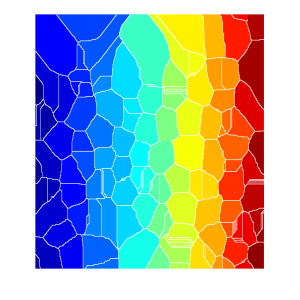

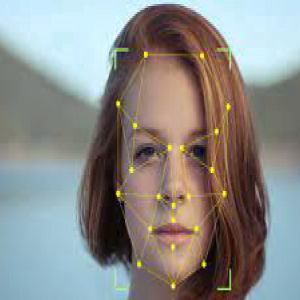

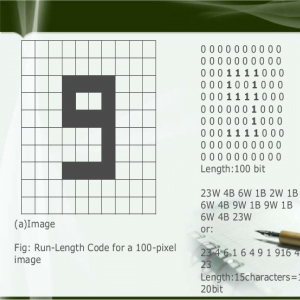

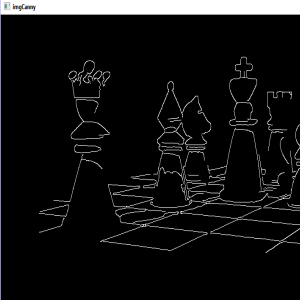

Hand gesture recognition is a widely used technology for assisting people who are deaf or speech-impaired. The human hand remains a popular means of conveying information and messages in situations where speech cannot be used. Gesture recognition is a type of perceptual computing user interface that allows computers to capture and interpret human gestures as commands; in other words, it is the ability of a computer to understand gestures and execute commands based on them. In the proposed approach, we first look at related work in this field.

The general purpose of the hand gesture recognition system is to detect and monitor features of specified objects using image processing algorithms running on a Raspberry Pi with a camera module. The feature extraction algorithm is written in Python with the OpenCV library and runs on a Raspberry Pi with an external camera attached. The system works well even in poor illumination. The hand gesture algorithm embedded in the Raspberry Pi detects and monitors hand gestures using image thresholding. Image thresholding is an image processing technique, specifically a form of image segmentation, that isolates objects by converting grayscale images into binary images. It is most effective on images with high contrast.

EXISTING SYSTEM

- In the existing system, KNN and SVM algorithms are used.

- It uses only a simulation platform to process the image for sign detection.

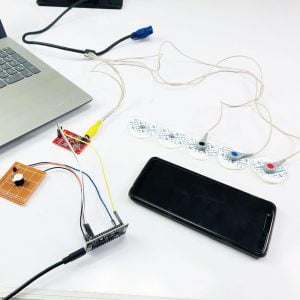

- Flex sensor-based sign recognition

- Leaf switch-based gesture glove

DISADVANTAGE

- The low range of analog output from the flex sensor

- It is difficult to find the optimal gradient

- Poor Edge detection.

PROPOSED SYSTEM

This project proposes a camera-based assistive system that detects signs and performs functions such as audio requests or text output using a Raspberry Pi. Schools for deaf and speech-impaired students normally use sign language for communication; likewise, this system recognizes 10 signs and performs a function for each.
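One way to picture the configurable sign-to-function mapping is a simple lookup table. The sketch below is hypothetical: the names `SIGN_ACTIONS` and `handle_sign` and the example messages are placeholders invented for illustration, and `speak` defaults to `print` where a real system would pass a text-to-speech routine.

```python
# Hypothetical table: one configurable message for each of the 10 signs.
SIGN_ACTIONS = {n: f"Sign {n} detected" for n in range(1, 11)}
SIGN_ACTIONS[1] = "I need water"       # placeholder request
SIGN_ACTIONS[2] = "Please call for help"  # placeholder request


def handle_sign(sign, speak=print, actions=SIGN_ACTIONS):
    """Look up the message configured for `sign` and pass it to `speak`.

    Returns the message, or None when the sign has no configured function.
    """
    message = actions.get(sign)
    if message is None:
        return None
    speak(message)
    return message


# Example: collect the output in a list instead of speaking it aloud.
spoken = []
handle_sign(1, speak=spoken.append)
handle_sign(42, speak=spoken.append)  # unknown sign: nothing happens
print(spoken)
```

Keeping the mapping in a dictionary means a sign can be reassigned to a different function by editing one entry, without touching the detection code.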

ADVANTAGES

- Intended mainly for deaf and speech-impaired people: each sign they show triggers a function such as audio output or text output.

- Accurate feature extraction.

- Less algorithm complexity.

- Its processing time is low.

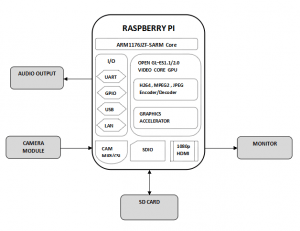

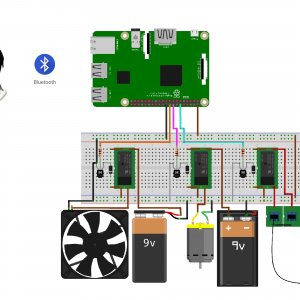

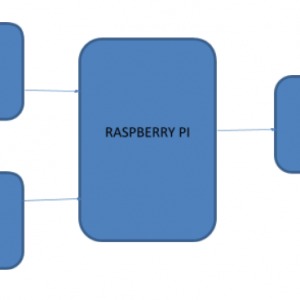

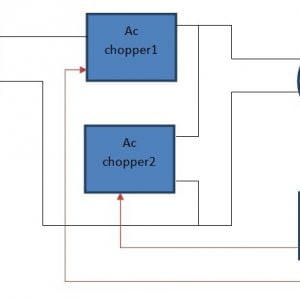

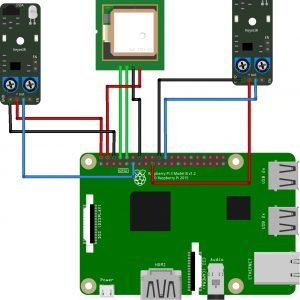

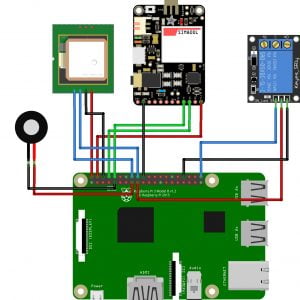

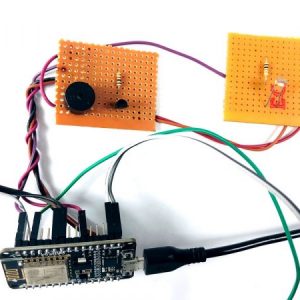

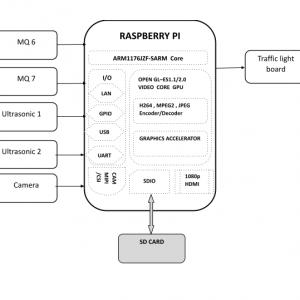

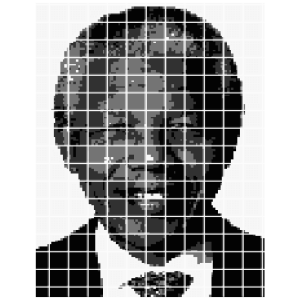

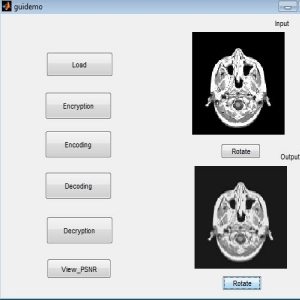

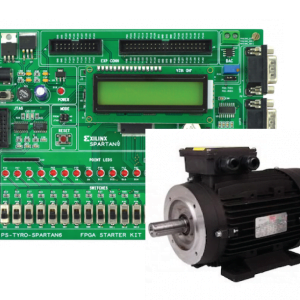

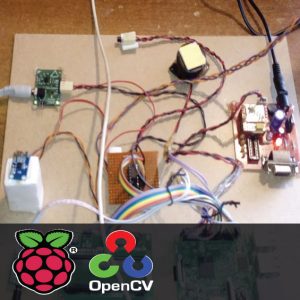

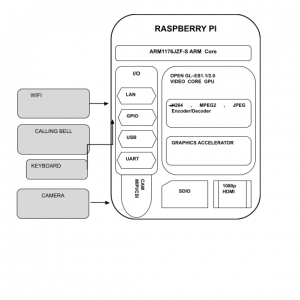

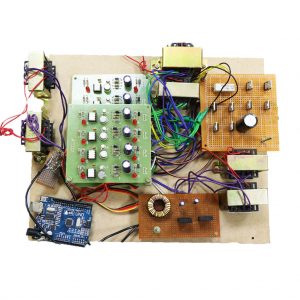

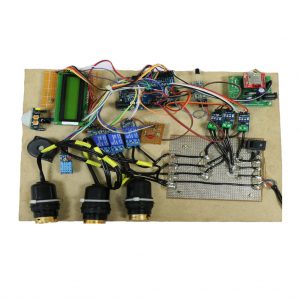

BLOCK DIAGRAM

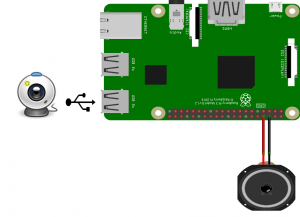

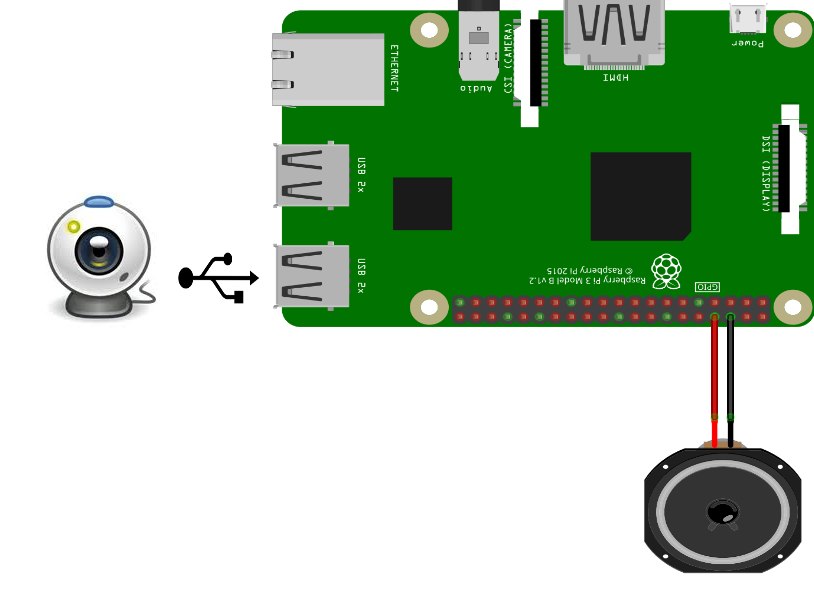

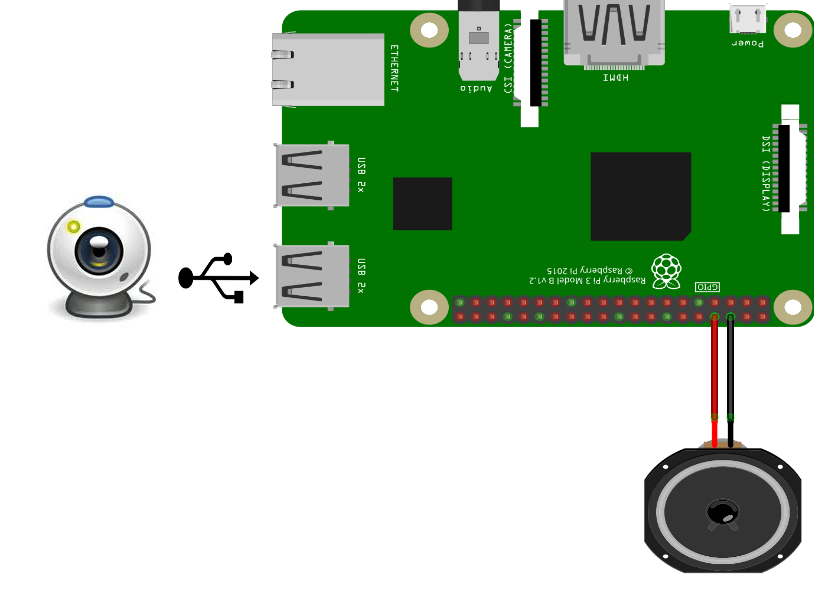

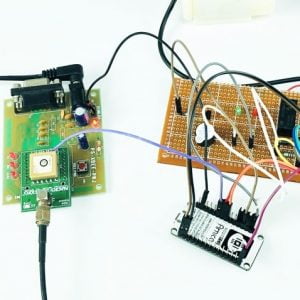

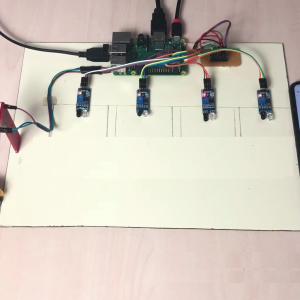

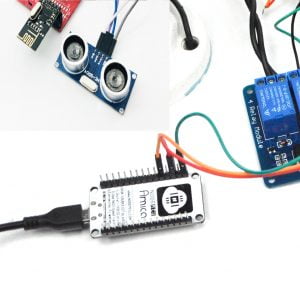

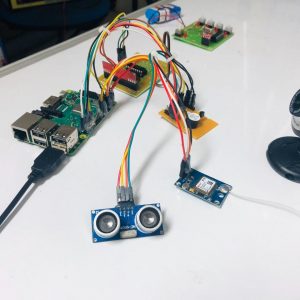

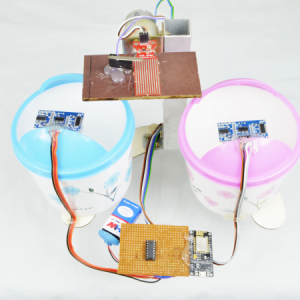

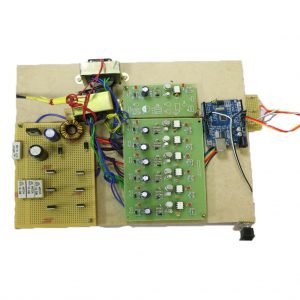

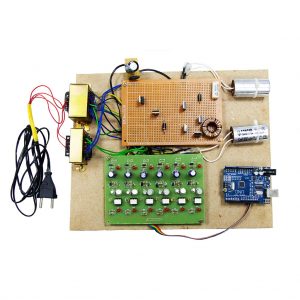

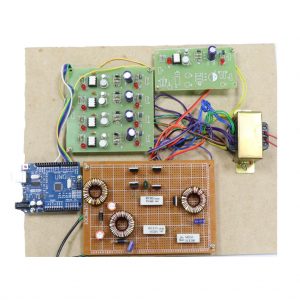

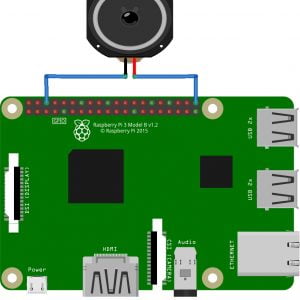

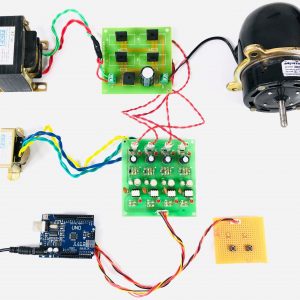

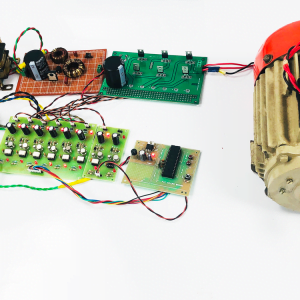

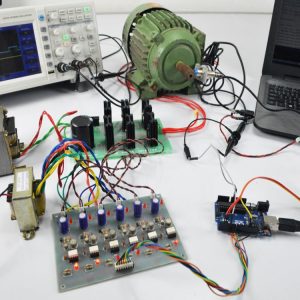

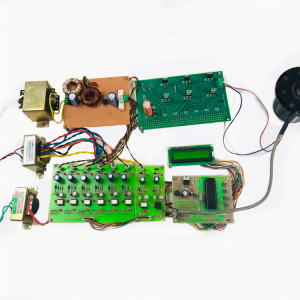

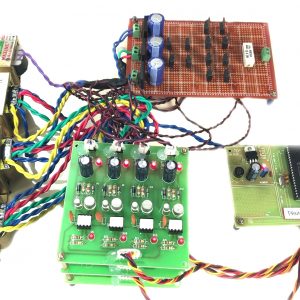

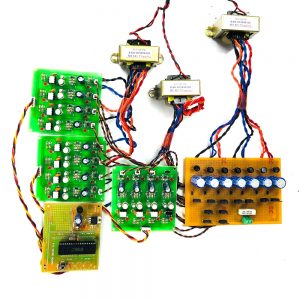

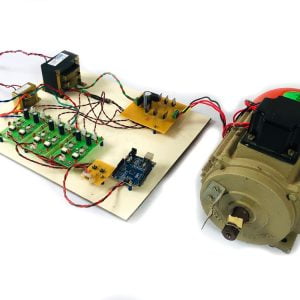

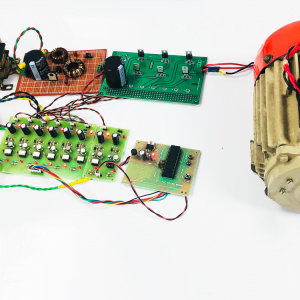

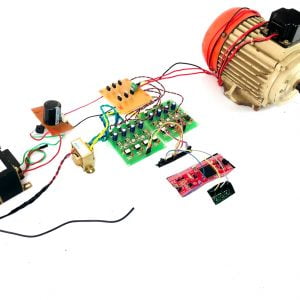

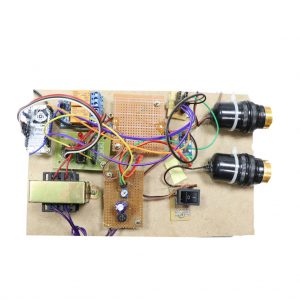

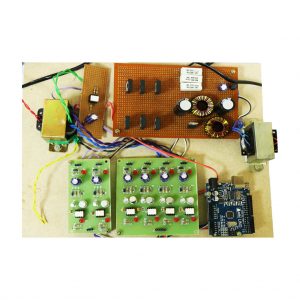

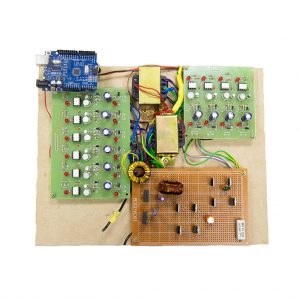

Circuit Diagram

BLOCK DIAGRAM DESCRIPTION

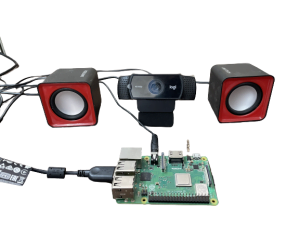

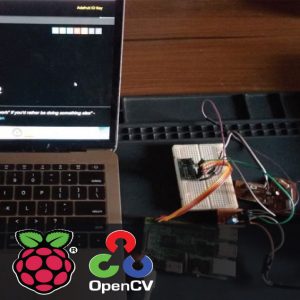

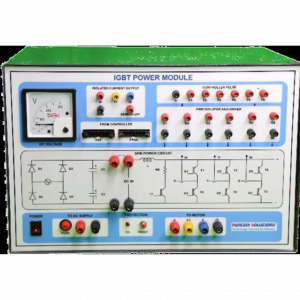

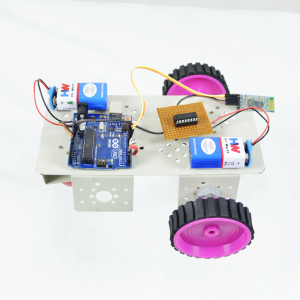

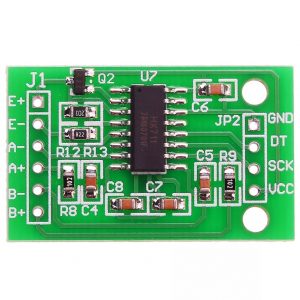

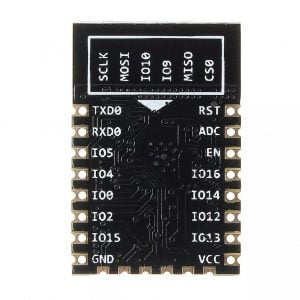

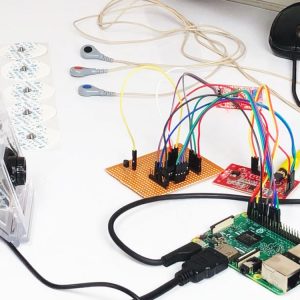

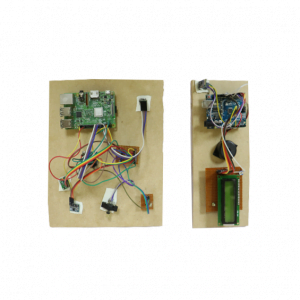

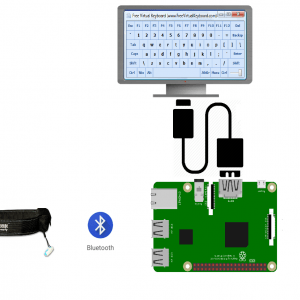

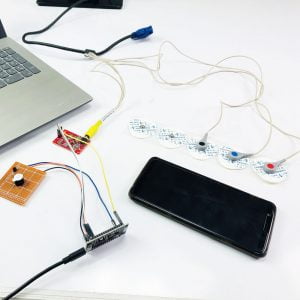

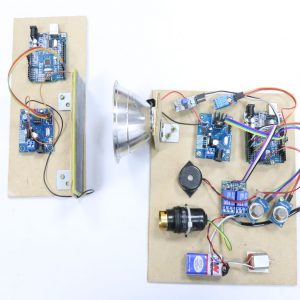

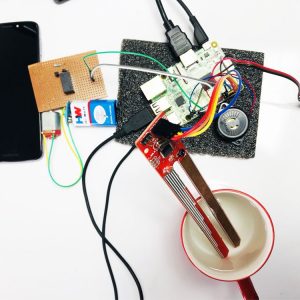

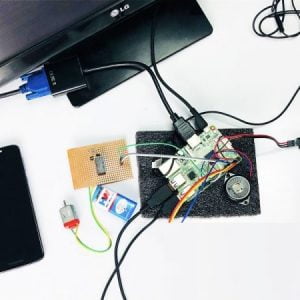

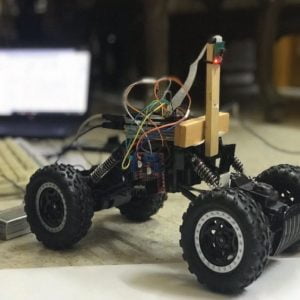

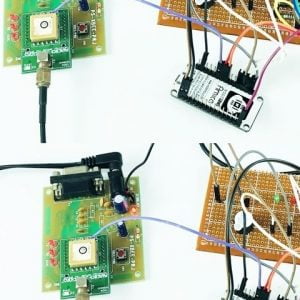

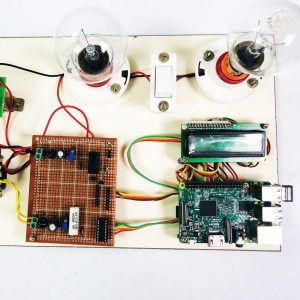

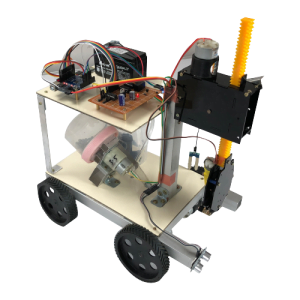

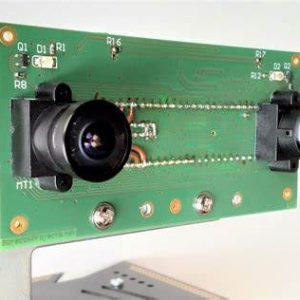

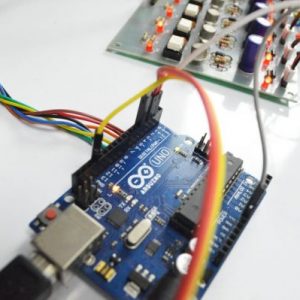

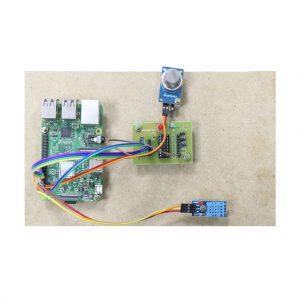

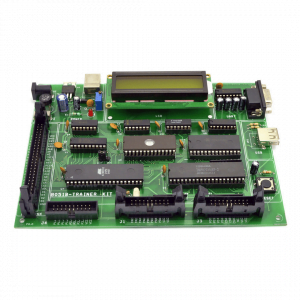

In the above block diagram, the whole system is controlled by the ARM11 processor, which is implemented on the Raspberry Pi board. The system consists of a Raspberry Pi, a camera, an SD card, and a personal computer. All of these components are connected through USB adapters. The Raspberry Pi is the key element of the processing module. First, the sign is detected using a USB camera; the system then performs the function that is pre-configured for that sign.
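The flow described above (capture a frame, detect the sign, perform its pre-configured function) can be sketched as a small loop. This is an illustrative outline under stated assumptions, not the project's code: `run_pipeline`, `read_frame`, `detect_sign`, and `perform` are hypothetical names, and the camera and detector are passed in as plain callables so the loop can be exercised without hardware.

```python
def run_pipeline(read_frame, detect_sign, perform):
    """Process frames until `read_frame` returns None.

    read_frame  -- callable returning the next frame, or None when finished
    detect_sign -- callable mapping a frame to a sign number, or None
    perform     -- callable that carries out the function for a sign
    Returns the list of signs that were acted on, in order.
    """
    handled = []
    while True:
        frame = read_frame()
        if frame is None:
            break  # camera closed or no more frames
        sign = detect_sign(frame)
        if sign is not None:
            perform(sign)
            handled.append(sign)
    return handled


# Stand-ins for the camera and the detector, for demonstration only.
demo_frames = iter(["frame-a", "frame-b", "frame-c"])
demo_labels = {"frame-a": 3, "frame-c": 10}  # "frame-b" shows no sign

demo_result = run_pipeline(
    read_frame=lambda: next(demo_frames, None),
    detect_sign=demo_labels.get,
    perform=lambda sign: print("performing function for sign", sign),
)
print(demo_result)
```

With a real USB camera, `read_frame` could wrap OpenCV's `cv2.VideoCapture(0).read()`, which returns a success flag together with the frame; the wrapper would return None when the flag is false.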

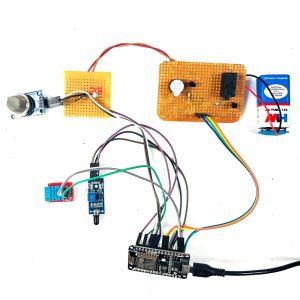

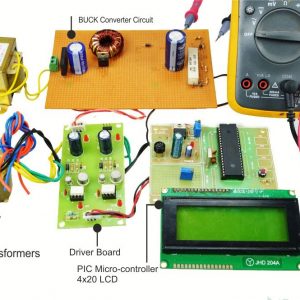

HARDWARE REQUIREMENTS

- Raspberry Pi

- USB Camera

- SD card

- Monitor

- Audio Output unit

SOFTWARE REQUIREMENTS

- Programming language: Python

- Platform: Python 3 IDLE

- Operating system: Raspbian OS

- Library: OpenCV

REFERENCES

[1] B.G.Lee, member IEEE and S.M.Lee ?smart wearable hand devices for sign language interpretation system with sensor fusion?, volume 18 issue: 3, February 1, 2018, IEEE sensor Journal.

[2] Mohammed Elmahgiubi, ?sign language translator and gesture recognition?, 17 December 2015, IEEE.

[3] Lih-Jen Kau, member IEEE Bo-xun Zhuo, ?a real-time portable sign language translation system?, 26 January 2017, IEEE Journal.

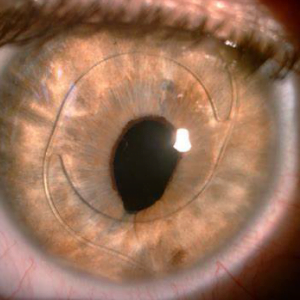

[4] Merin ary Koshi, ?a survey on advanced technology communication between deaf/dumb people using eye blink and flex sensor?, 01 February 2018, IEEE Journal.

[5] Lafayette Ahmed, ?electronic speaking system for speech impaired people:? speak up?, 29 Oct 2015, IEEE Journal.
