Description

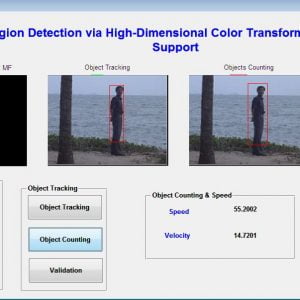

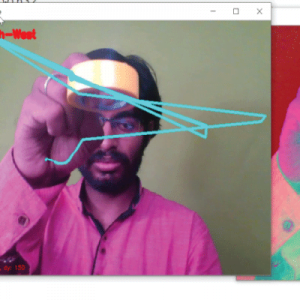

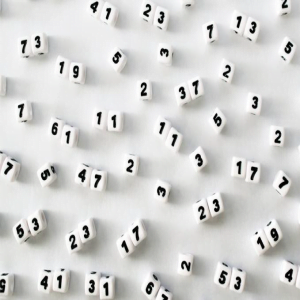

Moving Object Detection and Tracking using SIFT with K-Means Clustering

ABSTRACT:

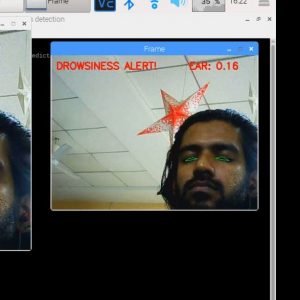

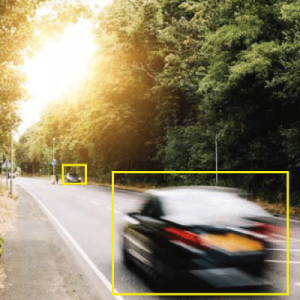

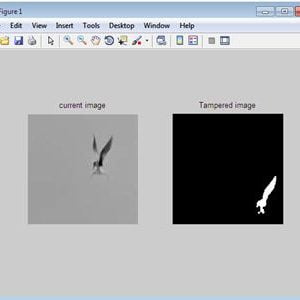

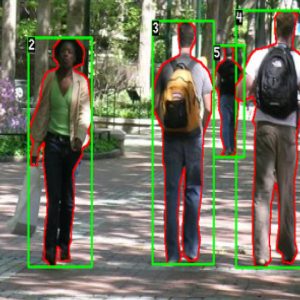

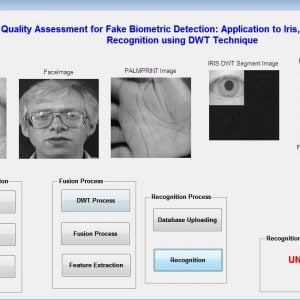

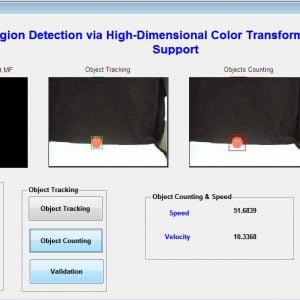

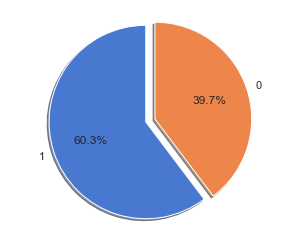

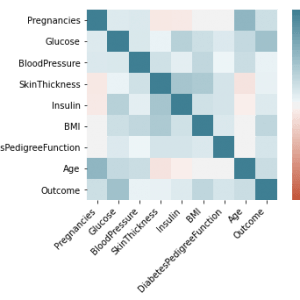

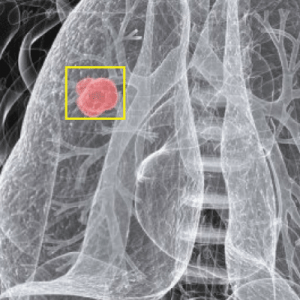

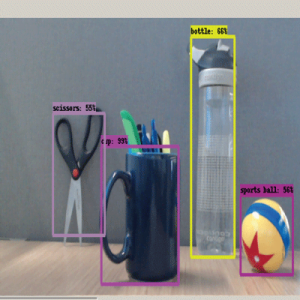

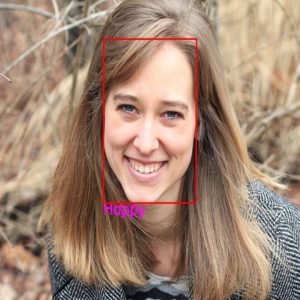

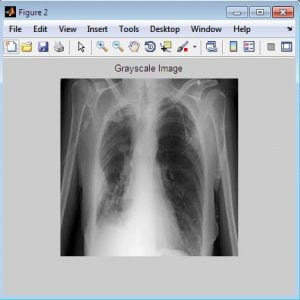

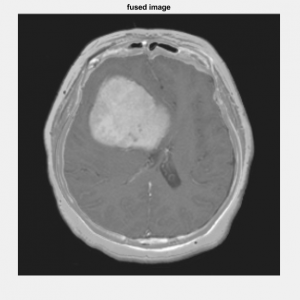

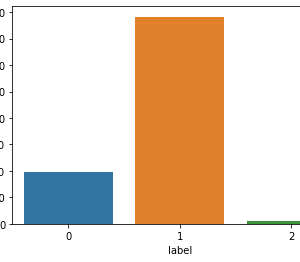

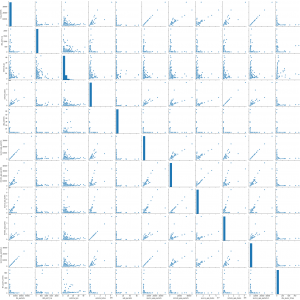

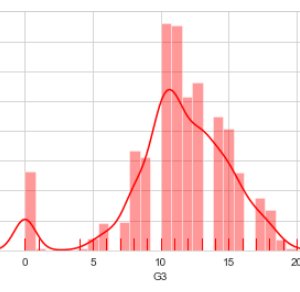

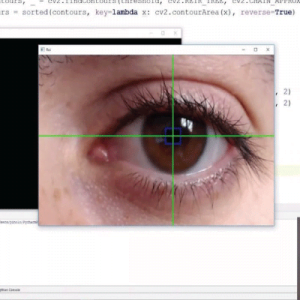

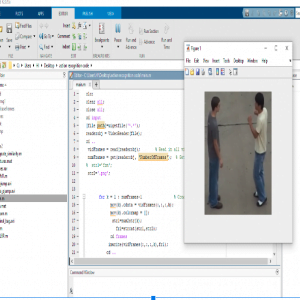

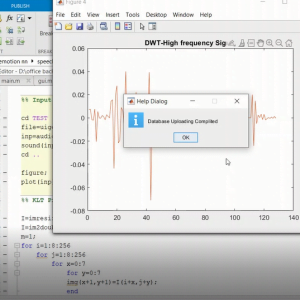

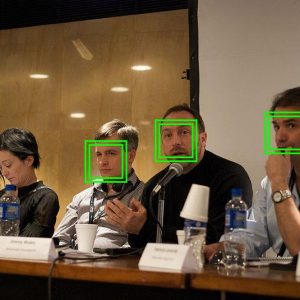

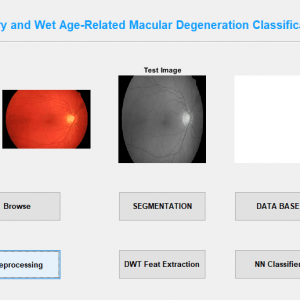

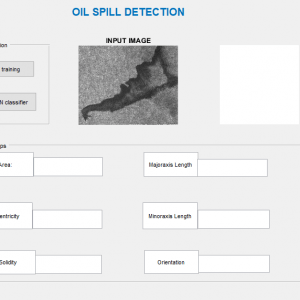

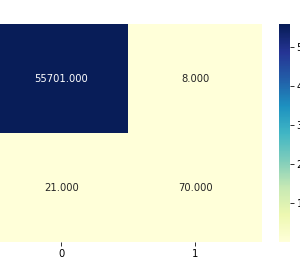

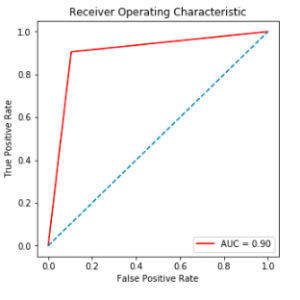

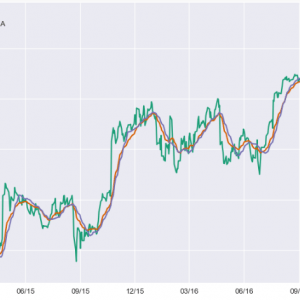

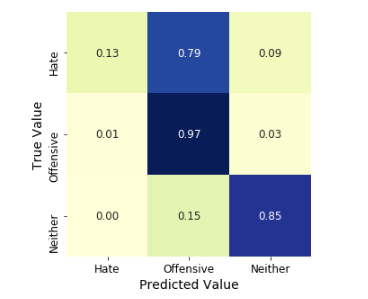

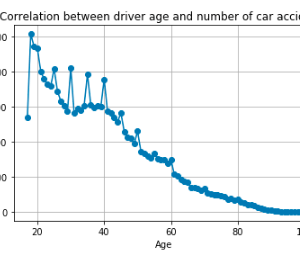

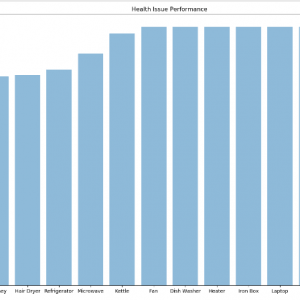

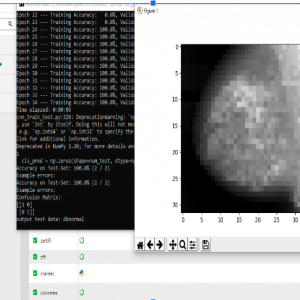

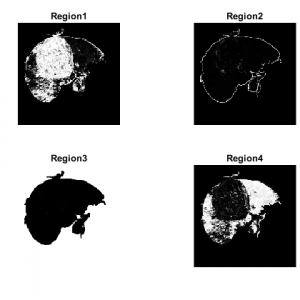

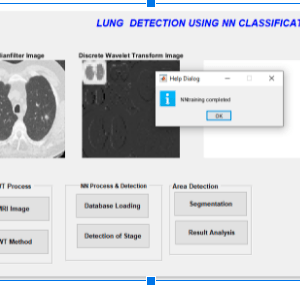

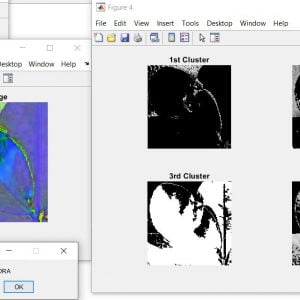

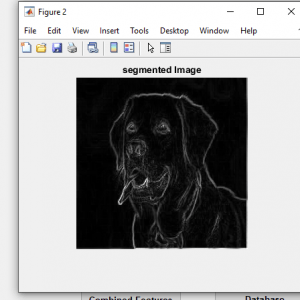

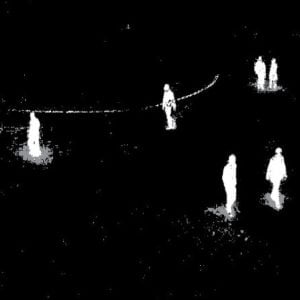

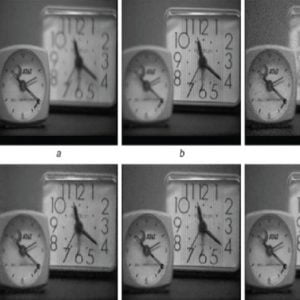

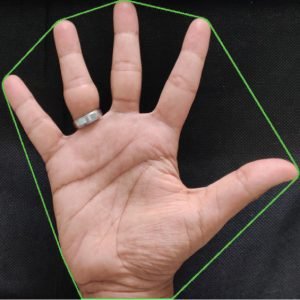

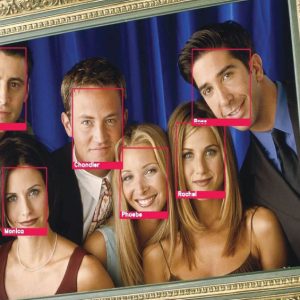

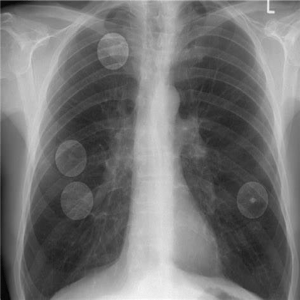

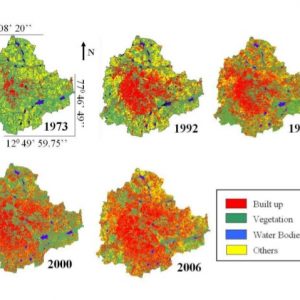

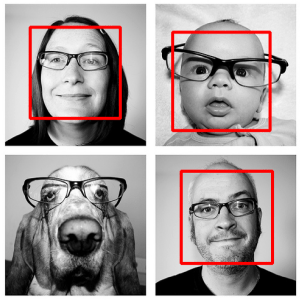

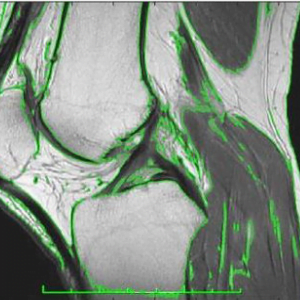

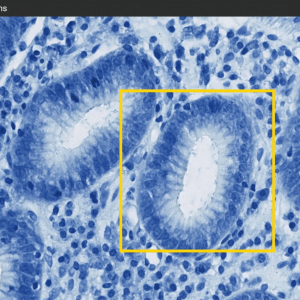

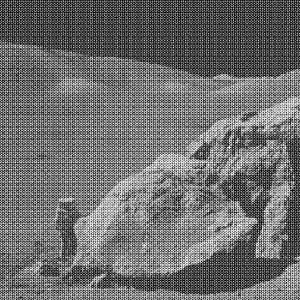

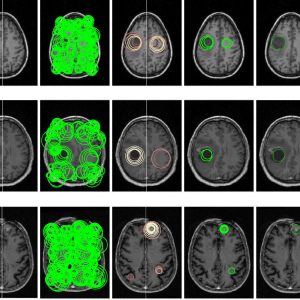

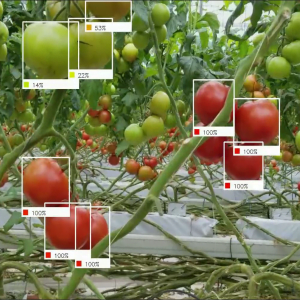

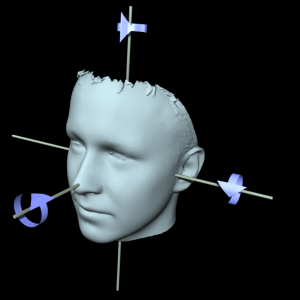

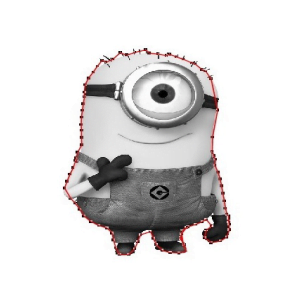

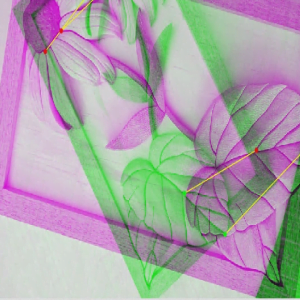

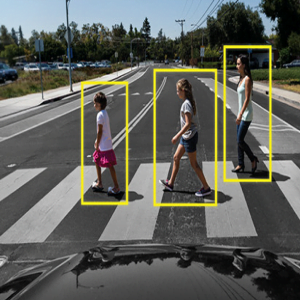

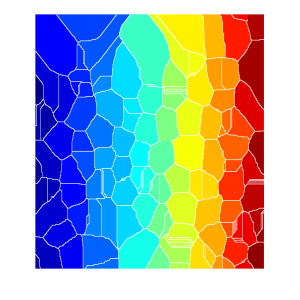

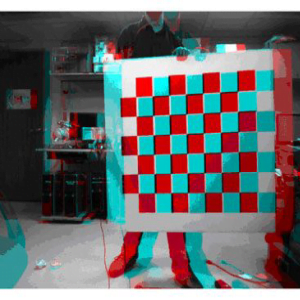

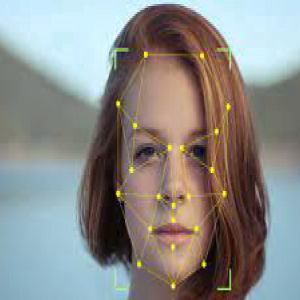

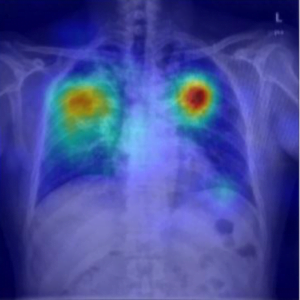

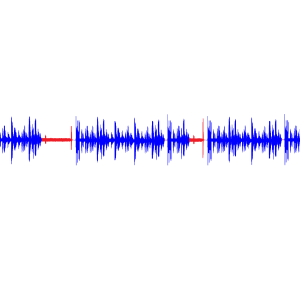

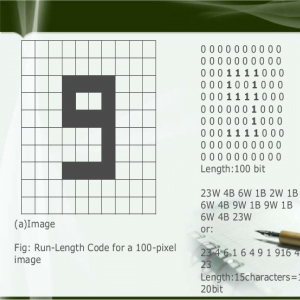

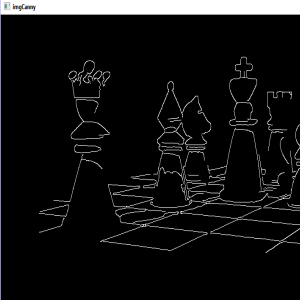

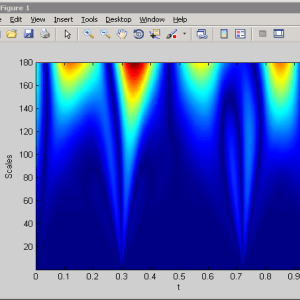

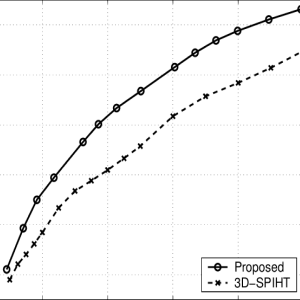

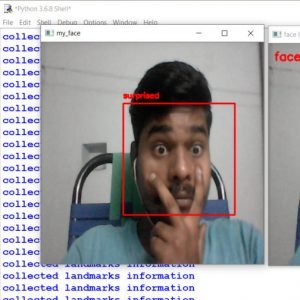

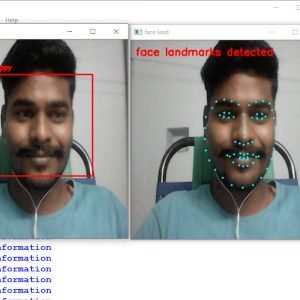

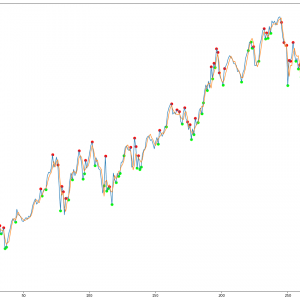

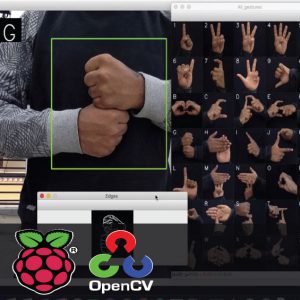

The project presents moving object detection based on the SIFT algorithm for video surveillance systems. Object detection is approached by clustering foreground objects in the absence of background noise. The method starts with feature matching and initializes the background either from the start frame or from the first few frames using the approximate median method. A complex wavelet transform is then applied to both the current frame and the initialized background frame, generating low- and high-frequency subbands. Frame differencing is performed in these subbands, followed by edge map creation and image reconstruction. After detection, the performance of the method is measured against ground-truth frames using metrics such as sensitivity, accuracy, correlation, and peak signal-to-noise ratio. The detected objects are then tracked using connected component analysis. The simulation results show that the methodology achieves better accuracy and lower processing time than existing methods.
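
The approximate median method named above can be illustrated with a minimal sketch. It assumes grayscale frames stored as nested lists of integers and a step of one gray level per frame; the function names `update_background` and `estimate_background` are hypothetical and not taken from the project's MATLAB code.

```python
def update_background(background, frame):
    """One approximate-median step: move each background pixel
    one gray level toward the corresponding pixel of the frame."""
    updated = []
    for bg_row, fr_row in zip(background, frame):
        new_row = []
        for bg, px in zip(bg_row, fr_row):
            if px > bg:
                new_row.append(bg + 1)
            elif px < bg:
                new_row.append(bg - 1)
            else:
                new_row.append(bg)
        updated.append(new_row)
    return updated


def estimate_background(frames):
    """Initialize from the first frame (an assumption), then apply
    the update once for every later frame."""
    background = [row[:] for row in frames[0]]
    for frame in frames[1:]:
        background = update_background(background, frame)
    return background


# Tiny 2x2 grayscale "video": one pixel briefly jumps from 10 to 200.
frames = [
    [[10, 50], [50, 50]],
    [[200, 50], [50, 50]],
    [[10, 50], [50, 50]],
    [[10, 50], [50, 50]],
]
background = estimate_background(frames)
```

Because each update moves a pixel by at most one gray level, a short-lived bright object barely disturbs the estimate, which is why the running value settles near the temporal median of each pixel.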

INTRODUCTION:

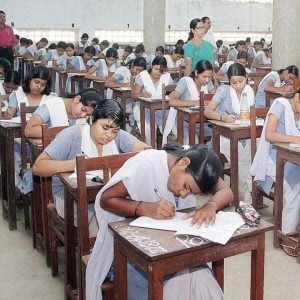

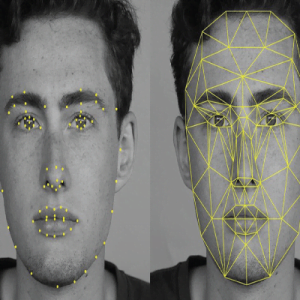

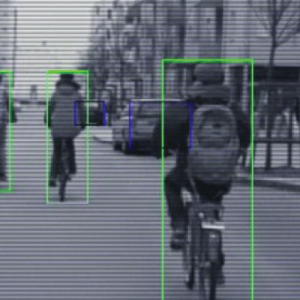

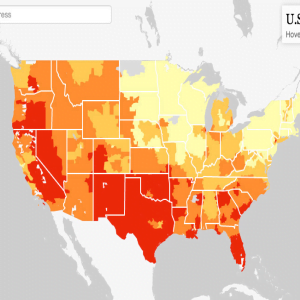

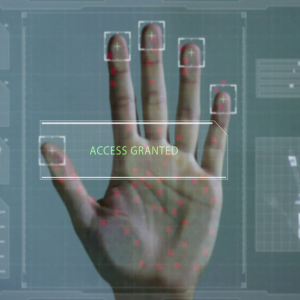

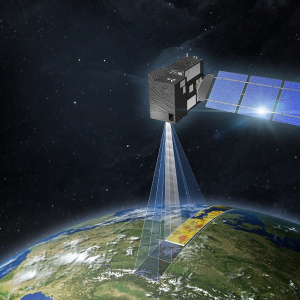

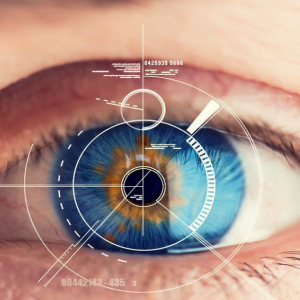

Automatic visual detection of objects is a crucial task for a wide range of home, business, and industrial applications. Video cameras are among the most commonly used sensors, with applications ranging from surveillance to smart rooms for video conferencing. Moving target detection means separating moving objects from the background in a continuous video sequence. Moving target tracking means finding the successive locations of the moving object in the video. Both tasks require dedicated algorithms. The methods currently used for moving object detection are mainly the frame subtraction method, the SIFT method, and the optical flow method.

EXISTING SYSTEM:

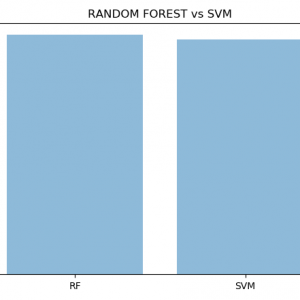

The existing approach uses a combination of motion detection and image-based template matching to track targets. Motion is detected by temporal differencing, and template matching is performed only at the locations flagged by the motion detection stage, which gives a robust target-tracking method that uses an SVM.

Moving Object Detection and Tracking using SIFT with K-Means Clustering

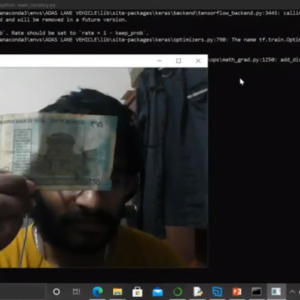

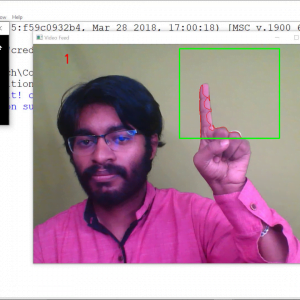

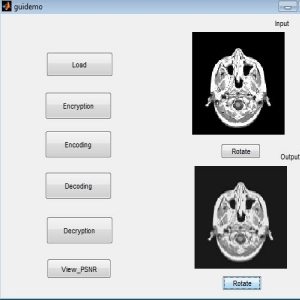

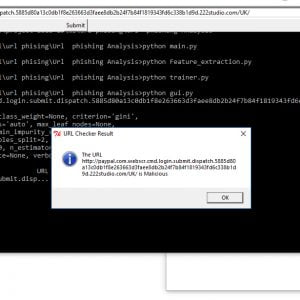

PROPOSED SYSTEM:

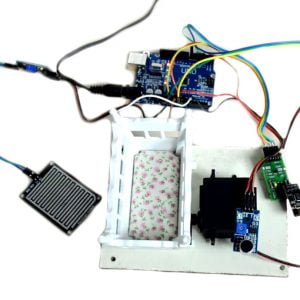

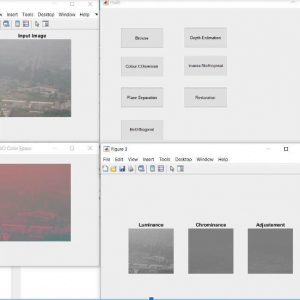

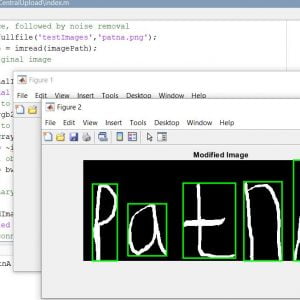

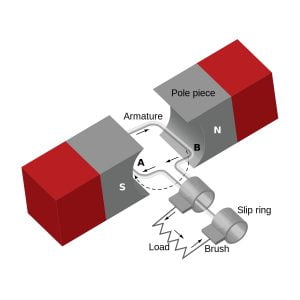

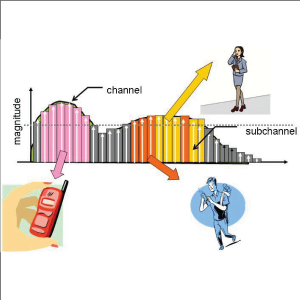

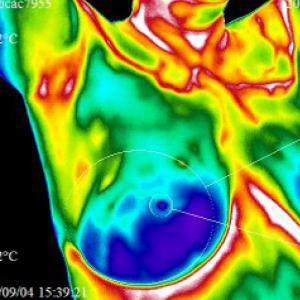

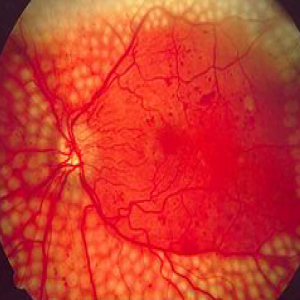

The proposed system takes video frames as input and matches their key points using the SIFT algorithm; the matched features are then clustered to represent the objects in the image. The method uses the difference between the current image and the background image to detect the motion region, and it is generally able to provide data that includes object information. The background image is subtracted from the current frame. If the pixel difference is greater than the set threshold value T, the pixel is classified as belonging to the moving object; otherwise, it is classified as a background pixel. The detected moving object is then classified and tracked.
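
The thresholding rule and the clustering step described above might be sketched as follows. This is an illustration under stated assumptions, not the project's MATLAB implementation: frames are nested lists of gray levels, SIFT key-point extraction is omitted, and the coordinates of the foreground pixels stand in for key-point locations when K-means is applied. The names `foreground_mask` and `kmeans`, the threshold of 30, and the choice of initial centroids are all hypothetical.

```python
def foreground_mask(frame, background, threshold):
    """Mark a pixel 1 (moving object) when |frame - background| > threshold,
    otherwise 0 (background)."""
    return [
        [1 if abs(px - bg) > threshold else 0 for px, bg in zip(fr_row, bg_row)]
        for fr_row, bg_row in zip(frame, background)
    ]


def kmeans(points, centroids, max_iters=100):
    """Plain K-means on 2-D points, starting from the given centroids."""
    centroids = [tuple(c) for c in centroids]
    labels = []
    for _ in range(max_iters):
        # Assignment step: attach each point to its nearest centroid.
        labels = [
            min(range(len(centroids)),
                key=lambda k: (p[0] - centroids[k][0]) ** 2
                + (p[1] - centroids[k][1]) ** 2)
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for k, old in enumerate(centroids):
            members = [p for p, lab in zip(points, labels) if lab == k]
            if members:
                new_centroids.append((sum(m[0] for m in members) / len(members),
                                      sum(m[1] for m in members) / len(members)))
            else:
                new_centroids.append(old)  # keep an empty cluster where it was
        if new_centroids == centroids:
            break  # converged: no centroid moved
        centroids = new_centroids
    return labels, centroids


# Uniform background with two bright objects and one weak noise pixel.
background = [[20] * 6 for _ in range(4)]
frame = [row[:] for row in background]
frame[0][0] = frame[0][1] = frame[1][0] = 200   # object A, top-left
frame[3][4] = frame[3][5] = frame[2][5] = 180   # object B, bottom-right
frame[1][3] = 25                                # noise, below the threshold

mask = foreground_mask(frame, background, threshold=30)
points = [(r, c) for r, row in enumerate(mask) for c, v in enumerate(row) if v]
labels, centroids = kmeans(points, centroids=[points[0], points[-1]])
```

The threshold suppresses the small intensity change at the noise pixel, and the two clusters correspond to the two objects. In practice the number of clusters and the initialization would have to be chosen for the scene.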

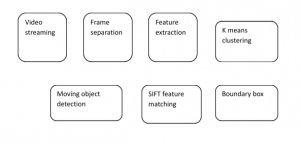

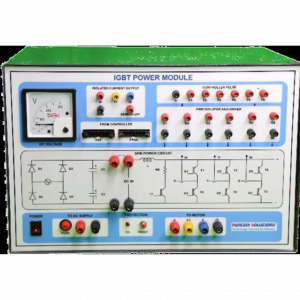

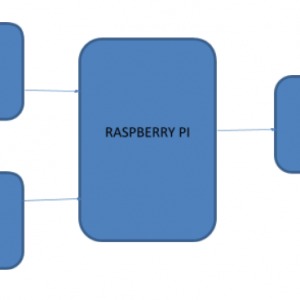

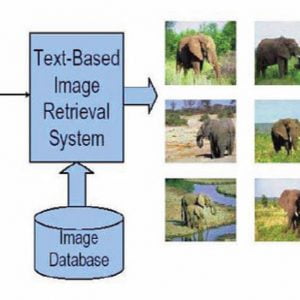

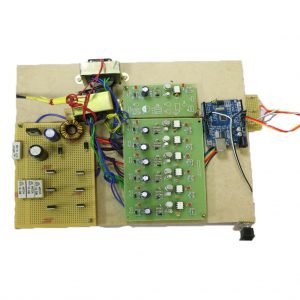

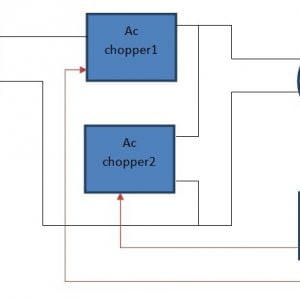

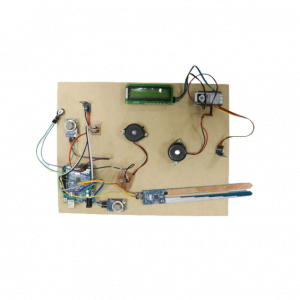

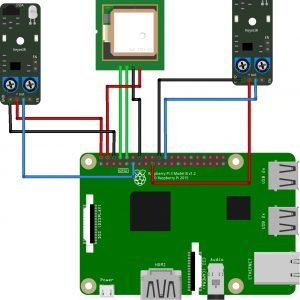

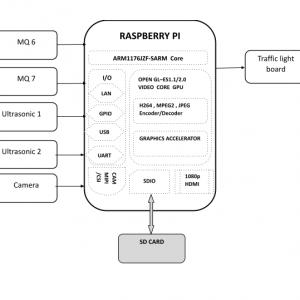

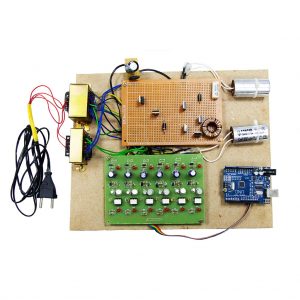

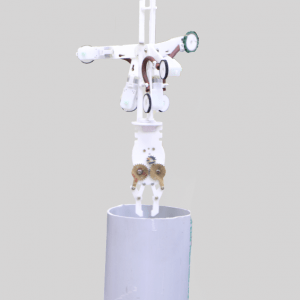

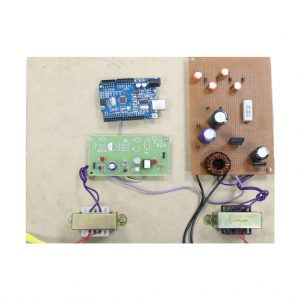

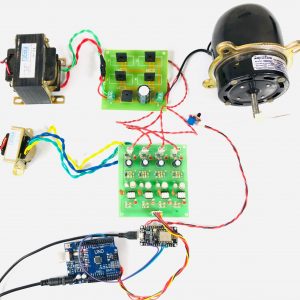

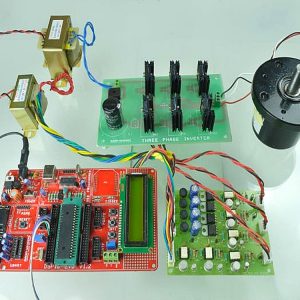

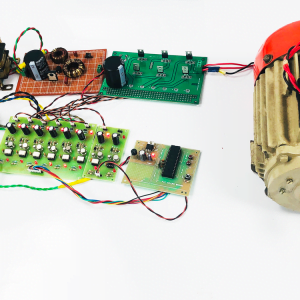

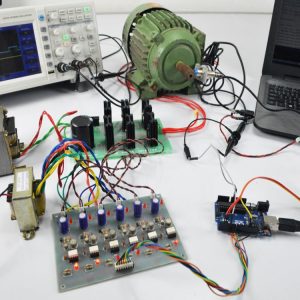

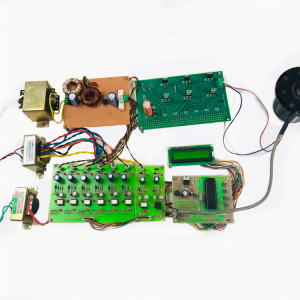

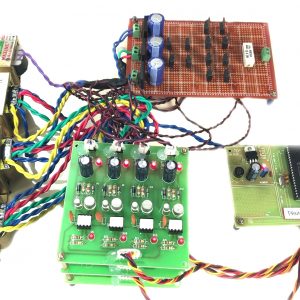

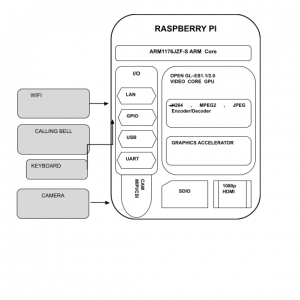

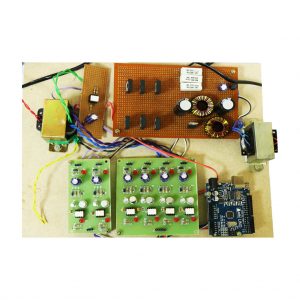

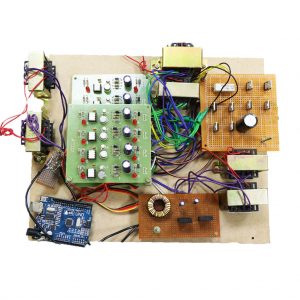

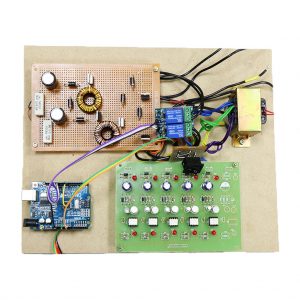

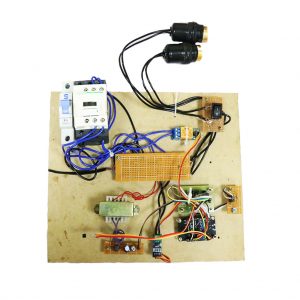

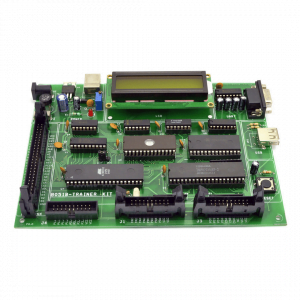

BLOCK DIAGRAM:

ADVANTAGES

- Color video processing

- Noise reduction

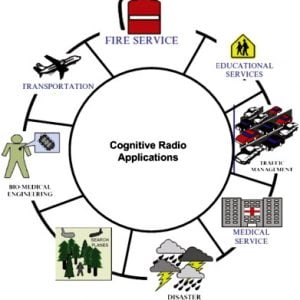

APPLICATIONS

- Video surveillance

- Forensic applications

CONCLUSION:

In the present work, a robust method combining the SIFT algorithm with a clustering process has been proposed for object detection. The performance of the proposed method has been evaluated and compared with other standard methods in terms of the performance metrics discussed above. From the results and their qualitative and quantitative analysis, it can be concluded that the proposed method performs better than the other methods and is also capable of alleviating problems associated with other spatial-domain methods, such as ghosts, clutter, and noise.
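
For reference, three of the performance metrics named in this description (sensitivity, accuracy, and peak signal-to-noise ratio) can be computed as in the sketch below. It assumes binary masks stored as nested lists and uses the standard definitions of these metrics; the function names are hypothetical, the example masks are made up for illustration, and none of the numbers are results from the project.

```python
import math


def confusion_counts(truth, result):
    """Count TP, TN, FP, FN between two binary masks of equal size."""
    tp = tn = fp = fn = 0
    for t_row, r_row in zip(truth, result):
        for t, r in zip(t_row, r_row):
            if t and r:
                tp += 1
            elif not t and not r:
                tn += 1
            elif r:
                fp += 1
            else:
                fn += 1
    return tp, tn, fp, fn


def sensitivity(truth, result):
    """TP / (TP + FN); assumes the ground truth has at least one foreground pixel."""
    tp, _, _, fn = confusion_counts(truth, result)
    return tp / (tp + fn)


def accuracy(truth, result):
    """(TP + TN) / total number of pixels."""
    tp, tn, fp, fn = confusion_counts(truth, result)
    return (tp + tn) / (tp + tn + fp + fn)


def psnr(reference, image, max_value=255):
    """Peak signal-to-noise ratio in dB; infinite when the images are identical."""
    diffs = [(a - b) ** 2
             for ref_row, img_row in zip(reference, image)
             for a, b in zip(ref_row, img_row)]
    mse = sum(diffs) / len(diffs)
    return math.inf if mse == 0 else 10 * math.log10(max_value ** 2 / mse)


# Illustrative ground-truth mask and detection result (not project data).
truth = [[1, 1, 0], [0, 0, 0]]
result = [[1, 0, 0], [0, 1, 0]]
sens = sensitivity(truth, result)   # 1 TP, 1 FN
acc = accuracy(truth, result)       # 4 of 6 pixels agree
```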

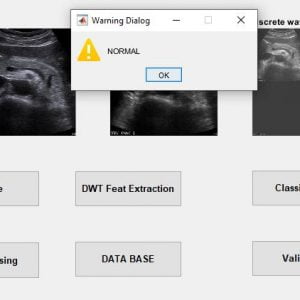

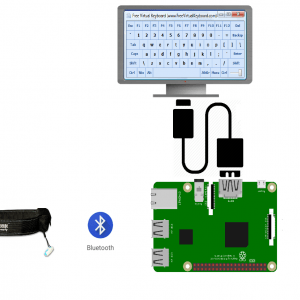

SOFTWARE REQUIREMENT:

MATLAB 7.14 and above
