Description

Image Compression using Huffman Coding and Run Length Coding

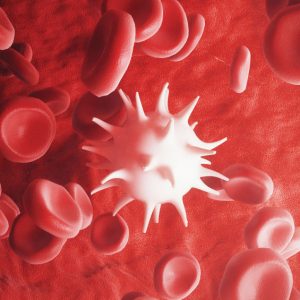

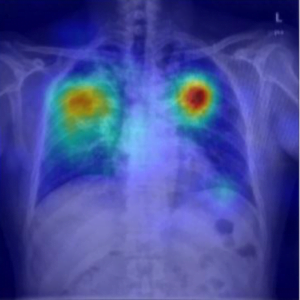

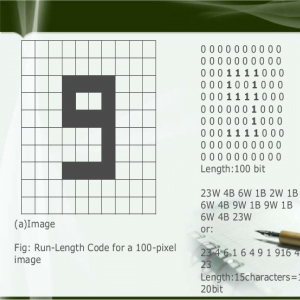

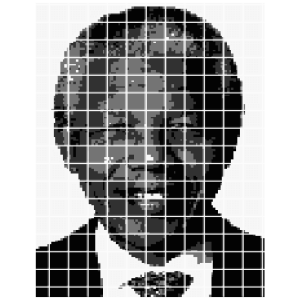

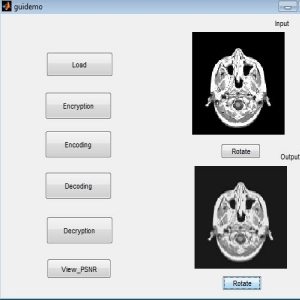

Data compression is the general term for the various algorithms and programs developed to address this problem. A compression program is used to convert data from an easy-to-use format to one optimized for compactness. Likewise, a decompression program returns the information to its original form. This research aims to appear the effect of a simple lossless compression method, RLE or Run Length Encoding, on another lossless compression algorithm which is the Huffman algorithm that generates an optimal prefix code generated from a set of probabilities and DWT gives more significance to accuracy while encoding While RLE simply replaces repeated bytes with a short description of which byte to repeat it.Image Compression using Huffman Coding and Run Length Coding

Image Compression using Huffman Coding and Run Length Coding

INTRODUCTION

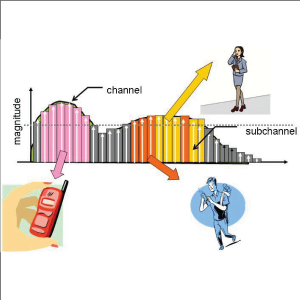

There are two dimensions along which each of the schemes discussed here may be measured, algorithm complexity and amount of compression. When data compression is used in a data transmission application, the goal is speed. Speed of transmission depends upon the number of bits sent, the time required for the encoder to generate the coded message, and the time required for the decoder to recover the original ensemble. In a data storage application, although the degree of compression is the primary concern, it is nonetheless necessary that the algorithm be efficient in order for the scheme to be practical. Several common measures of compression have been suggested, average message length, and compression ratio. Related to each of these measures are assumptions about the characteristics of the source. It is generally assumed in information theory that all statistical parameters of a message source are known with perfect accuracy

EXISTING SYSTEM

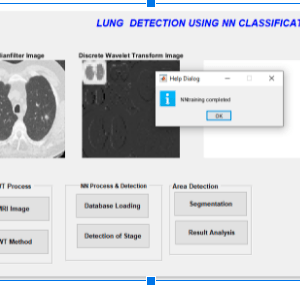

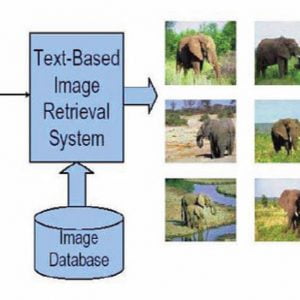

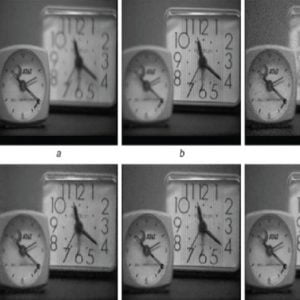

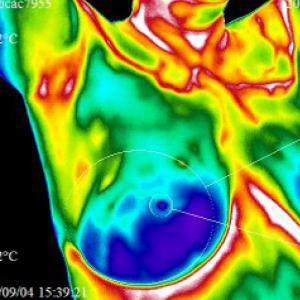

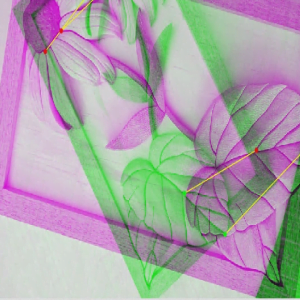

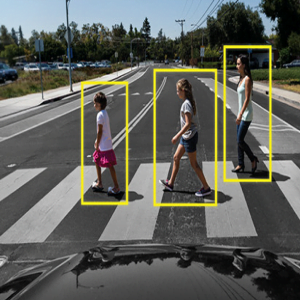

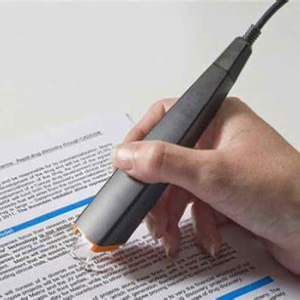

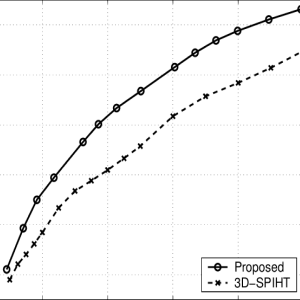

Compression refers to reducing the quantity of data used to represent a file, image, or video content without excessively reducing the quality of the original data. Do we use Discrete cosine transform and normal extraction? which also reduces the number of bits required to store and/or transmit digital media. To compress something means that you have a piece of data and you decrease its size. There are different techniques to do that and they all have their own advantages and disadvantages.

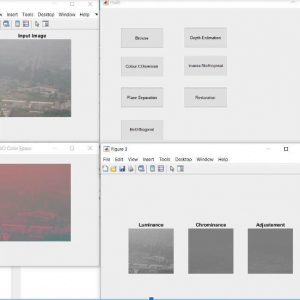

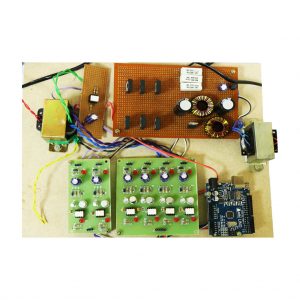

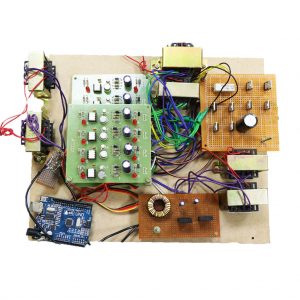

PROPOSED SYSTEM

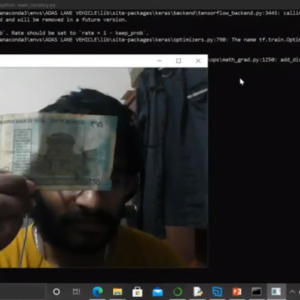

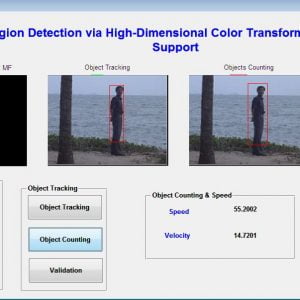

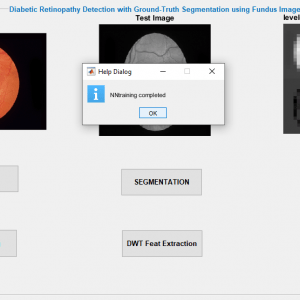

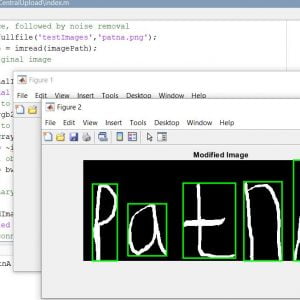

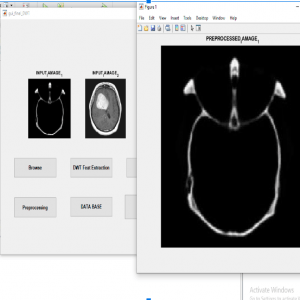

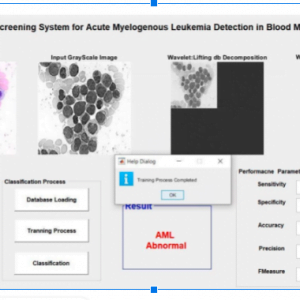

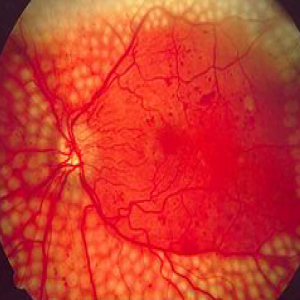

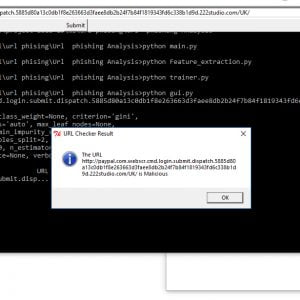

1. Taking the input file, which is a random sequence of English alphabet symbols, computing the probability for each symbol.

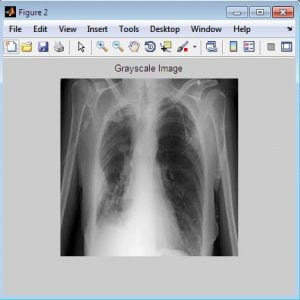

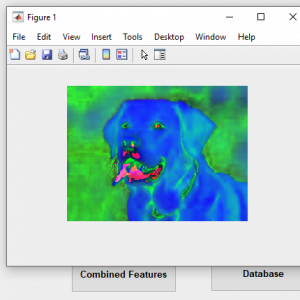

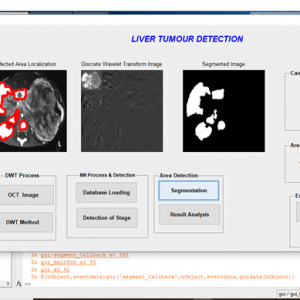

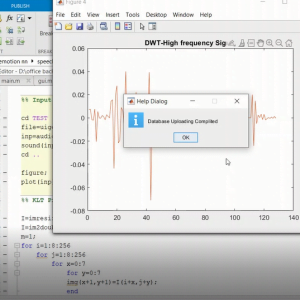

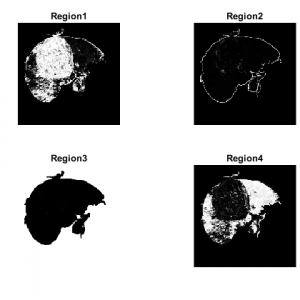

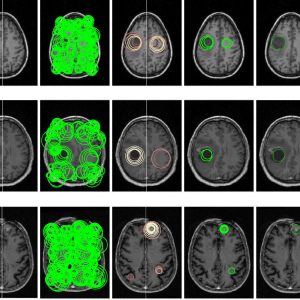

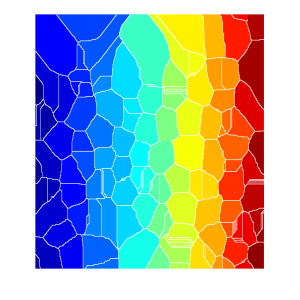

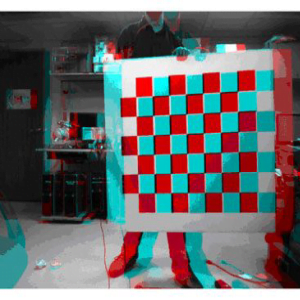

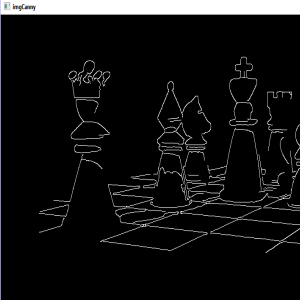

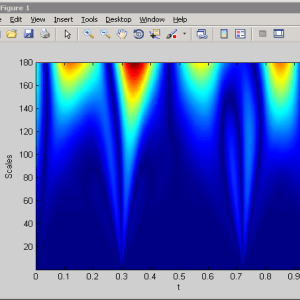

2. Applying the Huffman coding of the sequence of probabilities, the result is a string of 0 and 1 bits with a Discrete wavelet transform for the segmentation process

3. Applying the RLE method on the string of 0 and 1 bits(the result of the Huffman method). The RLE is applied after dividing the string of 0 and 1 into 8-block each and transmitting each into a byte. The RLE is applied on the bytes (0 or 255), in which 0 came from a sequence of eight zeros and 255 came from a sequence of eight ones. Here the RLE is applied only to the 0 and 255 bytes not on all the bytes in the string.

4. The final compressed file contains:- a- the number of symbols. b- the symbols. c- the code words of each symbol. d- the final strings resulted from applying the RLE method to the final code after substituting the codeword of each symbol in the input file.

Customer Reviews

There are no reviews yet.