Description

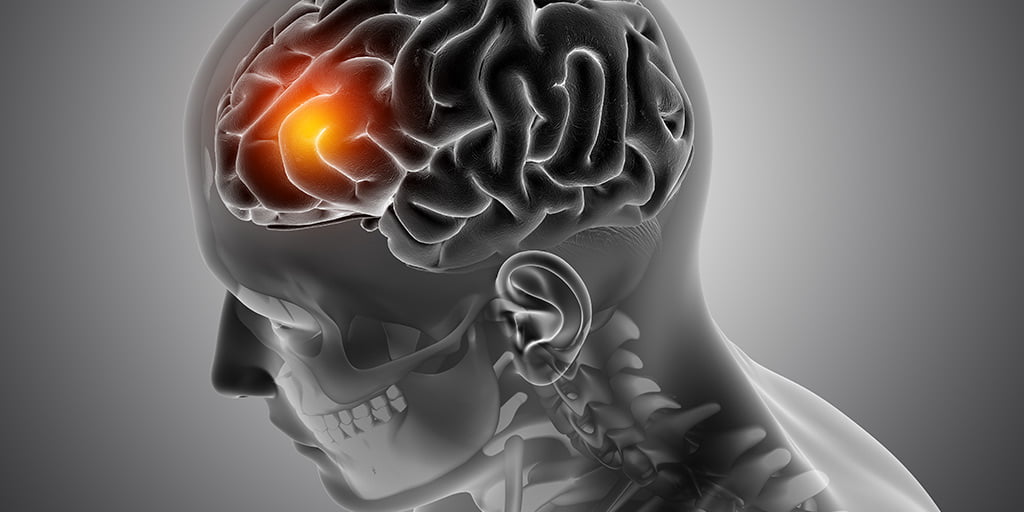

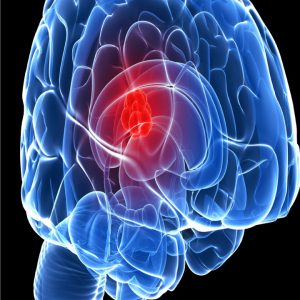

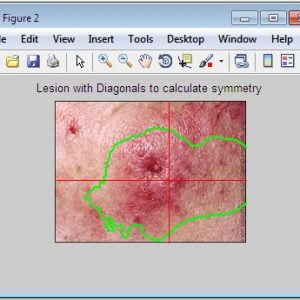

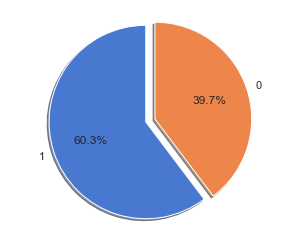

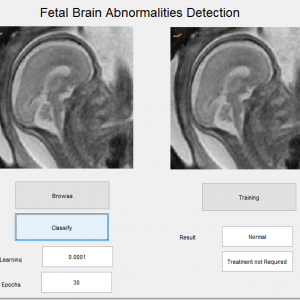

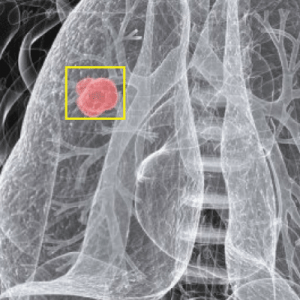

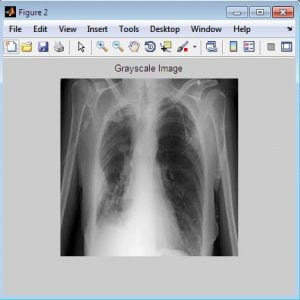

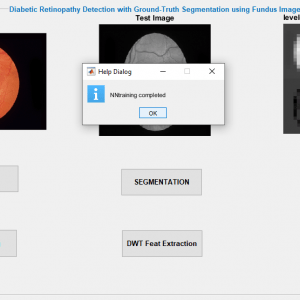

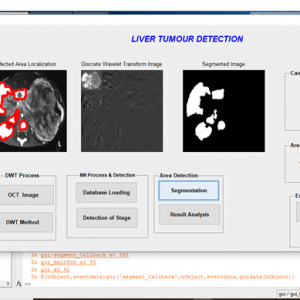

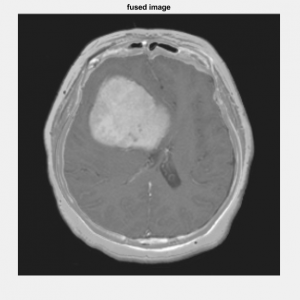

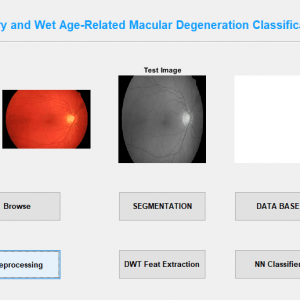

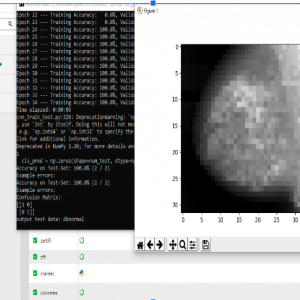

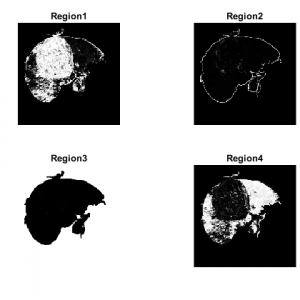

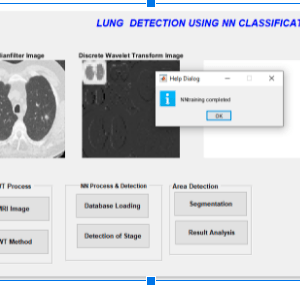

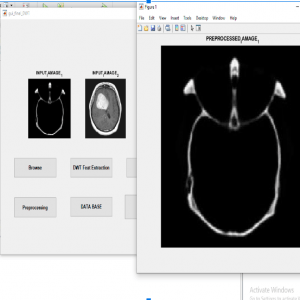

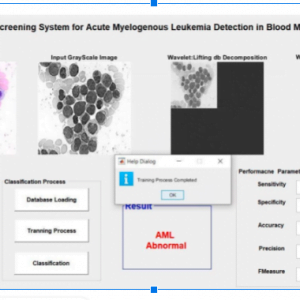

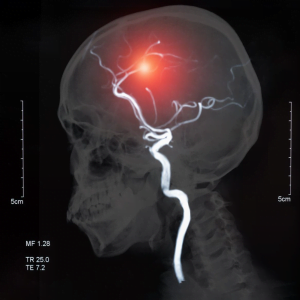

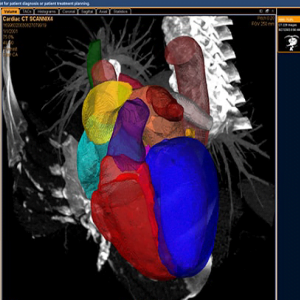

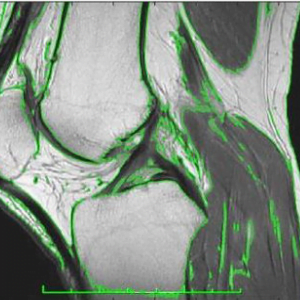

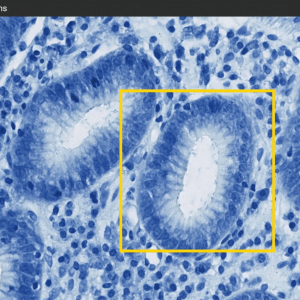

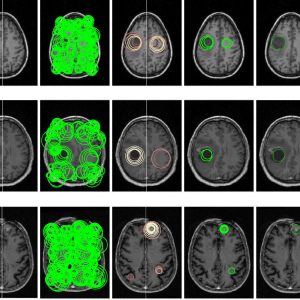

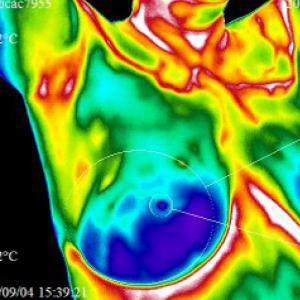

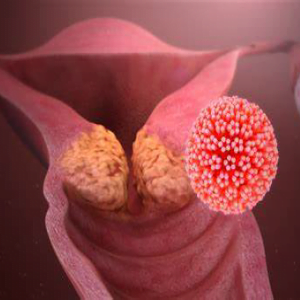

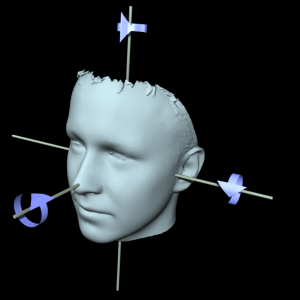

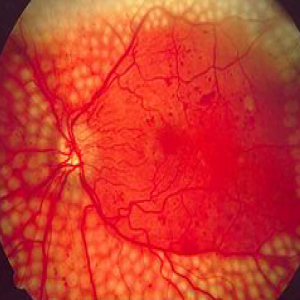

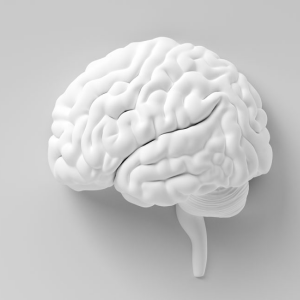

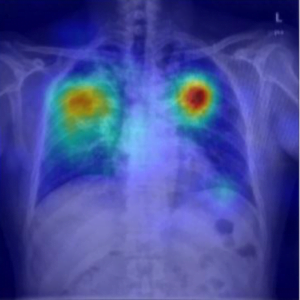

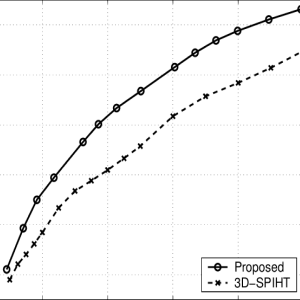

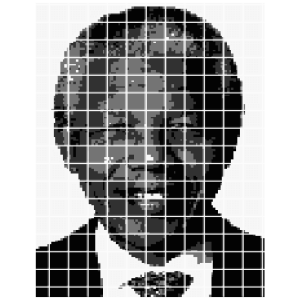

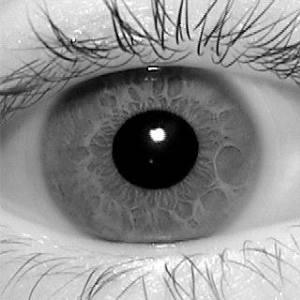

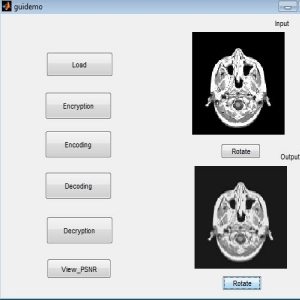

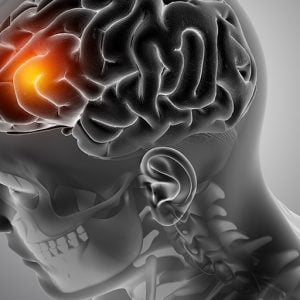

AI-based Brain Tumour Detection using SWT-based Image Fusion – Brain tumor detection is a challenging task in medical image analysis. Manual detection by domain specialists is time-consuming. Numerous methods have been proposed for brain tumor detection and discrimination, but there is still a need for a fast and efficient technique. This article presents a technique to distinguish between benign and malignant tumors. The method integrates image fusion, feature extraction, and classification. A serial fusion of MRI and CT images, based on the stationary wavelet transform (SWT), is used for feature extraction and fusion. The fused feature vector is supplied to multiple classifiers so that their prediction rates can be compared. Image databases are used for tumor detection. The results indicate that the proposed model effectively classifies abnormal and normal brain regions.

DIGITAL IMAGE PROCESSING

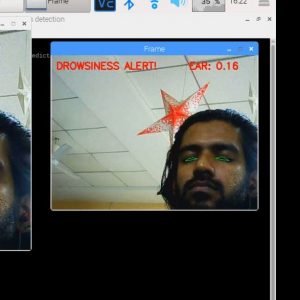

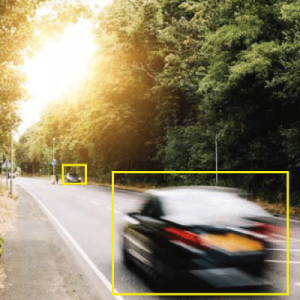

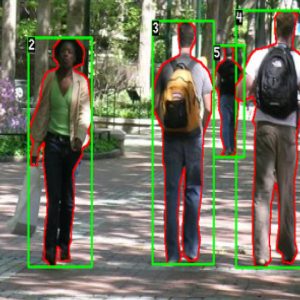

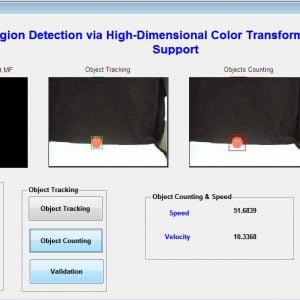

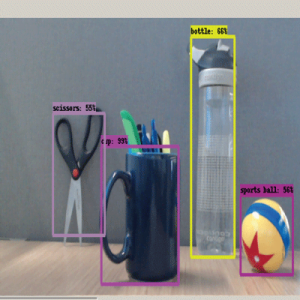

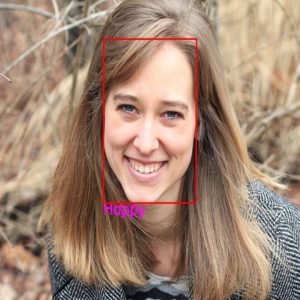

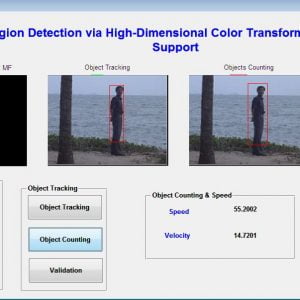

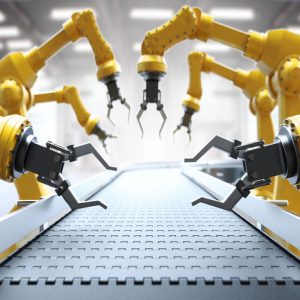

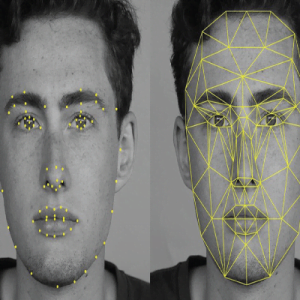

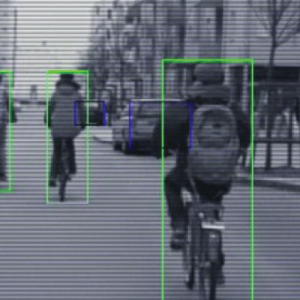

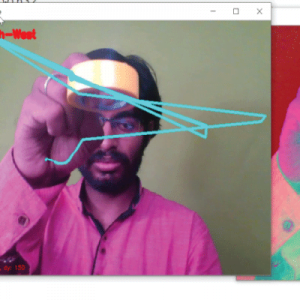

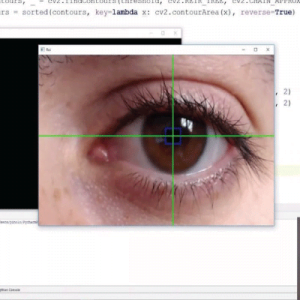

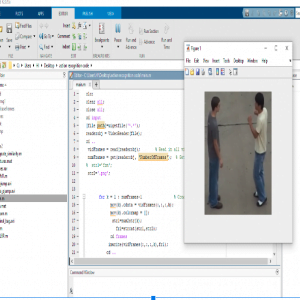

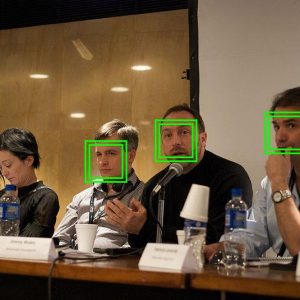

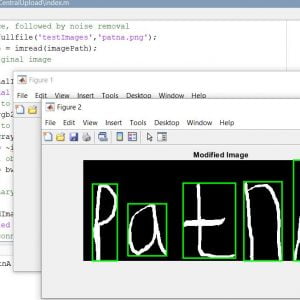

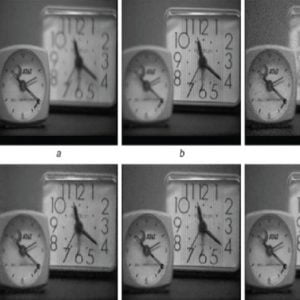

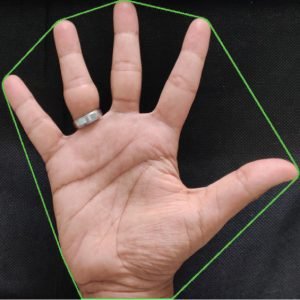

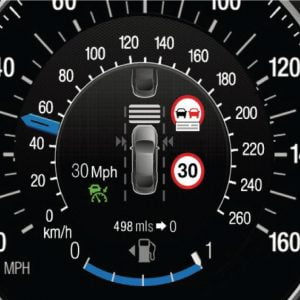

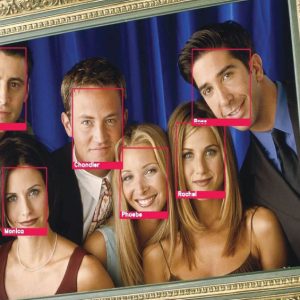

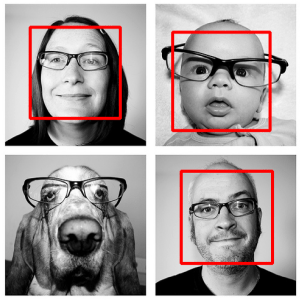

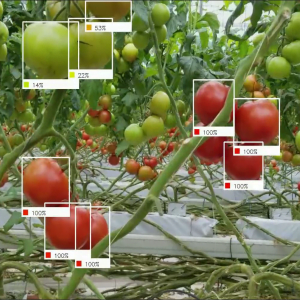

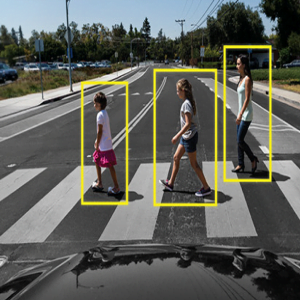

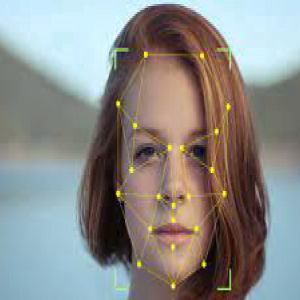

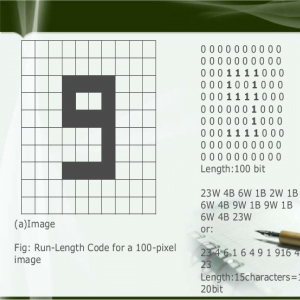

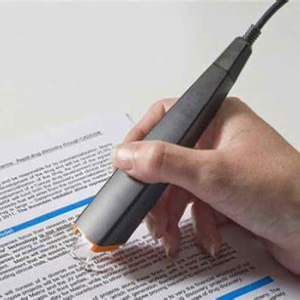

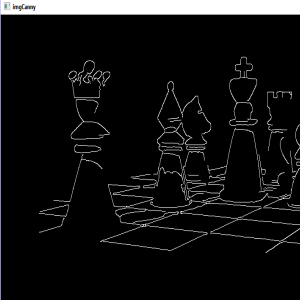

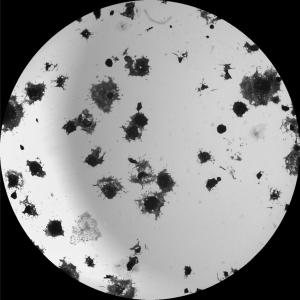

Identifying objects in an image typically starts with image processing techniques such as noise removal, followed by low-level feature extraction to locate lines, regions, and possibly areas with certain textures.

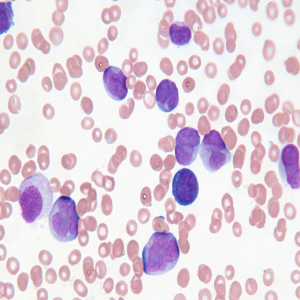

The clever bit is to interpret collections of these shapes as single objects, e.g. cars on a road, boxes on a conveyor belt, or cancerous cells on a microscope slide. One reason this is an AI problem is that an object can appear very different when viewed from different angles or under different lighting. Another problem is deciding which features belong to which object and which are background, shadows, etc. The human visual system performs these tasks mostly unconsciously, but a computer requires skillful programming and a lot of processing power to approach human performance.

Image processing is the manipulation of data in the form of an image through several possible techniques. An image is usually interpreted as a two-dimensional array of brightness values and is most familiarly represented by such patterns as those of a photographic print, slide, television screen, or movie screen. An image can be processed optically or digitally with a computer.

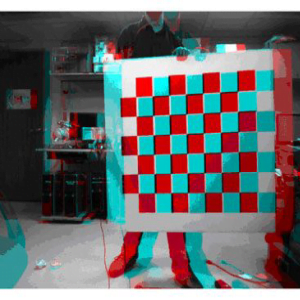

To digitally process an image, it is first necessary to reduce the image to a series of numbers that can be manipulated by the computer. Each number, representing the brightness value of the image at a particular location, is called a picture element, or pixel. A typical digitized image may have 512 × 512, or roughly 262,000, pixels, although much larger images are becoming common. Once the image has been digitized, there are three basic kinds of operation that can be performed on it in the computer. For a point operation, a pixel value in the output image depends on a single pixel value in the input image. For a local operation, several neighboring pixels in the input image determine the value of an output image pixel. In a global operation, all of the input image pixels contribute to an output image pixel value.
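The three kinds of operation described above can be sketched in a few lines of Python. This is a minimal illustration and not part of the original project: the function names (`point_invert`, `local_mean3`, `global_stretch`) and the choice of inversion, a 3 × 3 mean filter, and min-max contrast stretching as examples are assumptions made here. Images are represented as plain nested lists of brightness values in the range 0 to 255.

```python
def point_invert(img):
    """Point operation: each output pixel depends on one input pixel."""
    return [[255 - p for p in row] for row in img]


def local_mean3(img):
    """Local operation: each output pixel is the mean of its 3x3
    neighborhood. Coordinates outside the image are clamped to the edge."""
    h, w = len(img), len(img[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            window = [
                img[min(max(y + dy, 0), h - 1)][min(max(x + dx, 0), w - 1)]
                for dy in (-1, 0, 1)
                for dx in (-1, 0, 1)
            ]
            row.append(round(sum(window) / 9))
        out.append(row)
    return out


def global_stretch(img):
    """Global operation: every input pixel contributes to each output
    pixel, here through the image-wide minimum and maximum."""
    flat = [p for row in img for p in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:  # flat image: nothing to stretch
        return [[0 for _ in row] for row in img]
    return [[round(255 * (p - lo) / (hi - lo)) for p in row] for row in img]
```

For instance, `point_invert([[10, 20], [30, 50]])` maps each pixel independently, while `global_stretch` on the same image sends the darkest pixel to 0 and the brightest to 255 because it first inspects the whole image.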

EXISTING METHOD

- Principal component analysis (PCA)

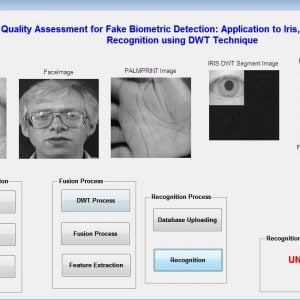

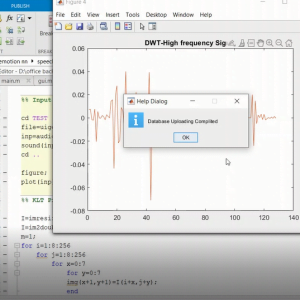

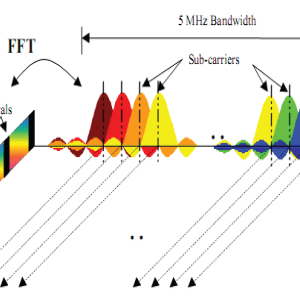

- Discrete wavelet transform (DWT)

- Dual-tree complex discrete wavelet transform (DTDWT)

- Nonsubsampled contourlet transform (NSCT)

- Clustering for segmentation

DRAWBACKS

- Contrast information is lost with the averaging method

- The maximum-selection approach is sensitive to sensor noise

- Spatial distortion is high

- Edge and texture representation is limited
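The first two drawbacks can be seen on a toy example. The sketch below is an illustration under assumptions, not code from the methods listed above: the helper names (`fuse_average`, `fuse_maximum`, `contrast`) and the pixel values are hypothetical. Source `a` contains a bright feature, while source `b` misses that feature and instead contains a noise spike.

```python
def fuse_average(a, b):
    """Pixel-wise averaging rule."""
    return [[(p + q) / 2 for p, q in zip(ra, rb)] for ra, rb in zip(a, b)]


def fuse_maximum(a, b):
    """Pixel-wise maximum-selection rule."""
    return [[max(p, q) for p, q in zip(ra, rb)] for ra, rb in zip(a, b)]


def contrast(img):
    """Crude contrast measure: brightest minus darkest pixel."""
    flat = [p for row in img for p in row]
    return max(flat) - min(flat)


# Hypothetical one-row images: a feature of brightness 200 in `a`,
# a sensor-noise spike of brightness 90 in `b`.
a = [[0, 200, 0]]
b = [[0, 0, 90]]

averaged = fuse_average(a, b)   # feature is attenuated
maximized = fuse_maximum(a, b)  # feature kept, but noise kept too
```

Averaging halves the feature that only one source contains, so `contrast(averaged)` is 100 against 200 in `a`. Maximum selection keeps the feature at full strength but also copies the noise spike into the result unchanged.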

PROPOSED METHOD

- Stationary wavelet transform (SWT)

- Inverse stationary wavelet transform (ISWT)

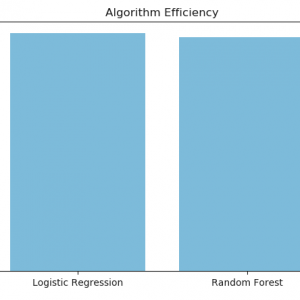

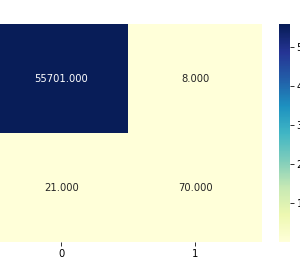

- Neural Network Classifier

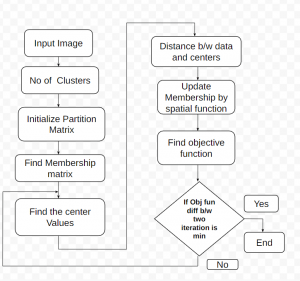

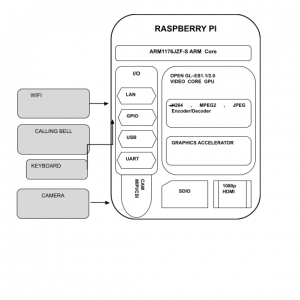

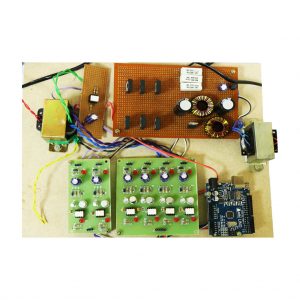

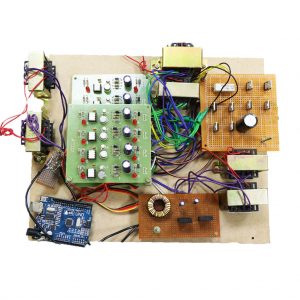

BLOCK DIAGRAM

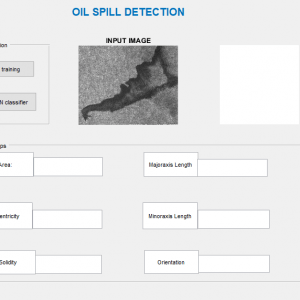

METHODOLOGIES

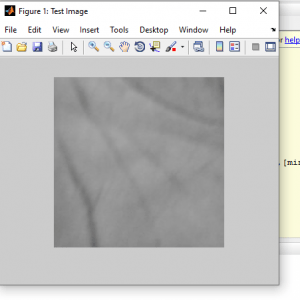

- Pre-Processing

- SWT Fusion

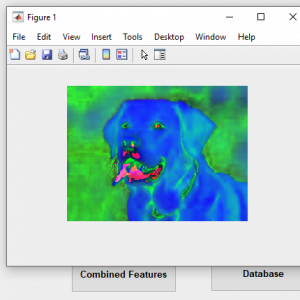

- Feature Extraction

- Neural Network Classifier

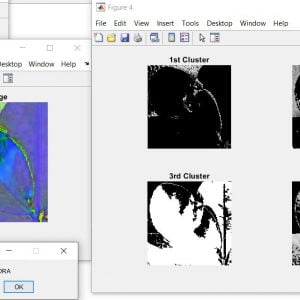

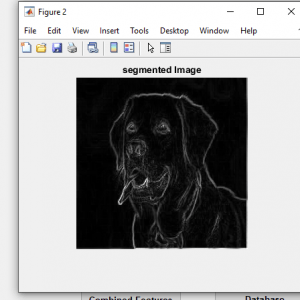

- Segmentation

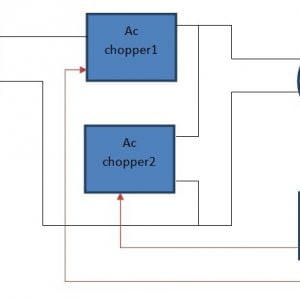

FUSION METHOD

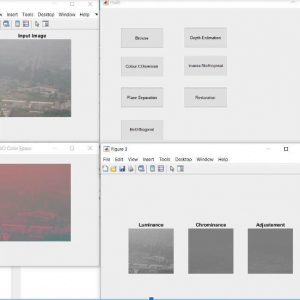

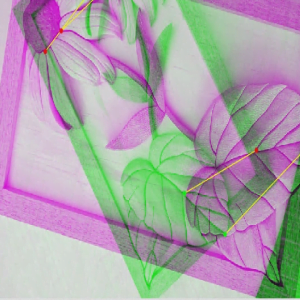

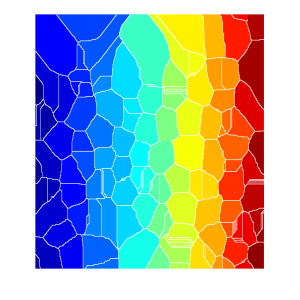

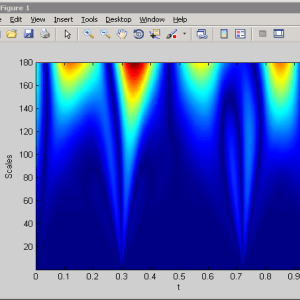

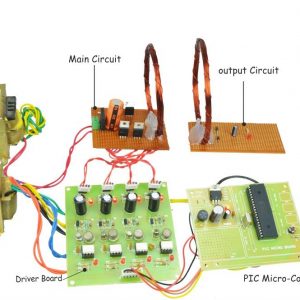

- The proposed method is composed of several parts. First, SWT is applied to decompose all the source images into a set of sub-images that contain the important features of these source images. Next, a fusion rule based on consistency verification is used to fuse the coefficients of the different sub-images effectively. Finally, the inverse SWT (ISWT) is carried out to reconstruct the fused image. The details of the proposed image fusion scheme are presented in the following.
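The decompose, fuse, and reconstruct steps can be sketched as follows. This is a minimal sketch under stated assumptions, not the project's implementation: it uses a one-level undecimated Haar transform with periodic boundaries and 1/2 scaling as a stand-in for SWT, and it replaces the consistency-verification rule, which the text does not specify, with a simple assumed rule (average the approximation sub-images, keep the larger-magnitude detail coefficient). All function names are hypothetical.

```python
def swt1d(x):
    """One-level undecimated Haar transform of a sequence (periodic)."""
    n = len(x)
    approx = [(x[i] + x[(i + 1) % n]) / 2 for i in range(n)]
    detail = [(x[i] - x[(i + 1) % n]) / 2 for i in range(n)]
    return approx, detail


def iswt1d(approx, detail):
    """Inverse of swt1d. Each sample can be recovered from two
    coefficient pairs; the two estimates are averaged."""
    n = len(approx)
    return [
        ((approx[i] + detail[i]) + (approx[i - 1] - detail[i - 1])) / 2
        for i in range(n)
    ]


def transpose(m):
    return [list(col) for col in zip(*m)]


def swt2d(img):
    """Transform rows, then columns: one approximation sub-image and
    three detail sub-images, all the same size as the input."""
    lo, hi = zip(*(swt1d(row) for row in img))

    def columns(m):
        a, d = zip(*(swt1d(col) for col in transpose(m)))
        return transpose(a), transpose(d)

    ll, lh = columns(lo)
    hl, hh = columns(hi)
    return ll, (lh, hl, hh)


def iswt2d(ll, details):
    """Undo the column transform, then the row transform."""
    lh, hl, hh = details

    def columns(a, d):
        pairs = zip(transpose(a), transpose(d))
        return transpose([iswt1d(ca, cd) for ca, cd in pairs])

    lo, hi = columns(ll, lh), columns(hl, hh)
    return [iswt1d(ra, rd) for ra, rd in zip(lo, hi)]


def fuse(img_a, img_b):
    """Assumed fusion rule (not the source's consistency verification):
    average approximations, pick the larger-magnitude detail."""
    ll_a, det_a = swt2d(img_a)
    ll_b, det_b = swt2d(img_b)
    ll = [[(p + q) / 2 for p, q in zip(ra, rb)] for ra, rb in zip(ll_a, ll_b)]
    det = tuple(
        [[p if abs(p) >= abs(q) else q for p, q in zip(ra, rb)]
         for ra, rb in zip(da, db)]
        for da, db in zip(det_a, det_b)
    )
    return iswt2d(ll, det)
```

Calling `fuse(mri, ct)` on two registered, equally sized grayscale images (nested lists of numbers) returns a fused image of the same size. Because the transform is undecimated, every sub-image keeps the input resolution, which is what allows coefficients from the two sources to be compared position by position.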

REFERENCES

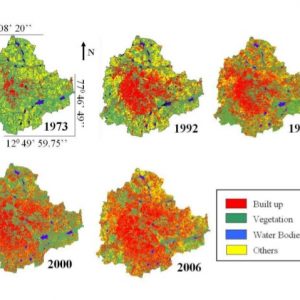

- Y. Gu, Y. Zhang, and J. Zhang, “Integration of spatial-spectral information for resolution enhancement in hyperspectral images,” IEEE Trans. Geosci. Remote Sens., vol. 46, no. 5, pp. 1347–1358, May 2008.

- Y. Zhao, J. Yang, Q. Zhang, L. Song, Y. Cheng, and Q. Pan, “Hyperspectral imagery super-resolution by sparse representation and spectral regularization,” EURASIP J. Adv. Signal Process., vol. 2011, no. 1, pp. 1–10, Oct. 2011.

- T. Akgun, Y. Altunbasak, and R. M. Mersereau, “Super-resolution reconstruction of hyperspectral images,”IEEE Trans. Image Process., vol. 14, no. 11, pp. 1860–1875, Nov. 2005.

- L. Loncanet al., “Hyperspectral pansharpening: A review,”IEEE Geosci. Remote Sens. Mag., vol. 3, no. 3, pp. 27–46, Sep. 2015.

- P. Chavez, C. Sides, and A. Anderson, “Comparison of three different methods to merge multiresolution and multispectral data- LANDSAT TM and SPOT panchromatic,”Photogramm. Eng. Remote Sens., vol. 57, no. 3, pp. 295–303, Mar. 1991
